Contents

- The workflow for scraping a website

- Case 1: Scraping Maoyan Movies

- Case 2: Scraping Guba

- Case 3: Scraping a pharmacy website

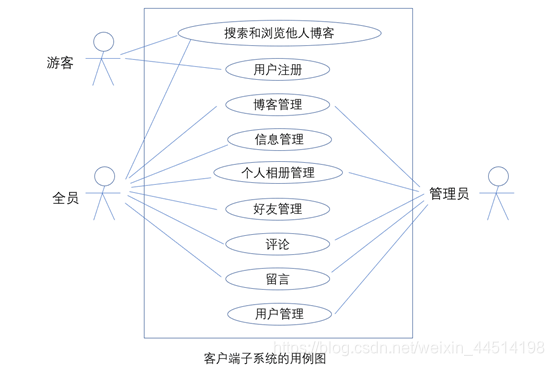

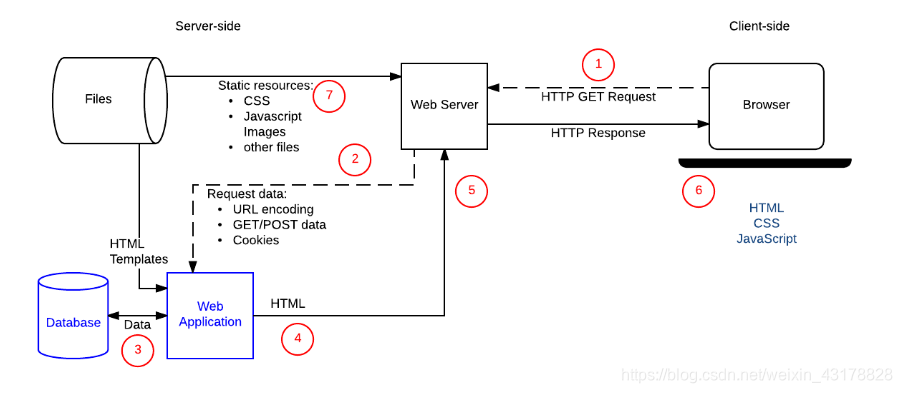

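The workflow for scraping a website

- Identify which URL on the site is the source of the data

- Briefly analyze the page structure to find where the data is stored

- Check whether the data is paginated, and work out how to request each page

- Send a request and check whether response.text contains the data we need

- Filter the data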
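The steps above can be sketched offline as follows. This is a minimal illustration, not the code used in the cases below: `SAMPLE_HTML`, `fake_fetch`, `page_urls`, `parse_page`, `crawl`, and the `https://example.com/board` URL are all hypothetical, and `fake_fetch` stands in for a real `requests.get(url, headers=...).text` call so the example runs without network access.

```python
import re

# Hypothetical page, standing in for response.text from a real request.
SAMPLE_HTML = """
<html><body>
<ul class="items">
  <li><a href="/item/1" title="First item">First item</a></li>
  <li><a href="/item/2" title="Second item">Second item</a></li>
</ul>
</body></html>
"""


def fake_fetch(url):
    """Stand-in for requests.get(url, headers=...).text (an assumption)."""
    return SAMPLE_HTML


def page_urls(base_url, pages, page_size):
    """Pagination: build one URL per page from an offset query parameter."""
    return [f"{base_url}?offset={i * page_size}" for i in range(pages)]


def parse_page(html):
    """Check that the data is present, then narrow the search step by step."""
    block = re.search(r'<ul class="items">(.*?)</ul>', html, re.S)
    if block is None:
        # The data we need is not in this response.
        return []
    rows = re.findall(r"<li>(.*?)</li>", block.group(1), re.S)
    items = []
    for row in rows:
        match = re.search(r'href="(.*?)" title="(.*?)"', row)
        if match:
            items.append({"url": match.group(1), "title": match.group(2)})
    return items


def crawl(base_url, pages, fetch=fake_fetch):
    """Fetch every page and collect the parsed items."""
    results = []
    for url in page_urls(base_url, pages, page_size=10):
        results.extend(parse_page(fetch(url)))
    return results


urls = page_urls("https://example.com/board", 3, 10)
items = crawl("https://example.com/board", 2)
print(urls)
print(items)
```

Passing the fetch function as a parameter keeps the parsing logic testable on saved HTML before any real request is sent.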

Case 1: Scraping Maoyan Movies

Goal: scrape the information for the top 100 movies

    import json
    import re

    import requests


    class Maoyan:
        def __init__(self, url):
            self.url = url
            self.movie_list = []
            self.headers = {
                'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/78.0.3904.70 Safari/537.36'
            }
            self.parse()

        def parse(self):
            # Pagination: 10 pages with 10 movies each.
            for i in range(10):
                url = self.url + '?offset={}'.format(i * 10)
                # 1. Send the request and get the response.
                response = requests.get(url, headers=self.headers)
                # Fields: 1. movie title  2. stars  3. release time  4. score
                # Filter with regular expressions. The principle: keep narrowing the search range.
                dl_pattern = re.compile(r'<dl class="board-wrapper">(.*?)</dl>', re.S)
                dl_match = dl_pattern.search(response.text)
                if dl_match is None:
                    # The movie list is missing from this response, so skip the page.
                    continue
                dd_pattern = re.compile(r'<dd>(.*?)</dd>', re.S)
                dd_list = dd_pattern.findall(dl_match.group())
                for dd in dd_list:
                    item = {}
                    # Movie title
                    movie_pattern = re.compile(r'title="(.*?)" class=', re.S)
                    movie_name = movie_pattern.search(dd).group(1)
                    # Stars
                    actor_pattern = re.compile(r'<p class="star">(.*?)</p>', re.S)
                    actor = actor_pattern.search(dd).group(1).strip()
                    # Release time: the text after the label's colon (ASCII or full-width).
                    play_time_pattern = re.compile(r'<p class="releasetime">(.*?)[:：](.*?)</p>', re.S)
                    play_time = play_time_pattern.search(dd).group(2).strip()
                    # Score: integer part plus fraction part.
                    score_pattern_1 = re.compile(r'<i class="integer">(.*?)</i>', re.S)
                    score_pattern_2 = re.compile(r'<i class="fraction">(.*?)</i>', re.S)
                    score = score_pattern_1.search(dd).group(1).strip() + score_pattern_2.search(dd).group(1).strip()
                    item['title'] = movie_name
                    item['stars'] = actor
                    item['release_time'] = play_time
                    item['score'] = score
                    self.movie_list.append(item)
            # Save the movie information to a JSON file.
            with open('movie.json', 'w', encoding='utf-8') as fp:
                json.dump(self.movie_list, fp)


    if __name__ == '__main__':
        base_url = 'https://maoyan.com/board/4'
        Maoyan(base_url)
        with open('movie.json', 'r', encoding='utf-8') as fp:
            movie_list = json.load(fp)
        print(movie_list)

Case 2: Scraping Guba

Goal: scrape the read count, comment count, title, author, update time, and detail-page URL from the first ten pages

    import json
    import re

    import requests


    class GuBa(object):
        def __init__(self):
            self.base_url = 'http://guba.eastmoney.com/default,99_%s.html'
            self.headers = {
                'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/78.0.3904.70 Safari/537.36'
            }
            self.infos = []
            self.parse()

        def parse(self):
            # Pages 1 to 10.
            for i in range(1, 11):
                response = requests.get(self.base_url % i, headers=self.headers)
                # Fields: read count, comment count, title, author, update time, detail-page URL
                ul_pattern = re.compile(r'<ul id="itemSearchList" class="itemSearchList">(.*?)</ul>', re.S)
                ul_content = ul_pattern.search(response.text)
                if not ul_content:
                    continue
                li_pattern = re.compile(r'<li>(.*?)</li>', re.S)
                li_list = li_pattern.findall(ul_content.group())
                for li in li_list:
                    item = {}
                    # The first two <cite> tags hold the read count and the comment count.
                    reader_pattern = re.compile(r'<cite>(.*?)</cite>', re.S)
                    info_list = reader_pattern.findall(li)
                    reader_num = ''
                    comment_num = ''
                    if len(info_list) >= 2:
                        reader_num = info_list[0].strip()
                        comment_num = info_list[1].strip()
                    title_pattern = re.compile(r'title="(.*?)" class="note">', re.S)
                    title = title_pattern.search(li).group(1)
                    author_pattern = re.compile(r'target="_blank"><font>(.*?)</font></a><input type="hidden"', re.S)
                    author = author_pattern.search(li).group(1)
                    date_pattern = re.compile(r'<cite class="last">(.*?)</cite>', re.S)
                    date = date_pattern.search(li).group(1)
                    # The detail link is relative, so prepend the site root.
                    detail_pattern = re.compile(r' <a href="(.*?)" title=', re.S)
                    detail_url = detail_pattern.search(li)
                    if detail_url:
                        detail_url = 'http://guba.eastmoney.com' + detail_url.group(1)
                    else:
                        detail_url = ''
                    item['title'] = title
                    item['author'] = author
                    item['date'] = date
                    item['reader_num'] = reader_num
                    item['comment_num'] = comment_num
                    item['detail_url'] = detail_url
                    self.infos.append(item)
            # Save all collected posts to a JSON file.
            with open('guba.json', 'w', encoding='utf-8') as fp:
                json.dump(self.infos, fp)


    gb = GuBa()
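One detail about saving the results: called with its default settings, `json.dump` writes non-ASCII characters as `\uXXXX` escapes, so Chinese titles and author names are hard to read in the saved file (they still load back correctly). A minimal sketch of writing readable output with `ensure_ascii=False` follows; the `records` data, the helper names, and the temporary file path are made up for illustration.

```python
import json
import os
import tempfile

# Hypothetical sample record containing non-ASCII text.
records = [{"title": "示例电影", "score": "9.0"}]


def save_records(records, path):
    """Write records as UTF-8 JSON, keeping non-ASCII characters readable."""
    with open(path, "w", encoding="utf-8") as fp:
        json.dump(records, fp, ensure_ascii=False, indent=2)


def load_records(path):
    """Read the records back, using the same encoding as when writing."""
    with open(path, "r", encoding="utf-8") as fp:
        return json.load(fp)


path = os.path.join(tempfile.mkdtemp(), "records.json")
save_records(records, path)

with open(path, encoding="utf-8") as fp:
    raw = fp.read()

print(raw)                 # the title appears as written, not as \u escapes
print(load_records(path))  # round-trips to the original list
```

When `ensure_ascii=False` is used, the file should be opened with an explicit `encoding='utf-8'` for both writing and reading, since the platform default encoding may differ.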

Case 3: Scraping a pharmacy website

Goal: scrape fifty pages of drug information

    '''
    Requirements: scrape 50 pages.
    Fields: total price, description, comment count, detail-page link.
    Use regular expressions.
    '''
    import json
    import re

    import requests


    class Drugs:
        def __init__(self):
            self.url = 'https://www.111.com.cn/categories/953710-j%s.html'
            self.headers = {
                'user-agent': 'Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/77.0.3865.90 Safari/537.36'
            }
            self.Drugs_list = []
            self.parse()

        def parse(self):
            # Pages 1 to 50, assuming page numbering starts at 1.
            for i in range(1, 51):
                response = requests.get(self.url % i, headers=self.headers)
                # Fields: drug name, total price, comment count, detail-page link
                Drugsul_pattern = re.compile('<ul id="itemSearchList" class="itemSearchList">(.*?)</ul>', re.S)
                Drugsul = Drugsul_pattern.search(response.text)
                if Drugsul is None:
                    # The product list is missing from this response, so skip the page.
                    continue
                Drugsli_list_pattern = re.compile('<li id="producteg(.*?)</li>', re.S)
                Drugsli_list = Drugsli_list_pattern.findall(Drugsul.group())
                for drug in Drugsli_list:
                    item = {}
                    # Drug name
                    name_pattern = re.compile('alt="(.*?)"', re.S)
                    name = name_pattern.search(drug).group(1)
                    # Total price
                    total_pattern = re.compile('<span>(.*?)</span>', re.S)
                    total = total_pattern.search(drug).group(1).strip()
                    # Comment count: default to '0' when the tag is absent.
                    comment_pattern = re.compile('<em>(.*?)</em>')
                    comment = comment_pattern.search(drug)
                    if comment:
                        comment_group = comment.group(1)
                    else:
                        comment_group = '0'
                    # Detail-page link: the page uses protocol-relative URLs.
                    href_pattern = re.compile('" href="//(.*?)"')
                    href = 'https://' + href_pattern.search(drug).group(1).strip()
                    item['name'] = name
                    item['total_price'] = total
                    # Store the extracted text, not the match object.
                    item['comments'] = comment_group
                    item['url'] = href
                    self.Drugs_list.append(item)


    drugs = Drugs()
    print(drugs.Drugs_list)
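Two patterns recur in all three cases: calling `.search(...).group(1)` directly, which raises `AttributeError` when a pattern does not match, and building absolute URLs by string concatenation. A possible way to make both more robust is sketched below. `first_group` is a hypothetical helper, the HTML fragment and the `example.com` URLs are invented for illustration, and `urljoin` comes from the standard library's `urllib.parse`.

```python
import re
from urllib.parse import urljoin


def first_group(pattern, text, default=""):
    """Return group 1 of the first match, or `default` when nothing matches."""
    match = re.search(pattern, text, re.S)
    return match.group(1).strip() if match else default


# Hypothetical list item, imitating the kind of markup the scripts parse.
li = (
    '<li><a class="x" href="//www.example.com/product/1.html">A</a>'
    "<span> 12.50 </span></li>"
)

price = first_group(r"<span>(.*?)</span>", li)
comments = first_group(r"<em>(.*?)</em>", li, default="0")  # no <em> tag here
href = first_group(r'href="(.*?)"', li)

# urljoin resolves protocol-relative and root-relative links against a base URL.
absolute = urljoin("https://www.example.com/categories/", href)
relative = urljoin("http://guba.example.com/list.html", "/news/1.html")

print(price, comments)
print(absolute)
print(relative)
```

With a helper like this, a missing field yields a default value for that one record instead of stopping the whole crawl.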