The complete code is available here.

- Get the IDs of popular provinces and municipalities

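Every city, attraction, and other entry on Mafengwo has its own 5-digit numeric ID. Our first step is to collect these IDs (mddid) from the destination page, http://www.MaFengWo.cn/mdd/, so that we can use them in the later analysis.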

We start by extracting each destination's ID, which is the numeric part of its href (e.g. 10065).

    import re
    from urllib import parse

    from bs4 import BeautifulSoup


    def find_province_url(url):
        # get_html_text and municipality_directly_info are defined in the complete code
        html = get_html_text(url)
        soup = BeautifulSoup(html, 'lxml')
        municipality_directly_info(soup)  # handle the municipalities
        dts = soup.find('div', class_='hot-list clearfix').find_all('dt')
        name = []
        provice_id = []
        urls = []
        print('Processing non-municipality destinations')
        for dt in dts:
            all_a = dt.find_all('a')
            for a in all_a:
                name.append(a.text)
                link = a.attrs['href']
                # Extract this province's ID from the link
                provice_id.append(re.search(r'/(\d+)\.html', link).group(1))
                # Build the link to this province's popular cities
                data_cy_p = link.replace('travel-scenic-spot/mafengwo', 'mdd/citylist')
                urls.append(parse.urljoin(url, data_cy_p))
        return name, provice_id, urls
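To make the ID extraction step easier to check in isolation, here is a minimal sketch that uses only the standard library. The helper names `extract_mdd_id` and `build_citylist_url` and the sample href are hypothetical; the href is only assumed to have the shape implied by the replace call above.

```python
import re
from urllib import parse

BASE_URL = 'http://www.mafengwo.cn/mdd/'


def extract_mdd_id(href):
    """Return the numeric ID embedded in a destination href, or None."""
    match = re.search(r'/(\d+)\.html', href)
    return match.group(1) if match else None


def build_citylist_url(href, base_url=BASE_URL):
    """Turn a destination href into the absolute URL of its city list page."""
    path = href.replace('travel-scenic-spot/mafengwo', 'mdd/citylist')
    return parse.urljoin(base_url, path)


# Hypothetical href, shaped like the links on the destination page.
sample_href = '/travel-scenic-spot/mafengwo/10065.html'
print(extract_mdd_id(sample_href))      # 10065
print(build_citylist_url(sample_href))  # http://www.mafengwo.cn/mdd/citylist/10065.html
```

Returning None instead of raising lets the caller skip links that do not point to a destination page.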

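Every destination other than the municipalities has a list of popular cities. Inspecting the page shows that this list is loaded through an Ajax POST request, which must carry the mddid obtained in the first step together with the page number.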

    import requests
    from bs4 import BeautifulSoup
    from concurrent.futures import ThreadPoolExecutor, as_completed


    def parse_city_info(response):
        text = response.json()['list']
        soup = BeautifulSoup(text, 'lxml')
        items = soup.find_all('li', class_="item")
        info = []
        nums = 0
        for item in items:
            city = {}
            city['city_id'] = item.find('a').attrs['data-id']
            city['city_name'] = item.find('div', class_='title').text.split()[0]
            city['nums'] = int(item.find('b').text)
            nums += city['nums']
            info.append(city)
        return info, nums


    def func(page, provice_id):
        print(f'Parsing page {page}')
        data = {'mddid': provice_id, 'page': page}
        response = requests.post('http://www.mafengwo.cn/mdd/base/list/pagedata_citylist', data=data)
        info, nums = parse_city_info(response)  # each city's name, ID, and visitor count
        return (info, nums)


    def parse_city_url(url, provice_id):
        """
        :param url: province URL
        :param provice_id: province ID
        :return: dict with the province's total visitor count and its city list
        """
        html = get_html_text(url)
        provice_info = {}  # stores this province's information
        soup = BeautifulSoup(html, 'lxml')
        # Total number of popular-city pages for this province
        pages = int(soup.find(class_="pg-last _j_pageitem").attrs['data-page'])
        city_info = []
        sum_nums = 0  # running total of visitors for this province
        tpool = ThreadPoolExecutor(1)
        obj = []
        for page in range(1, pages + 1):  # each page is fetched with a POST request
            t = tpool.submit(func, page, provice_id)
            obj.append(t)
        for i in as_completed(obj):
            info, nums = i.result()
            sum_nums += nums
            city_info.extend(info)
        provice_info['sum_num'] = sum_nums
        provice_info['citys'] = city_info
        return provice_info
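The page-by-page aggregation can be tried without touching the network. The sketch below is an assumption-laden illustration rather than the author's code: `fetch_page_stub` and its data are made up and stand in for the real POST request, and `collect_province` is a hypothetical name.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def fetch_page_stub(page, mddid):
    """Stand-in for the real POST; returns (cities, visitor_total) for one page."""
    # Made-up data keyed by page number, purely for illustration.
    fake_pages = {
        1: [{'city_id': '1', 'city_name': 'A', 'nums': 300},
            {'city_id': '2', 'city_name': 'B', 'nums': 200}],
        2: [{'city_id': '3', 'city_name': 'C', 'nums': 100}],
    }
    cities = fake_pages.get(page, [])
    return cities, sum(c['nums'] for c in cities)


def collect_province(mddid, pages, fetch_page=fetch_page_stub, workers=4):
    """Fetch every page concurrently and merge the per-page results."""
    city_info, total = [], 0
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(fetch_page, page, mddid)
                   for page in range(1, pages + 1)]
        for future in as_completed(futures):
            cities, nums = future.result()
            total += nums
            city_info.extend(cities)
    # Completion order is not guaranteed, so sort for a stable result.
    city_info.sort(key=lambda c: c['nums'], reverse=True)
    return {'sum_num': total, 'citys': city_info}


result = collect_province('12345', pages=2)
print(result['sum_num'])                          # 600
print([c['city_name'] for c in result['citys']])  # ['A', 'B', 'C']
```

Passing the fetcher in as a parameter keeps the merging logic testable; in a real run it would be replaced by a function that sends the POST request with the mddid and page number.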

2. At this point we have the IDs of all the popular cities. The next step is to use each ID to fetch that city's attractions and food.

    # Methods of the crawler class (class definition not shown here)
    def get_city_food(self, id_):
        url = 'http://www.mafengwo.cn/cy/' + id_ + '/gonglve.html'
        print(f'Parsing {url}')
        soup = self.get_html_soup(url)
        list_rank = soup.find('ol', class_='list-rank')
        food = [k.text for k in list_rank.find_all('h3')]
        food_count = [int(k.text) for k in list_rank.find_all('span', class_='trend')]
        food_info = []
        for i, j in zip(food, food_count):
            fd = {}
            fd['food_name'] = i
            fd['food_count'] = j
            food_info.append(fd)
        return food_info

    def get_city_jd(self, id_):
        """
        :param id_: city ID
        :return: attraction names and comment counts
        """
        url = 'http://www.mafengwo.cn/jd/' + id_ + '/gonglve.html'
        print(f'Parsing {url}')
        soup = self.get_html_soup(url)
        jd_info = []
        try:
            all_h3 = soup.find('div', class_='row-top5').find_all('h3')
        except AttributeError:  # the page has no top-5 block
            print('No attractions found')
            jd = {}
            jd['jd_name'] = ''
            jd['jd_count'] = 0
            jd_info.append(jd)
            return jd_info
        for h3 in all_h3:
            jd = {}
            jd['jd_name'] = h3.find('a')['title']
            try:
                jd['jd_count'] = int(h3.find('em').text)
            except (AttributeError, ValueError):  # missing or non-numeric count
                print('No comments')
                jd['jd_count'] = 0
            jd_info.append(jd)
        return jd_info
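Scraped counts are sometimes empty or padded with whitespace, so it can help to convert them defensively before pairing them with names. The following is a small sketch of that idea; `to_int`, `build_records`, and the sample data are hypothetical and not part of the original crawler.

```python
def to_int(text, default=0):
    """Convert scraped text to an int, falling back when it is missing or malformed."""
    try:
        return int(str(text).strip())
    except (TypeError, ValueError):
        return default


def build_records(names, counts, name_key, count_key):
    """Pair names with counts; zip stops at the shorter of the two lists."""
    return [{name_key: n, count_key: to_int(c)} for n, c in zip(names, counts)]


# Made-up sample values, shaped like text pulled from the page.
foods = build_records(['hotpot', 'noodles', 'dumplings'],
                      ['120', ' 45 ', ''],
                      'food_name', 'food_count')
top = max(foods, key=lambda f: f['food_count'])
print(top['food_name'])  # hotpot
```

Because zip truncates to the shorter input, a page where some items lack a count element can silently drop trailing names, which is worth checking when the two lists differ in length.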

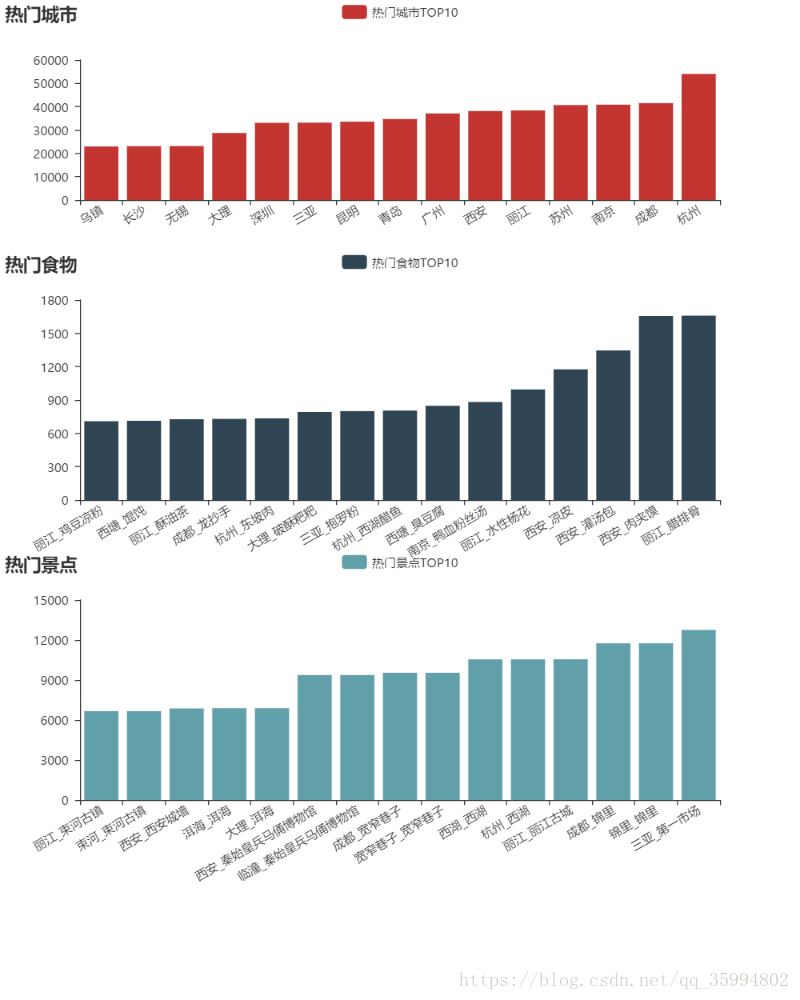

- Data analysis
