A Scrapy crawler for a Chinese gaokao (college entrance exam) application website

- 1. Environment setup

- 2. Problem analysis

- 3. spider

- 4. item

- 5. settings

- 6. pipelines

1. Environment setup

Python 3.8.3

PyCharm

Third-party packages required by the project:

pip install scrapy fake-useragent requests virtualenv -i https://pypi.douban.com/simple

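1.1 Create a virtual environment

Switch to the target directory and create it there:

virtualenv .venv

After creating it, remember to activate the virtual environment (source .venv/bin/activate on Linux/macOS, .venv\Scripts\activate on Windows).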
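To confirm that the activation actually took effect before installing or running anything, a short check like the one below can help. It is a minimal sketch: the function name in_virtualenv is made up for this example, and the sys.real_prefix branch is only there on the assumption that an older virtualenv release might be in use.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the running interpreter belongs to a virtual environment."""
    # Older virtualenv releases marked the environment with sys.real_prefix.
    if getattr(sys, "real_prefix", None) is not None:
        return True
    # venv and current virtualenv releases point sys.prefix at the environment,
    # while sys.base_prefix keeps pointing at the base Python installation.
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


print("virtual environment active:", in_virtualenv())
print("interpreter prefix:", sys.prefix)
```

If the first line prints False, the packages would be installed into the global interpreter rather than into .venv.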

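1.2 Create the project (the code below assumes the project is named lianjia)

scrapy startproject PROJECT_NAME

1.3 Open the project in PyCharm and set the virtual environment created above as the project interpreter

1.4 Create the spider

scrapy genspider SPIDER_NAME URL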

1.5 Edit allowed_domains in the generated spider so that each entry is a bare domain, with the https:// prefix removed
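As an illustration of what "bare domain" means here, the sketch below derives an allowed_domains entry from a full URL with the standard library. The helper name to_allowed_domain is hypothetical and not part of Scrapy.

```python
from urllib.parse import urlparse


def to_allowed_domain(url: str) -> str:
    """Return the bare host name of a URL, suitable for allowed_domains."""
    parsed = urlparse(url)
    # urlparse only fills in netloc when a scheme (or leading //) is present,
    # so fall back to the first path segment for input such as "api.eol.cn/gkcx".
    host = parsed.netloc or parsed.path.split("/")[0]
    # Drop an explicit port, e.g. "example.com:8080" becomes "example.com".
    return host.split(":")[0]


print(to_allowed_domain("https://api.eol.cn/gkcx/api/"))                # api.eol.cn
print(to_allowed_domain("https://static-data.eol.cn/www/2.0/school/"))  # static-data.eol.cn
```

Both results match the two entries used in the spider's allowed_domains later in this article.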

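2. Problem analysis

Inspect the responses the page receives.

The school data is returned as JSON.

Look at the URL of that request.

Open the URL directly to view the data.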
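The step from the list JSON to the per-school detail addresses can be sketched without Scrapy. In the example below, the data -> item -> school_id nesting and the detail URL pattern are taken from the spider code in this article; the sample payload and its ids are made up, and the function name detail_urls is hypothetical.

```python
import json

# Hypothetical, heavily trimmed sample of one list response.
# Only the data -> item -> school_id nesting mirrors the real payload.
SAMPLE_LIST_RESPONSE = '{"data": {"item": [{"school_id": 101}, {"school_id": 102}]}}'

# Detail JSON address used by the spider; only the school id varies.
DETAIL_URL = "https://static-data.eol.cn/www/2.0/school/{school_id}/info.json"


def detail_urls(response_text: str) -> list:
    """Return one detail-JSON URL per school found in a list response."""
    payload = json.loads(response_text)
    # Guard against a missing or null "data" / "item" entry.
    data = payload.get("data") or {}
    schools = data.get("item") or []
    return [
        DETAIL_URL.format(school_id=school["school_id"])
        for school in schools
        if "school_id" in school
    ]


for url in detail_urls(SAMPLE_LIST_RESPONSE):
    print(url)
```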

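From the list data, build the request address of each school's detail page, fetch the detail data, and extract the following fields:

- School name

- School email

- School phone

- School address

- School postcode

- School website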
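The field extraction can be pictured as a mapping from item field names to JSON keys. The sketch below uses the key names that the spider code in this article reads (name, email, school_email, and so on); the sample values are placeholders, and FIELD_MAP and extract_school are hypothetical names introduced for illustration.

```python
import json

# Placeholder detail response; the key names follow the spider code,
# the values are invented.
SAMPLE_DETAIL_RESPONSE = json.dumps({
    "data": {
        "name": "Example University",
        "email": "admissions@example.edu",
        "phone": "000-0000000",
        "address": "1 Example Road",
        "postcode": "000000",
        "site": "https://www.example.edu",
    }
})

# item field -> JSON key. The *_one / *_two pairs exist because the spider
# reads two different keys for each of email, site and phone.
FIELD_MAP = {
    "school_name": "name",
    "school_email_one": "email",
    "school_email_two": "school_email",
    "school_address": "address",
    "school_postcode": "postcode",
    "school_site_one": "site",
    "school_site_two": "school_site",
    "school_phone_one": "phone",
    "school_phone_two": "school_phone",
}


def extract_school(response_text: str) -> dict:
    """Map one detail response to a flat dict; absent keys become None."""
    data = json.loads(response_text).get("data") or {}
    return {field: data.get(key) for field, key in FIELD_MAP.items()}


school = extract_school(SAMPLE_DETAIL_RESPONSE)
print(school["school_name"])
print(school["school_email_two"])  # None: the sample has no "school_email" key
```

Keys that a school's JSON does not contain come back as None, which matters later when the values are written to a file.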

3. spider

import json

import scrapy

from lianjia.items import china_school_Item

# School list API; only the page parameter changes between requests.
LIST_URL = (
    'https://api.eol.cn/gkcx/api/?access_token=&admissions=&central=&department='
    '&dual_class=&f211=&f985=&is_doublehigh=&is_dual_class=&keyword=&nature='
    '&page={page}&province_id=&ranktype=&request_type=1&school_type=&signsafe='
    '&size=20&sort=view_total&top_school_id=&type='
    '&uri=apidata/api/gk/school/lists'
)
# Detail JSON for a single school.
DETAIL_URL = 'https://static-data.eol.cn/www/2.0/school/{school_id}/info.json'


class ChinaSchoolSpider(scrapy.Spider):
    name = 'china_school'
    allowed_domains = ['api.eol.cn', 'static-data.eol.cn']
    # List pages 1 to 142, 20 schools per page (the page count is hard-coded).
    start_urls = [LIST_URL.format(page=page) for page in range(1, 143)]

    def parse(self, response):
        # The list API returns JSON; the schools sit under data -> item.
        data = json.loads(response.text).get('data') or {}
        for school in data.get('item') or []:
            yield scrapy.Request(
                url=DETAIL_URL.format(school_id=school.get('school_id')),
                callback=self.detail_parse,
            )

    def detail_parse(self, response):
        data = json.loads(response.text).get('data') or {}
        item = china_school_Item()
        # Email, site and phone each appear under two keys in the detail JSON.
        item['school_name'] = data.get('name')
        item['school_email_one'] = data.get('email')
        item['school_email_two'] = data.get('school_email')
        item['school_address'] = data.get('address')
        item['school_postcode'] = data.get('postcode')
        item['school_site_one'] = data.get('site')
        item['school_site_two'] = data.get('school_site')
        item['school_phone_one'] = data.get('phone')
        item['school_phone_two'] = data.get('school_phone')
        yield item

4. item

# Define here the models for your scraped items
#
# See documentation in:
# https://docs.scrapy.org/en/latest/topics/items.html

import scrapy


class china_school_Item(scrapy.Item):
    # define the fields for your item here like:
    school_name = scrapy.Field()
    school_email_one = scrapy.Field()
    school_email_two = scrapy.Field()
    school_address = scrapy.Field()
    school_postcode = scrapy.Field()
    school_site_one = scrapy.Field()
    school_site_two = scrapy.Field()
    school_phone_one = scrapy.Field()
    school_phone_two = scrapy.Field()

5. settings

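import random

from fake_useragent import UserAgent

ua = UserAgent()

# Evaluated once when the settings load, so one random user agent is used for the whole run.
USER_AGENT = ua.random

# Do not apply robots.txt rules.
ROBOTSTXT_OBEY = False

# Base delay in seconds, picked once at startup. With Scrapy's default
# RANDOMIZE_DOWNLOAD_DELAY = True, each wait is 0.5x to 1.5x this value.
DOWNLOAD_DELAY = random.uniform(0.5, 1)

ITEM_PIPELINES = {
    'lianjia.pipelines.China_school_Pipeline': 300,
}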
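Because USER_AGENT = ua.random runs only once, when the settings module is loaded, every request in a crawl carries the same user agent. If a different one per request is wanted, one possible approach is a small downloader middleware. The sketch below is only that: the class name RandomUserAgentMiddleware and the hard-coded USER_AGENTS list are assumptions for illustration, and FakeRequest is a stand-in so the example runs without Scrapy installed.

```python
import random

# Illustrative user-agent strings; in the project they could instead be
# drawn from fake_useragent's UserAgent().random.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Safari/605.1.15",
]


class RandomUserAgentMiddleware:
    """Hypothetical downloader middleware that picks a user agent per request."""

    def process_request(self, request, spider):
        # Scrapy calls this hook for every outgoing request; returning None
        # lets the request continue through the remaining middlewares.
        request.headers["User-Agent"] = random.choice(USER_AGENTS)
        return None


class FakeRequest:
    """Stand-in for scrapy.Request, exposing only a headers mapping."""

    def __init__(self):
        self.headers = {}


request = FakeRequest()
RandomUserAgentMiddleware().process_request(request, spider=None)
print(request.headers["User-Agent"])
```

In a real project the class would live in the project's middlewares.py and be enabled through the DOWNLOADER_MIDDLEWARES setting, for example under a path such as lianjia.middlewares.RandomUserAgentMiddleware (a hypothetical path that depends on the project layout).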

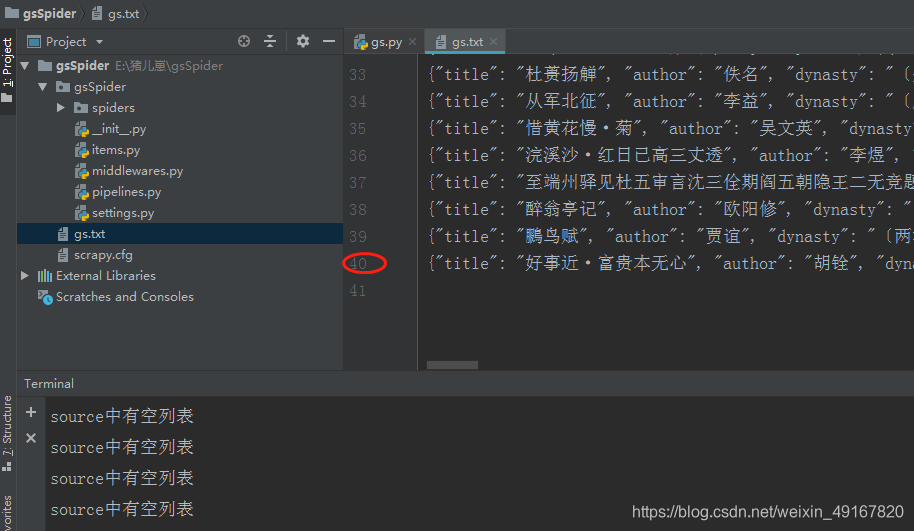

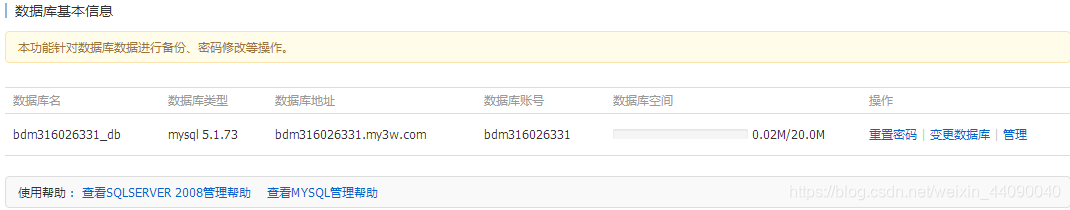

6. pipelines

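import csv

# Output columns, in the same order as the fields of china_school_Item.
FIELDS = [
    'school_name', 'school_email_one', 'school_email_two',
    'school_address', 'school_postcode', 'school_site_one',
    'school_site_two', 'school_phone_one', 'school_phone_two',
]


class China_school_Pipeline:
    def open_spider(self, spider):
        # The file holds tab-separated text rather than a real Excel workbook,
        # so it is saved as .tsv; Excel can still open it.
        self.fp = open('./china_school.tsv', mode='w', encoding='utf-8', newline='')
        self.writer = csv.writer(self.fp, delimiter='\t')
        self.writer.writerow(FIELDS)

    def process_item(self, item, spider):
        # One row per school; csv writes None values as empty cells.
        self.writer.writerow([item.get(field) for field in FIELDS])
        return item

    def close_spider(self, spider):
        # Close the output file.
        self.fp.close()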
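Fields that are absent from a school's detail JSON arrive in the pipeline as None, and '\t'.join(...) accepts only strings, so each row has to be normalized before it is written. The sketch below shows one way to do that with plain dicts. The helper name to_row and the sample item are made up for illustration; the column list matches the item fields defined earlier.

```python
FIELDS = [
    "school_name", "school_email_one", "school_email_two",
    "school_address", "school_postcode", "school_site_one",
    "school_site_two", "school_phone_one", "school_phone_two",
]


def to_row(item: dict) -> str:
    """Format one item as a tab-separated line with a fixed column order."""
    cells = []
    for field in FIELDS:
        value = item.get(field)
        # None (missing key or JSON null) becomes an empty cell.
        text = "" if value is None else str(value)
        # Tabs and newlines inside a value would break the column layout.
        cells.append(text.replace("\t", " ").replace("\n", " "))
    return "\t".join(cells) + "\n"


sample = {"school_name": "Example University", "school_phone_one": None}
print(repr(to_row(sample)))
```

Using a fixed column list also keeps the columns aligned with the header row even if an item's fields were filled in a different order.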