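Contents

- Using OpenVINO (inference uses the xml and bin files generated from ONNX)

- 0. List the supported inference devices:

- 1. Build the model:

- 2. Preprocessing: convert cv::Mat to ov::Tensor

- 3. Extract the results after inference:

- Inference with OpenCV's built-in dnn module

- 0. Build the model

- 1. Prepare the data: reshape the original Mat into the input Mat for cv::dnn::Net

- 2. Extract the results after inference: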

Using OpenVINO (inference uses the xml and bin files generated from ONNX)

0. List the supported inference devices:

    ov::Core ie;
    std::vector<std::string> availableDevices = ie.get_available_devices();
    for (size_t i = 0; i < availableDevices.size(); i++) {
        qDebug() << "supported device name : " << availableDevices[i].c_str();
    }

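1. Build the model:

    ov::Core ie;
    // xml and bin are the paths of the IR files generated from the ONNX model
    auto network = ie.read_model(xml, bin);
    // infer_request is assumed to be declared outside this snippet
    // (e.g. as a class member), so it outlives the if/else scopes
    if (gpu)
    {
        auto compiled_model = ie.compile_model(network, "GPU");
        infer_request = compiled_model.create_infer_request();
    }
    else
    {
        auto compiled_model = ie.compile_model(network, "CPU");
        infer_request = compiled_model.create_infer_request();
    }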

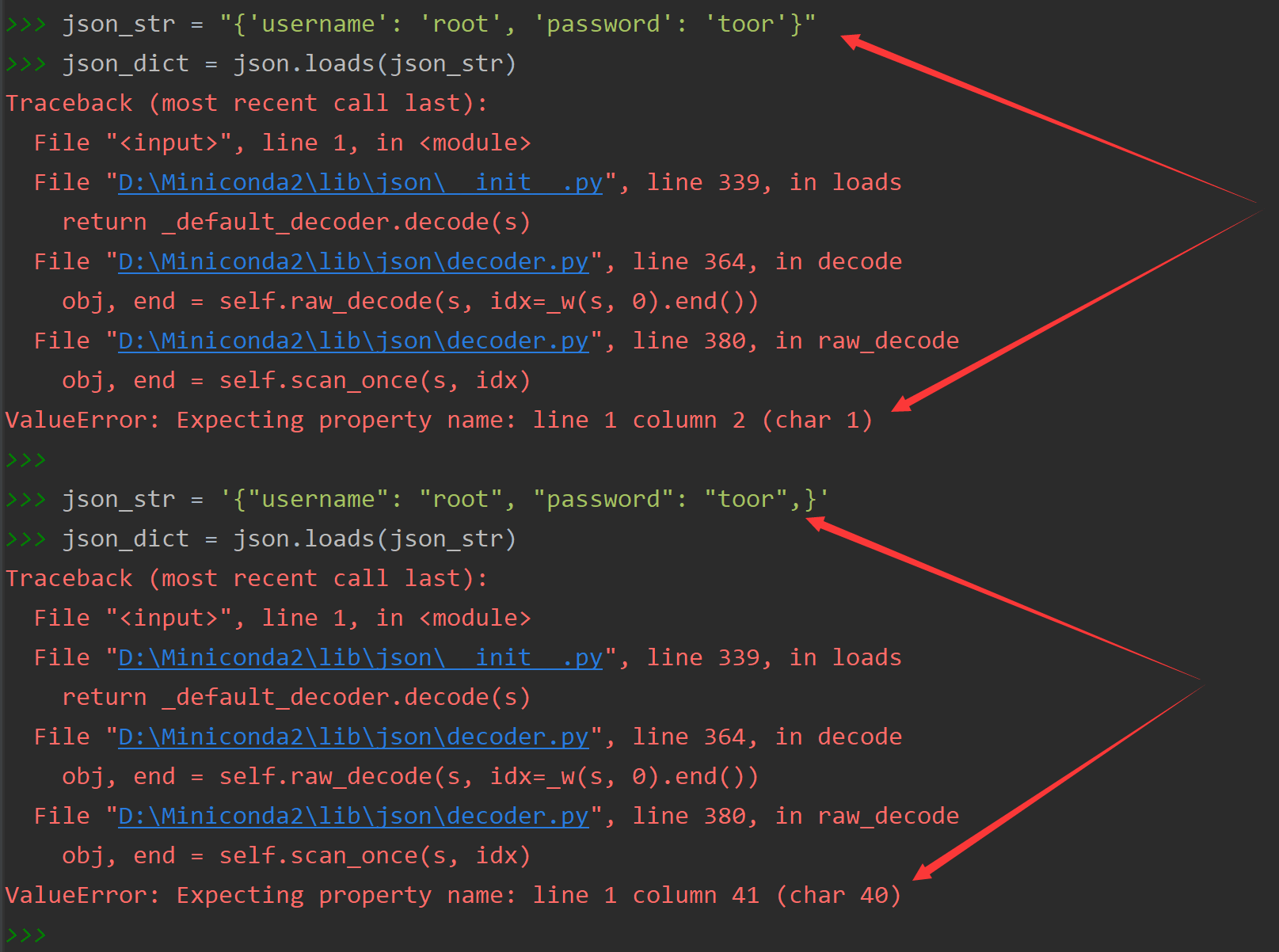

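2. Preprocessing: convert cv::Mat to ov::Tensor

In memory, a float* buffer is a one-dimensional array stored linearly. To copy values between a Mat and a tensor, the indices of both therefore have to be reduced to linear offsets, and the elements are then assigned one by one.

In general: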

    // nvinfer1::Dims is TensorRT's dimension type; dimensions is read in NCHW order.
    // Indexing image.data directly assumes a continuous 8-bit Mat.
    void cvImageToTensor(const cv::Mat & image, float *tensor, nvinfer1::Dims dimensions)
    {
        const size_t channels = dimensions.d[1];
        const size_t height = dimensions.d[2];
        const size_t width = dimensions.d[3];
        // TODO: validate dimensions match
        const size_t stridesCv[3] = { width * channels, channels, 1 };  // HWC strides (Mat)
        const size_t strides[3] = { height * width, width, 1 };         // CHW strides (tensor)
        for (size_t i = 0; i < height; i++) {
            for (size_t j = 0; j < width; j++) {
                for (size_t k = 0; k < channels; k++) {
                    const size_t offsetCv = i * stridesCv[0] + j * stridesCv[1] + k * stridesCv[2];
                    const size_t offset = k * strides[0] + i * strides[1] + j * strides[2];
                    tensor[offset] = (float) image.data[offsetCv];
                }
            }
        }
    }
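To make the two linear offsets concrete, here is a minimal sketch that uses only the standard library, so it can be tried without OpenCV or an inference runtime. The function name `hwcToChw` and the use of `std::vector` in place of `cv::Mat` and a tensor are assumptions made for illustration. It also assumes the source buffer has no row padding.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: copy an interleaved HWC uint8 image into a planar
// CHW float buffer. hwc must hold height * width * channels bytes, no padding.
std::vector<float> hwcToChw(const std::vector<std::uint8_t>& hwc,
                            std::size_t height, std::size_t width,
                            std::size_t channels)
{
    assert(hwc.size() == height * width * channels);
    std::vector<float> chw(hwc.size());
    for (std::size_t i = 0; i < height; ++i) {
        for (std::size_t j = 0; j < width; ++j) {
            for (std::size_t k = 0; k < channels; ++k) {
                const std::size_t src = (i * width + j) * channels + k;  // HWC offset
                const std::size_t dst = (k * height + i) * width + j;    // CHW offset
                chw[dst] = static_cast<float>(hwc[src]);
            }
        }
    }
    return chw;
}
```

For a 2x2 image with 3 channels stored as `{1,2,3, 4,5,6, 7,8,9, 10,11,12}`, the first plane of the result holds channel 0 of every pixel (1, 4, 7, 10), followed by the planes for channels 1 and 2.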

Or use OpenCV's own element accessor at<>:

    cv::Mat img = this->resize_image(frame, &newh, &neww, &padh, &padw);
    ov::Tensor input_tensor1 = infer_request.get_input_tensor(0);
    auto data1 = input_tensor1.data<float>();
    cv::cvtColor(img, img, cv::COLOR_BGR2RGB);
    for (int h = 0; h < 640; h++)
    {
        for (int w = 0; w < 640; w++)
        {
            for (int c = 0; c < 3; c++)
            {
                // The tensor is laid out as CHW; the target tensor here is (1,3,640,640)
                int out_index = c * 640 * 640 + h * 640 + w;
                // The Mat is laid out as HWC; the source Mat is (640,640,3)
                data1[out_index] = (float(img.at<cv::Vec3b>(h, w)[c]) - 127.5) / 128.0;
            }
        }
    }
    infer_request.infer();
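One practical difference between at<> and raw indexing into the data pointer is that at<> goes through the Mat's row stride (step). It therefore still addresses the right bytes when rows are padded or the Mat is a region of interest, whereas an offset built from width * channels assumes continuous storage. The sketch below imitates that with a plain byte buffer. The function name `paddedHwcToChw` and the `rowStep` parameter are hypothetical stand-ins rather than OpenCV API, and the normalization is the same (x - 127.5) / 128 used above.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: rowStep is the number of bytes per image row including
// any padding (the role cv::Mat::step plays for a real Mat).
std::vector<float> paddedHwcToChw(const std::uint8_t* data, std::size_t rowStep,
                                  std::size_t height, std::size_t width,
                                  std::size_t channels)
{
    assert(rowStep >= width * channels);
    std::vector<float> chw(channels * height * width);
    for (std::size_t h = 0; h < height; ++h) {
        const std::uint8_t* row = data + h * rowStep;  // start of row h, skipping padding
        for (std::size_t w = 0; w < width; ++w) {
            for (std::size_t c = 0; c < channels; ++c) {
                const float pixel = static_cast<float>(row[w * channels + c]);
                chw[(c * height + h) * width + w] = (pixel - 127.5f) / 128.0f;
            }
        }
    }
    return chw;
}
```

With this normalization, a pixel value of 0 maps to -0.99609375 and 255 maps to 0.99609375, so the tensor values stay just inside the range (-1, 1).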

3. Extract the results after inference:

    // n is the index of the output to read
    float* pdata_score = infer_request.get_output_tensor(n).data<float>();

Inference with OpenCV's built-in dnn module

0. Build the model

An ONNX model is used here.

    cv::dnn::Net net;  // class member declaration
    this->net = cv::dnn::readNet(config.modelfile);

1. Prepare the data: reshape the original Mat into the input Mat for cv::dnn::Net

    cv::Mat img = this->resize_image(frame, &newh, &neww, &padh, &padw);
    cv::Mat blob;
    // Subtracts the mean 127.5, swaps the R and B channels and scales by 1/128.0;
    // the resulting blob has shape (1,3,640,640)
    cv::dnn::blobFromImage(img, blob, 1 / 128.0, cv::Size(this->inpWidth, this->inpHeight), cv::Scalar(127.5, 127.5, 127.5), true, false);
    this->net.setInput(blob);
    std::vector<cv::Mat> outs;
    this->net.forward(outs, this->net.getUnconnectedOutLayersNames());
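With these arguments, the blob ends up holding the same values as the manual OpenVINO preprocessing: each output value is (pixel - mean) * scalefactor, and with swapRB set, channel 0 of the blob is the R channel of the BGR input. The following is a sketch of that arithmetic only, using the standard library. It is not OpenCV's implementation, it leaves out the resize step, and the function name `bgrToNormalizedRgbBlob` is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: turn an interleaved BGR uint8 image (HWC, no padding)
// into a planar RGB float blob (CHW) with value = (pixel - mean) * scale.
std::vector<float> bgrToNormalizedRgbBlob(const std::vector<std::uint8_t>& bgr,
                                          std::size_t height, std::size_t width,
                                          float scale, float mean)
{
    const std::size_t channels = 3;
    assert(bgr.size() == height * width * channels);
    std::vector<float> blob(bgr.size());
    for (std::size_t h = 0; h < height; ++h) {
        for (std::size_t w = 0; w < width; ++w) {
            for (std::size_t c = 0; c < channels; ++c) {
                const std::size_t srcC = 2 - c;  // R/B swap: output channel 0 reads input channel 2
                const float pixel = static_cast<float>(bgr[(h * width + w) * channels + srcC]);
                blob[(c * height + h) * width + w] = (pixel - mean) * scale;
            }
        }
    }
    return blob;
}
```

Calling it with `scale = 1.0f / 128.0f` and `mean = 127.5f` mirrors the scalefactor and mean passed to blobFromImage above. Because the mean is the same for all three channels, it makes no difference whether the channel swap happens before or after the subtraction.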

2. Extract the results after inference:

    float* pdata_score = (float*)outs[n * 3].data;
