1. Create a Maven project

In the IDE, choose New Project and create a Maven project.

2. Add dependencies

    <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-client -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>2.7.2</version>
        <scope>provided</scope>
    </dependency>
    <!-- https://mvnrepository.com/artifact/org.apache.hadoop/hadoop-common -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>2.7.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs</artifactId>
        <version>2.7.2</version>
    </dependency>

3. Upload, download, and delete HDFS files from Java

    package com.sanqian.hdfs;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataInputStream;
    import org.apache.hadoop.fs.FSDataOutputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IOUtils;

    import java.io.FileInputStream;
    import java.io.FileOutputStream;
    import java.io.IOException;

    /**
     * HDFS file operations: upload, download, and delete files.
     */
    public class HdfsOp {

        public static void main(String[] args) throws IOException {
            // Create a configuration object
            Configuration conf = new Configuration();
            // Point the client at the HDFS NameNode
            conf.set("fs.defaultFS", "hdfs://192.168.21.101:9000");
            // Get the object used to operate on HDFS
            FileSystem fileSystem = FileSystem.get(conf);
            // Upload a file
            //put(fileSystem);
            // Download a file
            //get(fileSystem);
            // Delete a file
            delete(fileSystem);
        }

        /**
         * Delete a file or directory.
         * @param fileSystem
         * @throws IOException
         */
        private static void delete(FileSystem fileSystem) throws IOException {
            // The second argument controls whether the delete is recursive
            boolean flag = fileSystem.delete(new Path("/user/root/scala-2.11.8.zip"), true);
            if (flag) {
                System.out.println("Delete succeeded");
            } else {
                System.out.println("Delete failed");
            }
        }

        /**
         * Download a file.
         * @param fileSystem
         * @throws IOException
         */
        private static void get(FileSystem fileSystem) throws IOException {
            // Open an input stream on the file in HDFS
            FSDataInputStream fis = fileSystem.open(new Path("/user/root/output/part-r-00000"));
            // Open an output stream on the local file
            FileOutputStream fos = new FileOutputStream("D:\\data\\part-00000");
            // Copy HDFS -> local; the last argument closes both streams when done
            IOUtils.copyBytes(fis, fos, 1024, true);
        }

        /**
         * Upload a file.
         * @param fileSystem
         * @throws IOException
         */
        private static void put(FileSystem fileSystem) throws IOException {
            // Open an input stream on the local file
            FileInputStream fis = new FileInputStream("D:\\data\\scala-2.11.8.zip");
            // Open an output stream on the target file in HDFS
            FSDataOutputStream fos = fileSystem.create(new Path("/user/root/scala-2.11.8.zip"));
            // Copy local -> HDFS with the utility class; this is the actual upload
            IOUtils.copyBytes(fis, fos, 1024, true);
        }
    }
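Both the upload and the download boil down to copying one stream into another with a 1024-byte buffer and closing both ends. As a rough illustration of that idea (this is a plain-JDK sketch, not Hadoop's actual implementation; the class and method names `CopySketch` and `copyAndClose` are made up for this example):

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;

/** Plain-JDK sketch of a buffered stream copy that closes both streams. */
final class CopySketch {

    /**
     * Copies everything from in to out through a buffer of bufferSize bytes,
     * then closes both streams. Returns the number of bytes copied.
     */
    static long copyAndClose(InputStream in, OutputStream out, int bufferSize) throws IOException {
        // try-with-resources closes both streams even if the copy fails
        try (InputStream source = in; OutputStream sink = out) {
            byte[] buffer = new byte[bufferSize];
            long total = 0;
            int read;
            // read() returns -1 once the input is exhausted
            while ((read = source.read(buffer)) != -1) {
                sink.write(buffer, 0, read);
                total += read;
            }
            return total;
        }
    }

    public static void main(String[] args) throws IOException {
        // In-memory streams stand in for the local file and the HDFS file
        byte[] data = "hello hdfs".getBytes(StandardCharsets.UTF_8);
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        long copied = copyAndClose(new ByteArrayInputStream(data), out, 1024);
        System.out.println(copied + " bytes copied");
    }
}
```

In the real program the two streams would be a `FileInputStream` and an `FSDataOutputStream` (or the reverse for a download); only the direction changes.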

4. Problems and solutions

Problem 1: An error is raised when accessing HDFS

org.apache.hadoop.security.AccessControlException:Permission denied: user=Administrator, access=WRITE,inode="/output":hadoop:supergroup:drwxr-xr-x
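The fields in this message explain the denial: the client runs as `Administrator`, the directory is owned by `hadoop:supergroup`, and its mode is `drwxr-xr-x`, so only the owner has the write bit. A small sketch of that check follows. It is a simplification: real HDFS also treats the superuser specially, and the `inGroup` flag here is an assumption because the sketch has no user database. `PermissionSketch` and `canWrite` are illustrative names.

```java
/** Simplified owner/group/other write check on an ls-style mode string. */
final class PermissionSketch {

    /**
     * Returns true if the user may write according to a mode string such as
     * "drwxr-xr-x". Layout: [type][owner rwx][group rwx][other rwx].
     */
    static boolean canWrite(String user, boolean inGroup, String owner, String perms) {
        int writeIndex;
        if (user.equals(owner)) {
            writeIndex = 2; // owner write bit
        } else if (inGroup) {
            writeIndex = 5; // group write bit
        } else {
            writeIndex = 8; // other write bit
        }
        return perms.charAt(writeIndex) == 'w';
    }

    public static void main(String[] args) {
        // Values taken from the error message; Administrator is assumed
        // not to belong to supergroup
        System.out.println(canWrite("Administrator", false, "hadoop", "drwxr-xr-x"));
        System.out.println(canWrite("hadoop", false, "hadoop", "drwxr-xr-x"));
        // Same directory after a chmod to 777
        System.out.println(canWrite("Administrator", false, "hadoop", "drwxrwxrwx"));
    }
}
```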

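The local user (Administrator) is not the owner of the directory and has no write permission on HDFS. There are two ways to fix this: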

Method 1: Disable HDFS permission checking. Open hdfs-site.xml (vim hdfs-site.xml) and add the configuration.
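The configuration itself is not shown here. In Hadoop 2.x the property that controls permission checking is, as far as I know, `dfs.permissions.enabled`. A minimal fragment, assuming it goes inside the `<configuration>` element of the NameNode's hdfs-site.xml and that HDFS is restarted afterwards, might look like this:

```xml
<!-- Assumed setting: turns off HDFS permission checking on the NameNode -->
<property>
    <name>dfs.permissions.enabled</name>
    <value>false</value>
</property>
```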

Method 2: Change the permissions of the HDFS root path to 777.

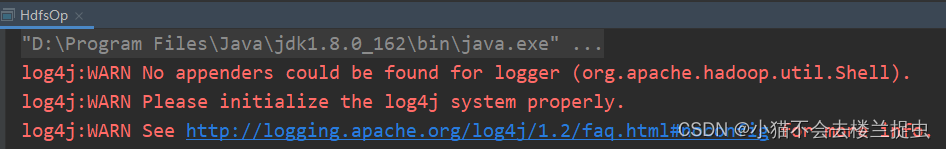

    hadoop fs -chmod -R 777 /

Problem 2: Warning messages in the console

Solution: create a file named log4j.properties under resources and paste the following content into it:

    log4j.rootLogger=info,console
    log4j.appender.console=org.apache.log4j.ConsoleAppender
    log4j.appender.console.target=System.out
    log4j.appender.console.layout=org.apache.log4j.PatternLayout
    log4j.appender.console.layout.ConversionPattern=%d{yy/MM/dd HH:mm:ss} %p %c{2}: %m%n
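To make the conversion pattern less opaque: `%d{...}` is the timestamp, `%p` the level, `%c{2}` the last two parts of the logger name, `%m` the message, and `%n` a line break. The sketch below imitates that layout with plain JDK classes (it does not use log4j; `PatternSketch` and its methods are illustrative names), which is why a logger named `org.apache.hadoop.util.Shell` shows up as `util.Shell` in the console.

```java
import java.text.SimpleDateFormat;
import java.util.Arrays;
import java.util.Date;

/** Plain-JDK imitation of the pattern "%d{yy/MM/dd HH:mm:ss} %p %c{2}: %m%n". */
final class PatternSketch {

    /** Keeps the last n dot-separated parts of a logger name, like %c{n}. */
    static String lastComponents(String loggerName, int n) {
        String[] parts = loggerName.split("\\.");
        int start = Math.max(0, parts.length - n);
        return String.join(".", Arrays.copyOfRange(parts, start, parts.length));
    }

    /** Builds one log line: timestamp, level, shortened logger name, message. */
    static String format(Date when, String level, String loggerName, String message) {
        String timestamp = new SimpleDateFormat("yy/MM/dd HH:mm:ss").format(when);
        return timestamp + " " + level + " " + lastComponents(loggerName, 2)
                + ": " + message + System.lineSeparator();
    }

    public static void main(String[] args) {
        System.out.print(format(new Date(), "ERROR",
                "org.apache.hadoop.util.Shell",
                "Failed to locate the winutils binary in the hadoop binary path"));
    }
}
```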

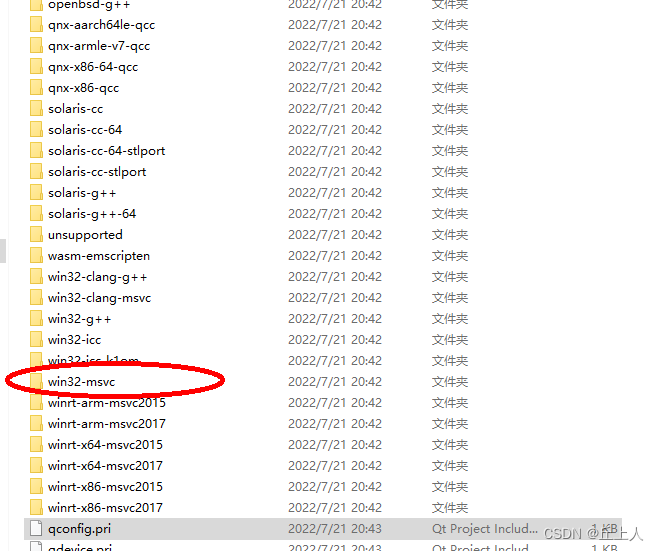

Problem 3: Running the program on Windows fails with: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.

    22/09/24 14:31:04 ERROR util.Shell: Failed to locate the winutils binary in the hadoop binary path
    java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.
        at org.apache.hadoop.util.Shell.getQualifiedBinPath(Shell.java:356)
        at org.apache.hadoop.util.Shell.getWinUtilsPath(Shell.java:371)
        at org.apache.hadoop.util.Shell.<clinit>(Shell.java:364)
        at org.apache.hadoop.util.StringUtils.<clinit>(StringUtils.java:80)
        at org.apache.hadoop.fs.FileSystem$Cache$Key.<init>(FileSystem.java:2807)
        at org.apache.hadoop.fs.FileSystem$Cache$Key.<init>(FileSystem.java:2802)
        at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:2668)
        at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:371)
        at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:170)
        at com.sanqian.hdfs.HdfsOp.main(HdfsOp.java:26)

Solution:

Reference: https://blog.csdn.net/qq_38204087/article/details/107119168

Note: remember to restart the computer afterwards.
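The `null` in `null\bin\winutils.exe` hints at the cause: to my understanding, Hadoop 2.x reads its home directory from the `hadoop.home.dir` system property and then from the `HADOOP_HOME` environment variable, and simply concatenates the result with `bin\winutils.exe`. When neither is set, the home directory is `null`. The sketch below mirrors that lookup with plain JDK code so the setup can be checked before running the program. It is an approximation rather than Hadoop's own code, and `WinutilsSketch` and its methods are illustrative names.

```java
import java.io.File;

/** Checks where a winutils.exe lookup would point, given the current setup. */
final class WinutilsSketch {

    /** Assumed lookup order: system property first, then environment variable. */
    static String resolveHome(String systemProperty, String envVariable) {
        return systemProperty != null ? systemProperty : envVariable;
    }

    /** String concatenation, so a missing home yields a path starting with "null". */
    static String winutilsPath(String home, String separator) {
        return home + separator + "bin" + separator + "winutils.exe";
    }

    public static void main(String[] args) {
        String home = resolveHome(System.getProperty("hadoop.home.dir"),
                System.getenv("HADOOP_HOME"));
        String path = winutilsPath(home, File.separator);
        System.out.println("winutils lookup path: " + path);
        System.out.println("file exists: " + (home != null && new File(path).isFile()));
    }
}
```

If the printed path starts with `null`, neither setting is visible to the JVM. Environment variables are read when a process starts, which would explain why a restart is needed after setting `HADOOP_HOME`.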