31. Website Data Monitoring, Part 2 (Downloading Files with Scrapy)

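Wenzhou data collection

The data collected from this site is a set of PDF files to download: http://wzszjw.wenzhou.gov.cn/col/col1357901/index.html

(The issue here is how to configure Scrapy for file downloads. I had not downloaded files with Scrapy before, so it took a long time to get working. There are many write-ups online, but most of them are incomplete.)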

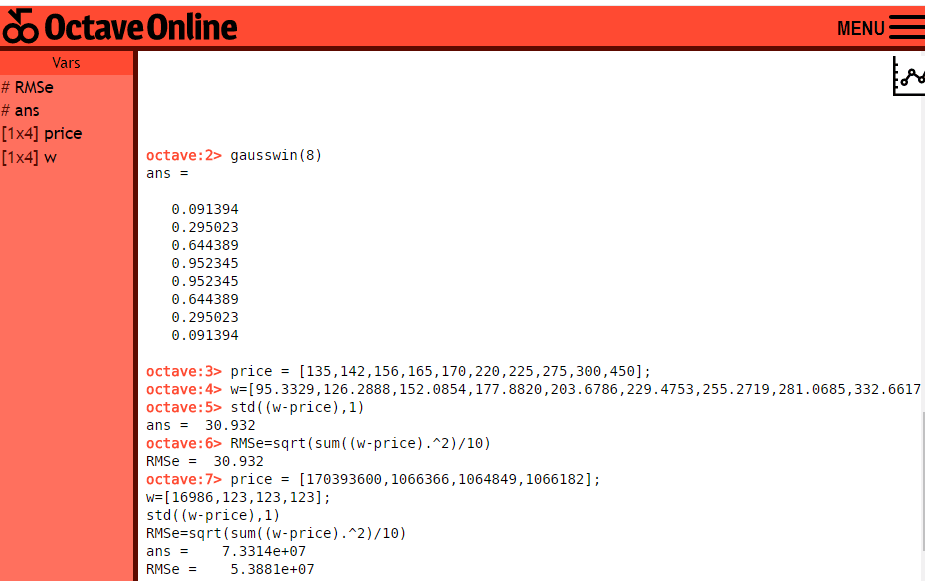

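The key points are these settings:

1. The file-download code in pipelines.py. This part can be reused as-is without modification.

2. The URL of the file to download must be placed in a list: item['file_urls'] = [url] (in wenzhou.py).

3. The main configuration in settings.py: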

    ITEM_PIPELINES = {
        'wenzhou_web.pipelines.WenzhouWebPipeline': 300,
        # File-download pipeline (MyFilePipeline is defined in wenzhou_web/pipelines.py)
        'wenzhou_web.pipelines.MyFilePipeline': 1,
    }

    # Download directory
    FILES_STORE = './download'

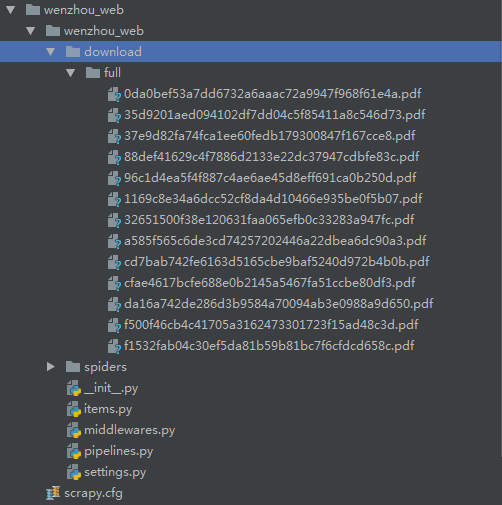

4. The downloaded files are then saved to the download folder, as shown in the screenshot.
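For orientation, here is a rough sketch of where a file ends up inside the download folder when `file_path` is not overridden. It assumes the naming scheme of Scrapy's stock `FilesPipeline` (a `full/` subfolder, then the SHA-1 of the URL, then the URL's extension). `default_file_path` is a hypothetical helper written for this post, not a Scrapy function, and the sample URL is made up.

```python
import hashlib
import os
from urllib.parse import urlparse


def default_file_path(url):
    """Approximate the relative path assumed for the stock FilesPipeline.

    Assumption: files are stored as full/<sha1 of the URL><extension>.
    """
    media_guid = hashlib.sha1(url.encode("utf-8")).hexdigest()
    # Take the extension from the URL path so a query string does not leak in.
    media_ext = os.path.splitext(urlparse(url).path)[1]
    return "full/{}{}".format(media_guid, media_ext)


if __name__ == "__main__":
    sample = "http://wzszjw.wenzhou.gov.cn/example/report.pdf"  # made-up URL
    # FILES_STORE is './download' in this project.
    print(os.path.join("./download", default_file_path(sample)))
```

This is why the saved files have hash-like names rather than the original file names.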

wenzhou.py

    # -*- coding: utf-8 -*-
    import re

    import scrapy

    from wenzhou_web.items import WenzhouWebItem


    class WenzhouSpider(scrapy.Spider):
        name = 'wenzhou'
        base_url = ['http://wzszjw.wenzhou.gov.cn']
        allowed_domains = ['wzszjw.wenzhou.gov.cn']
        start_urls = ['http://wzszjw.wenzhou.gov.cn/col/col1357901/index.html']
        # These per-spider settings take precedence over settings.py
        custom_settings = {
            "DOWNLOAD_DELAY": 0.5,
            "ITEM_PIPELINES": {
                'wenzhou_web.pipelines.MysqlPipeline': 320,
                'wenzhou_web.pipelines.MyFilePipeline': 321,
            },
            "DOWNLOADER_MIDDLEWARES": {
                'wenzhou_web.middlewares.WenzhouWebDownloaderMiddleware': 500,
            },
        }

        def parse(self, response):
            # List page: collect the relative links to the detail pages
            _response = response.text
            tag_list = re.findall("<span>.*?</span><b>·</b><a href=\'(.*?)\'", _response)
            for tag in tag_list:
                url = self.base_url[0] + tag
                # print(url)
                yield scrapy.Request(url=url, callback=self.parse_detail)

        def parse_detail(self, response):
            # _response=response.text.encode('utf8')
            # print(_response)
            _response = response.text
            pdf_url = re.findall(r'<a target="_blank" href="(.*?)"', _response)
            for u in pdf_url:
                # Drop anything that follows the "pdf" extension
                u = u.split('pdf')[0]
                # print(u)
                # Link
                url = "http://wzszjw.wenzhou.gov.cn" + u + "pdf"
                # print(url)
                # Create a fresh item per file so that yielded items are not shared
                item = WenzhouWebItem()
                # The files pipeline expects a list of URLs
                item['file_urls'] = [url]
                yield item
            # # # Title
            # # try:
            # #     title=re.findall('<img src=".*?".*?><span style=".*?">(.*?)</span></a></p><meta name="ContentEnd">',_response)
            # #     print(title[0])
            # # except:
            # #     print('Exception occurred!')
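The link handling in `parse_detail` can be tried outside Scrapy. Below is a minimal sketch using only the standard library. It reuses the spider's regex and base URL, but `extract_pdf_urls` is a hypothetical helper, the HTML snippet is invented for illustration, and, unlike the spider, it skips links that do not contain "pdf" and removes duplicates.

```python
import re
from urllib.parse import urljoin

BASE_URL = "http://wzszjw.wenzhou.gov.cn"
LINK_RE = re.compile(r'<a target="_blank" href="(.*?)"')


def extract_pdf_urls(html, base_url=BASE_URL):
    """Return absolute PDF URLs found in html, in order, without duplicates."""
    urls = []
    for href in LINK_RE.findall(html):
        # Keep everything up to and including the first "pdf".
        head, sep, _ = href.partition("pdf")
        if not sep:
            continue  # not a PDF link (the original spider does not filter these)
        url = urljoin(base_url, head + sep)
        if url not in urls:
            urls.append(url)
    return urls


if __name__ == "__main__":
    # Invented sample markup, not copied from the real site.
    sample_html = (
        '<a target="_blank" href="/module/download/a.pdf?x=1">A</a>'
        '<a target="_blank" href="/about.html">About</a>'
    )
    for url in extract_pdf_urls(sample_html):
        print(url)
```

Each URL returned this way would go into `item['file_urls']` as a one-element list, as in the spider.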

items.py

    # -*- coding: utf-8 -*-

    # Define here the models for your scraped items
    #
    # See documentation in:
    # https://doc.scrapy.org/en/latest/topics/items.html

    import scrapy


    class WenzhouWebItem(scrapy.Item):
        # define the fields for your item here like:
        # name = scrapy.Field()
        # pass

        # Links (list of file URLs for the files pipeline)
        file_urls = scrapy.Field()

middlewares.py

    # -*- coding: utf-8 -*-

    # Define here the models for your spider middleware
    #
    # See documentation in:
    # https://doc.scrapy.org/en/latest/topics/spider-middleware.html

    from scrapy import signals


    class WenzhouWebSpiderMiddleware(object):
        # Not all methods need to be defined. If a method is not defined,
        # scrapy acts as if the spider middleware does not modify the
        # passed objects.

        @classmethod
        def from_crawler(cls, crawler):
            # This method is used by Scrapy to create your spiders.
            s = cls()
            crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
            return s

        def process_spider_input(self, response, spider):
            # Called for each response that goes through the spider
            # middleware and into the spider.

            # Should return None or raise an exception.
            return None

        def process_spider_output(self, response, result, spider):
            # Called with the results returned from the Spider, after
            # it has processed the response.

            # Must return an iterable of Request, dict or Item objects.
            for i in result:
                yield i

        def process_spider_exception(self, response, exception, spider):
            # Called when a spider or process_spider_input() method
            # (from other spider middleware) raises an exception.

            # Should return either None or an iterable of Response, dict
            # or Item objects.
            pass

        def process_start_requests(self, start_requests, spider):
            # Called with the start requests of the spider, and works
            # similarly to the process_spider_output() method, except
            # that it doesn’t have a response associated.

            # Must return only requests (not items).
            for r in start_requests:
                yield r

        def spider_opened(self, spider):
            spider.logger.info('Spider opened: %s' % spider.name)


    class WenzhouWebDownloaderMiddleware(object):
        # Not all methods need to be defined. If a method is not defined,
        # scrapy acts as if the downloader middleware does not modify the
        # passed objects.

        @classmethod
        def from_crawler(cls, crawler):
            # This method is used by Scrapy to create your spiders.
            s = cls()
            crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
            return s

        def process_request(self, request, spider):
            # Called for each request that goes through the downloader
            # middleware.

            # Must either:
            # - return None: continue processing this request
            # - or return a Response object
            # - or return a Request object
            # - or raise IgnoreRequest: process_exception() methods of
            #   installed downloader middleware will be called
            return None

        def process_response(self, request, response, spider):
            # Called with the response returned from the downloader.

            # Must either;
            # - return a Response object
            # - return a Request object
            # - or raise IgnoreRequest
            return response

        def process_exception(self, request, exception, spider):
            # Called when a download handler or a process_request()
            # (from other downloader middleware) raises an exception.

            # Must either:
            # - return None: continue processing this exception
            # - return a Response object: stops process_exception() chain
            # - return a Request object: stops process_exception() chain
            pass

        def spider_opened(self, spider):
            spider.logger.info('Spider opened: %s' % spider.name)

pipelines.py

    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html

    from urllib.parse import urlparse

    import pymysql
    import scrapy
    from scrapy.exceptions import DropItem
    from scrapy.pipelines.files import FilesPipeline


    class WenzhouWebPipeline(object):
        def process_item(self, item, spider):
            return item


    # Save data to MySQL
    class MysqlPipeline(object):
        def open_spider(self, spider):
            # Read settings from the running spider; the old
            # "from scrapy.conf import settings" import is deprecated
            # and unavailable in newer Scrapy releases.
            settings = spider.settings
            self.host = settings.get('MYSQL_HOST')
            self.port = settings.get('MYSQL_PORT')
            self.user = settings.get('MYSQL_USER')
            self.password = settings.get('MYSQL_PASSWORD')
            self.db = settings.get('MYSQL_DB')
            self.table = settings.get('TABLE')
            self.client = pymysql.connect(host=self.host, user=self.user, password=self.password,
                                          port=self.port, db=self.db, charset='utf8')

        def process_item(self, item, spider):
            item_dict = dict(item)
            cursor = self.client.cursor()
            # One %s placeholder per column
            values = ','.join(['%s'] * len(item_dict))
            keys = ','.join(item_dict.keys())
            sql = 'INSERT INTO {table}({keys}) VALUES ({values})'.format(table=self.table, keys=keys, values=values)
            try:
                # First argument is the SQL statement, second is the tuple of values
                if cursor.execute(sql, tuple(item_dict.values())):
                    print('Row inserted successfully!')
                    self.client.commit()
            except Exception as e:
                print(e)
                print('Row already exists!')
                self.client.rollback()
            return item

        def close_spider(self, spider):
            self.client.close()


    # File-download pipeline
    class MyFilePipeline(FilesPipeline):
        def get_media_requests(self, item, info):
            # Schedule one download request per URL in the item
            for file_url in item['file_urls']:
                yield scrapy.Request(file_url)

        def item_completed(self, results, item, info):
            # Keep the storage paths of the downloads that succeeded
            file_paths = [x['path'] for ok, x in results if ok]
            if not file_paths:
                raise DropItem("Item contains no file")
            # Note: this replaces the original URLs with the stored paths
            item['file_urls'] = file_paths
            return item
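The `results` argument of `item_completed` is easier to follow with a concrete value. The sketch below assumes the usual shape, a list of `(success, info)` pairs where `info` is a dict containing `path` on success and a failure object otherwise. `split_results` is a hypothetical helper, the sample data is invented, and a plain `Exception` stands in for the failure object Scrapy would pass.

```python
def split_results(results):
    """Separate stored file paths from failures in FilesPipeline-style results."""
    paths = [info["path"] for ok, info in results if ok]
    failures = [info for ok, info in results if not ok]
    return paths, failures


if __name__ == "__main__":
    # Invented sample data in the assumed (success, info) shape.
    fake_results = [
        (True, {"url": "http://example.com/a.pdf", "path": "full/aaa.pdf"}),
        (False, Exception("download failed")),
    ]
    paths, failures = split_results(fake_results)
    print("stored:", paths)
    print("failed:", len(failures))
```

With this shape in mind, the list comprehension in `MyFilePipeline.item_completed` simply keeps the `path` of every successful download and drops the item when none succeeded.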

settings.py

    # -*- coding: utf-8 -*-

    # Scrapy settings for wenzhou_web project
    #
    # For simplicity, this file contains only settings considered important or
    # commonly used. You can find more settings consulting the documentation:
    #
    #     https://doc.scrapy.org/en/latest/topics/settings.html
    #     https://doc.scrapy.org/en/latest/topics/downloader-middleware.html
    #     https://doc.scrapy.org/en/latest/topics/spider-middleware.html

    BOT_NAME = 'wenzhou_web'

    SPIDER_MODULES = ['wenzhou_web.spiders']
    NEWSPIDER_MODULE = 'wenzhou_web.spiders'

    # MySQL connection parameters
    MYSQL_HOST = "192.168.113.129"
    MYSQL_PORT = 3306
    MYSQL_USER = "root"
    MYSQL_PASSWORD = "123456"
    MYSQL_DB = 'web_datas'
    TABLE = "web_wenzhou"

    # Crawl responsibly by identifying yourself (and your website) on the user-agent
    #USER_AGENT = 'wenzhou_web (+http://www.yourdomain.com)'

    # Obey robots.txt rules
    ROBOTSTXT_OBEY = False

    # Configure maximum concurrent requests performed by Scrapy (default: 16)
    #CONCURRENT_REQUESTS = 32

    # Configure a delay for requests for the same website (default: 0)
    # See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay
    # See also autothrottle settings and docs
    #DOWNLOAD_DELAY = 3
    # The download delay setting will honor only one of:
    #CONCURRENT_REQUESTS_PER_DOMAIN = 16
    #CONCURRENT_REQUESTS_PER_IP = 16

    # Disable cookies (enabled by default)
    #COOKIES_ENABLED = False

    # Disable Telnet Console (enabled by default)
    #TELNETCONSOLE_ENABLED = False

    # Override the default request headers:
    #DEFAULT_REQUEST_HEADERS = {
    #   'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    #   'Accept-Language': 'en',
    #}

    # Enable or disable spider middlewares
    # See https://doc.scrapy.org/en/latest/topics/spider-middleware.html
    #SPIDER_MIDDLEWARES = {
    #    'wenzhou_web.middlewares.WenzhouWebSpiderMiddleware': 543,
    #}

    # Enable or disable downloader middlewares
    # See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html
    DOWNLOADER_MIDDLEWARES = {
        'wenzhou_web.middlewares.WenzhouWebDownloaderMiddleware': 500,
    }

    # Enable or disable extensions
    # See https://doc.scrapy.org/en/latest/topics/extensions.html
    #EXTENSIONS = {
    #    'scrapy.extensions.telnet.TelnetConsole': None,
    #}

    # Configure item pipelines
    # See https://doc.scrapy.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        'wenzhou_web.pipelines.WenzhouWebPipeline': 300,
        # File-download pipeline (MyFilePipeline is defined in wenzhou_web/pipelines.py)
        'wenzhou_web.pipelines.MyFilePipeline': 1,
    }
    # Download directory
    FILES_STORE = './download'

    # Enable and configure the AutoThrottle extension (disabled by default)
    # See https://doc.scrapy.org/en/latest/topics/autothrottle.html
    #AUTOTHROTTLE_ENABLED = True
    # The initial download delay
    #AUTOTHROTTLE_START_DELAY = 5
    # The maximum download delay to be set in case of high latencies
    #AUTOTHROTTLE_MAX_DELAY = 60
    # The average number of requests Scrapy should be sending in parallel to
    # each remote server
    #AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
    # Enable showing throttling stats for every response received:
    #AUTOTHROTTLE_DEBUG = False

    # Enable and configure HTTP caching (disabled by default)
    # See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
    #HTTPCACHE_ENABLED = True
    #HTTPCACHE_EXPIRATION_SECS = 0
    #HTTPCACHE_DIR = 'httpcache'
    #HTTPCACHE_IGNORE_HTTP_CODES = []
    #HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'
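The numbers in `ITEM_PIPELINES` decide the order in which pipelines see each item. As far as I understand, lower numbers run first and a value of `None` disables an entry. The sketch below only illustrates that ordering rule in plain Python; `pipeline_order` is a hypothetical helper, not part of Scrapy.

```python
def pipeline_order(item_pipelines):
    """Return pipeline paths sorted by their order value, lowest first.

    Entries whose value is None are treated as disabled and left out.
    """
    enabled = {path: order for path, order in item_pipelines.items() if order is not None}
    return [path for path, _ in sorted(enabled.items(), key=lambda kv: kv[1])]


if __name__ == "__main__":
    # The ITEM_PIPELINES dict from the spider's custom_settings.
    spider_pipelines = {
        'wenzhou_web.pipelines.MysqlPipeline': 320,
        'wenzhou_web.pipelines.MyFilePipeline': 321,
    }
    for path in pipeline_order(spider_pipelines):
        print(path)
```

Since the spider's `custom_settings` take precedence over settings.py, this ordering means `MysqlPipeline` handles an item before `MyFilePipeline` has downloaded anything, so at that point `file_urls` still holds the URLs rather than the stored paths.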

posted on 2018-09-25 16:50 by 五杀摇滚小拉夫
