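Preface

The symptoms were as follows.

When the project first starts, everything works normally. After it has been running for a while, say four or five days, problems suddenly appear: some services can still be reached, while others throw exceptions.

The exception message is "Too many open files".

The underlying cause: before the failure the Redis cluster had no password. One day a password was added to the cluster, and the problem above began to appear.

Of course, this was only inferred afterwards from the findings described below.

The exception output is as follows.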

2023-06-16 11:03:00.354 [http-nio-8291-Acceptor-0] ERROR org.apache.tomcat.util.net.Acceptor - Socket accept failed

java.io.IOException: Too many open files
    at sun.nio.ch.ServerSocketChannelImpl.accept0(Native Method)
    at sun.nio.ch.ServerSocketChannelImpl.accept(ServerSocketChannelImpl.java:422)
    at sun.nio.ch.ServerSocketChannelImpl.accept(ServerSocketChannelImpl.java:250)
    at org.apache.tomcat.util.net.NioEndpoint.serverSocketAccept(NioEndpoint.java:450)
    at org.apache.tomcat.util.net.NioEndpoint.serverSocketAccept(NioEndpoint.java:73)
    at org.apache.tomcat.util.net.Acceptor.run(Acceptor.java:95)
    at java.lang.Thread.run(Thread.java:748)

When "Too many open files" appears, the usual first step is to check whether the project really needs that many open files, network connections, sockets, pipes and so on.

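If the project really does need that many file descriptors, raising the Linux open files limit is enough.

If instead a large number of the descriptors are abnormal, the underlying problem has to be tracked down.

Our case is the latter: a large number of abnormal file descriptors.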
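To tell the two cases apart, it can help to watch how close the JVM is to its descriptor limit over time. The following is a minimal sketch, assuming a HotSpot-style JVM on Linux or another Unix where the platform bean implements com.sun.management.UnixOperatingSystemMXBean. The class name FdUsage is hypothetical and not part of the original project.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.OperatingSystemMXBean;
import com.sun.management.UnixOperatingSystemMXBean;

final class FdUsage {
    /** Returns {open, max} descriptor counts for this JVM, or {-1, -1} if the platform bean is unavailable. */
    static long[] snapshot() {
        OperatingSystemMXBean os = ManagementFactory.getOperatingSystemMXBean();
        if (os instanceof UnixOperatingSystemMXBean) {
            UnixOperatingSystemMXBean unix = (UnixOperatingSystemMXBean) os;
            return new long[] {
                unix.getOpenFileDescriptorCount(),
                unix.getMaxFileDescriptorCount()
            };
        }
        // Assumption: on non-Unix platforms we simply report "unknown".
        return new long[] { -1L, -1L };
    }

    public static void main(String[] args) {
        long[] s = snapshot();
        System.out.printf("open=%d max=%d%n", s[0], s[1]);
    }
}
```

A count that keeps climbing while the workload stays flat points to a leak rather than to a limit that is simply too low.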

Debugging the problem

First, look at the scene.

Listing all fds of the current process shows a large number of socket file descriptors.

root@ubuntu:~# ll /proc/17843/fd | grep socket

lrwx------ 1 root root 64 Jun 21 09:13 14 -> socket:[1092572]

lrwx------ 1 root root 64 Jun 21 09:13 158 -> socket:[1091360]

lrwx------ 1 root root 64 Jun 21 09:13 183 -> socket:[1091361]

lrwx------ 1 root root 64 Jun 21 09:13 208 -> socket:[1091362]

lrwx------ 1 root root 64 Jun 21 09:13 209 -> socket:[1091363]

lrwx------ 1 root root 64 Jun 21 09:13 210 -> socket:[1091364]

lrwx------ 1 root root 64 Jun 21 09:13 211 -> socket:[1091365]

lrwx------ 1 root root 64 Jun 21 09:13 212 -> socket:[1098021]

lrwx------ 1 root root 64 Jun 21 09:13 213 -> socket:[1091372]

lrwx------ 1 root root 64 Jun 21 09:13 214 -> socket:[1091373]

lrwx------ 1 root root 64 Jun 21 09:13 215 -> socket:[1091374]

lrwx------ 1 root root 64 Jun 21 09:13 216 -> socket:[1093391]

lrwx------ 1 root root 64 Jun 21 09:13 217 -> socket:[1093392]

lrwx------ 1 root root 64 Jun 21 09:13 218 -> socket:[1094944]

lrwx------ 1 root root 64 Jun 21 09:13 219 -> socket:[1091375]

lrwx------ 1 root root 64 Jun 21 09:13 220 -> socket:[1091376]

lrwx------ 1 root root 64 Jun 21 09:13 221 -> socket:[1091377]

lrwx------ 1 root root 64 Jun 21 09:13 222 -> socket:[1091378]

lrwx------ 1 root root 64 Jun 21 09:13 223 -> socket:[1091379]

lrwx------ 1 root root 64 Jun 21 09:13 224 -> socket:[1091380]

lrwx------ 1 root root 64 Jun 21 09:13 225 -> socket:[1091389]

lrwx------ 1 root root 64 Jun 21 09:13 226 -> socket:[1091390]

lrwx------ 1 root root 64 Jun 21 09:13 227 -> socket:[1091391]

lrwx------ 1 root root 64 Jun 21 09:13 228 -> socket:[1091392]

lrwx------ 1 root root 64 Jun 21 09:13 229 -> socket:[1091393]

lrwx------ 1 root root 64 Jun 21 09:13 230 -> socket:[1091394]

lrwx------ 1 root root 64 Jun 21 09:13 231 -> socket:[1091401]

lrwx------ 1 root root 64 Jun 21 09:13 232 -> socket:[1094176]

lrwx------ 1 root root 64 Jun 21 09:13 233 -> socket:[1094181]

lrwx------ 1 root root 64 Jun 21 09:13 234 -> socket:[1094238]

lrwx------ 1 root root 64 Jun 21 09:13 235 -> socket:[1094247]

lrwx------ 1 root root 64 Jun 21 09:13 236 -> socket:[1094248]

lrwx------ 1 root root 64 Jun 21 09:13 237 -> socket:[1095491]

lrwx------ 1 root root 64 Jun 21 09:13 238 -> socket:[1095494]

lrwx------ 1 root root 64 Jun 21 09:13 239 -> socket:[1095495]

lrwx------ 1 root root 64 Jun 21 09:13 240 -> socket:[1095496]

lrwx------ 1 root root 64 Jun 21 09:13 241 -> socket:[1095497]

lrwx------ 1 root root 64 Jun 21 09:13 242 -> socket:[1095498]

lrwx------ 1 root root 64 Jun 21 09:13 243 -> socket:[1091535]

lrwx------ 1 root root 64 Jun 21 09:13 244 -> socket:[1091536]

lrwx------ 1 root root 64 Jun 21 09:13 245 -> socket:[1091537]

lrwx------ 1 root root 64 Jun 21 09:13 246 -> socket:[1091538]

lrwx------ 1 root root 64 Jun 21 09:13 247 -> socket:[1091539]

lrwx------ 1 root root 64 Jun 21 09:13 248 -> socket:[1091540]

lrwx------ 1 root root 64 Jun 21 09:13 249 -> socket:[1098022]

lrwx------ 1 root root 64 Jun 21 09:13 25 -> socket:[1092819]

lrwx------ 1 root root 64 Jun 21 09:13 250 -> socket:[1098023]

lrwx------ 1 root root 64 Jun 21 09:13 251 -> socket:[1098024]

lrwx------ 1 root root 64 Jun 21 09:13 252 -> socket:[1098025]

lrwx------ 1 root root 64 Jun 21 09:13 253 -> socket:[1098026]

lrwx------ 1 root root 64 Jun 21 09:13 254 -> socket:[1110159]

lrwx------ 1 root root 64 Jun 21 09:13 255 -> socket:[1098782]

lrwx------ 1 root root 64 Jun 21 09:13 256 -> socket:[1098783]

lrwx------ 1 root root 64 Jun 21 09:13 257 -> socket:[1095991]

lrwx------ 1 root root 64 Jun 21 09:13 258 -> socket:[1095992]

lrwx------ 1 root root 64 Jun 21 09:13 259 -> socket:[1095993]

lrwx------ 1 root root 64 Jun 21 09:13 26 -> socket:[1092821]

lrwx------ 1 root root 64 Jun 21 09:13 260 -> socket:[1095994]

lrwx------ 1 root root 64 Jun 21 09:26 261 -> socket:[1101540]

lrwx------ 1 root root 64 Jun 21 09:26 262 -> socket:[1101541]

lrwx------ 1 root root 64 Jun 21 09:26 263 -> socket:[1101542]

lrwx------ 1 root root 64 Jun 21 09:26 264 -> socket:[1101543]

lrwx------ 1 root root 64 Jun 21 09:26 265 -> socket:[1101544]

lrwx------ 1 root root 64 Jun 21 09:26 266 -> socket:[1101545]

lrwx------ 1 root root 64 Jun 21 09:26 267 -> socket:[1104166]

lrwx------ 1 root root 64 Jun 21 09:26 268 -> socket:[1104167]

lrwx------ 1 root root 64 Jun 21 09:26 269 -> socket:[1104168]

lrwx------ 1 root root 64 Jun 21 09:26 270 -> socket:[1104169]

lrwx------ 1 root root 64 Jun 21 09:26 271 -> socket:[1104170]

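lrwx------ 1 root root 64 Jun 21 09:26 272 -> socket:[1104171]

Next, use ss to inspect the sockets of the process. It shows the concrete host and port of each connection, which is what gives a rough picture of what the file descriptors are.

What becomes clear is that a large number of sockets connected to the Redis cluster were not closed properly. Note that ss prints the Redis ports 7001 through 7006 by their /etc/services names: afs3-callback, afs3-prserver, afs3-vlserver, afs3-kaserver, afs3-volser and afs3-errors.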

root@ubuntu:~/docker/ay-resource-portals# ss -tiap | grep 17843

ESTAB 0 0 192.168.220.140:5005 192.168.220.1:62199 users:(("java",pid=17843,fd=5))

LISTEN 0 100 :::8291 :::* users:(("java",pid=17843,fd=35))

ESTAB 0 0 ::ffff:192.168.220.140:52566 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=261))

ESTAB 0 0 ::ffff:192.168.220.140:58626 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=239))

ESTAB 0 0 ::ffff:192.168.220.140:58726 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=257))

ESTAB 0 0 ::ffff:192.168.220.140:60864 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=235))

ESTAB 0 0 ::ffff:192.168.220.140:56576 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=248))

ESTAB 0 0 ::ffff:192.168.220.140:56392 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=211))

ESTAB 0 0 ::ffff:192.168.220.140:58658 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=245))

ESTAB 0 0 ::ffff:192.168.220.140:56784 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=272))

ESTAB 0 0 ::ffff:192.168.220.140:32840 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=265))

ESTAB 0 0 ::ffff:192.168.220.140:52478 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=255))

ESTAB 0 0 ::ffff:192.168.220.140:40552 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=226))

ESTAB 0 0 ::ffff:192.168.220.140:56614 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=253))

ESTAB 0 0 ::ffff:192.168.220.140:40590 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=232))

ESTAB 0 0 ::ffff:192.168.220.140:46740 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=228))

ESTAB 0 0 ::ffff:192.168.220.140:52340 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=231))

ESTAB 0 0 ::ffff:192.168.220.140:52312 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=225))

ESTAB 0 0 ::ffff:192.168.220.140:56522 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=236))

ESTAB 0 0 ::ffff:192.168.220.140:56734 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=266))

ESTAB 0 0 ::ffff:192.168.220.140:35912 ::ffff:192.168.220.133:27017 users:(("java",pid=17843,fd=30))

ESTAB 0 0 ::ffff:192.168.220.140:60768 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=217))

ESTAB 0 0 ::ffff:192.168.220.140:46834 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=246))

ESTAB 0 0 ::ffff:192.168.220.140:58534 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=221))

ESTAB 0 0 ::ffff:192.168.220.140:35938 ::ffff:192.168.220.133:27017 users:(("java",pid=17843,fd=49))

ESTAB 0 0 ::ffff:192.168.220.140:40688 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=249))

ESTAB 0 0 ::ffff:192.168.220.140:46906 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=258))

ESTAB 0 0 ::ffff:192.168.220.140:40806 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=262))

ESTAB 0 0 ::ffff:192.168.220.140:56428 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=218))

ESTAB 0 0 ::ffff:192.168.220.140:58468 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=208))

ESTAB 0 0 ::ffff:192.168.220.140:33660 ::ffff:10.30.2.25:mysql users:(("java",pid=17843,fd=33))

ESTAB 0 0 ::ffff:192.168.220.140:52616 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=267))

ESTAB 0 0 ::ffff:192.168.220.140:36766 ::ffff:10.60.50.16:8848 users:(("java",pid=17843,fd=26))

ESTAB 0 0 ::ffff:192.168.220.140:56454 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=224))

ESTAB 0 0 ::ffff:192.168.220.140:37262 ::ffff:10.60.50.16:8848 users:(("java",pid=17843,fd=254))

ESTAB 0 0 ::ffff:192.168.220.140:40718 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=256))

ESTAB 0 0 ::ffff:192.168.220.140:58516 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=215))

ESTAB 0 0 ::ffff:192.168.220.140:60822 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=229))

ESTAB 0 0 ::ffff:192.168.220.140:60918 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=247))

ESTAB 0 0 ::ffff:192.168.220.140:46992 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=264))

ESTAB 0 0 ::ffff:192.168.220.140:46782 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=234))

ESTAB 0 0 ::ffff:192.168.220.140:60988 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=259))

ESTAB 0 0 ::ffff:192.168.220.140:58818 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=263))

ESTAB 0 0 ::ffff:192.168.220.140:58698 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=250))

ESTAB 0 0 ::ffff:192.168.220.140:60734 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=210))

ESTAB 0 0 ::ffff:192.168.220.140:52258 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=213))

ESTAB 0 0 ::ffff:192.168.220.140:40856 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=268))

ESTAB 0 0 ::ffff:192.168.220.140:46808 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=240))

ESTAB 0 0 ::ffff:192.168.220.140:58602 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=233))

ESTAB 0 0 ::ffff:192.168.220.140:52380 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=237))

ESTAB 0 0 ::ffff:192.168.220.140:52230 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=158))

ESTAB 0 0 ::ffff:192.168.220.140:46714 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=222))

ESTAB 0 0 ::ffff:192.168.220.140:60796 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=223))

ESTAB 0 0 ::ffff:192.168.220.140:60960 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=252))

ESTAB 0 0 ::ffff:192.168.220.140:52448 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=212))

ESTAB 0 0 ::ffff:192.168.220.140:47046 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=270))

ESTAB 0 0 ::ffff:192.168.220.140:32892 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=271))

ESTAB 0 0 ::ffff:192.168.220.140:40526 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=220))

ESTAB 0 0 ::ffff:192.168.220.140:46874 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=251))

ESTAB 0 0 ::ffff:192.168.220.140:46690 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=216))

ESTAB 0 0 ::ffff:192.168.220.140:46648 ::ffff:192.168.220.133:afs3-kaserver users:(("java",pid=17843,fd=209))

ESTAB 0 0 ::ffff:192.168.220.140:40622 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=238))

ESTAB 0 0 ::ffff:192.168.220.140:56480 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=230))

ESTAB 0 0 ::ffff:192.168.220.140:58864 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=269))

ESTAB 0 0 ::ffff:192.168.220.140:40498 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=214))

ESTAB 0 0 ::ffff:192.168.220.140:56546 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=242))

ESTAB 0 0 ::ffff:192.168.220.140:60892 ::ffff:192.168.220.133:afs3-volser users:(("java",pid=17843,fd=241))

ESTAB 0 0 ::ffff:192.168.220.140:40650 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=244))

ESTAB 0 0 ::ffff:192.168.220.140:56646 ::ffff:192.168.220.133:afs3-errors users:(("java",pid=17843,fd=260))

ESTAB 0 0 ::ffff:192.168.220.140:58560 ::ffff:192.168.220.133:afs3-vlserver users:(("java",pid=17843,fd=227))

ESTAB 0 0 ::ffff:192.168.220.140:52288 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=219))

ESTAB 0 0 ::ffff:192.168.220.140:37272 ::ffff:10.60.50.16:8848 users:(("java",pid=17843,fd=45))

ESTAB 0 0 ::ffff:192.168.220.140:40466 ::ffff:192.168.220.133:afs3-prserver users:(("java",pid=17843,fd=183))

ESTAB 0 0 ::ffff:192.168.220.140:52410 ::ffff:192.168.220.133:afs3-callback users:(("java",pid=17843,fd=243))

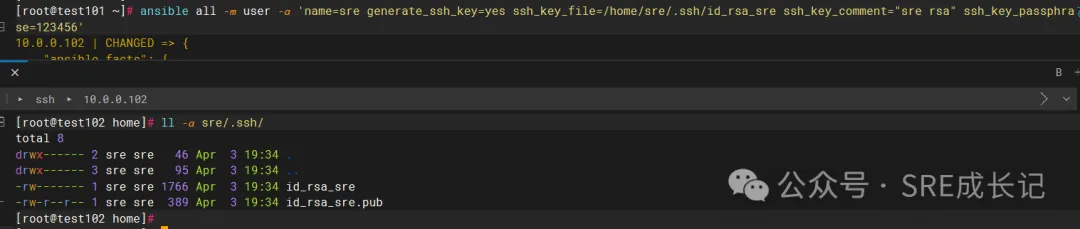

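ESTAB 0 0 ::ffff:192.168.220.140:8291 ::ffff:192.168.220.140:40466 users:(("java",pid=17843,fd=46))

Reconstructing the scene took quite some time. With the cluster set up, the password configured and the project started, the expected mass of file descriptors did not appear.

Debugging the project as it runs in production then showed that these connections to Redis were being created by actuator health-check requests.

So we issue such a request ourselves and watch the fds. The Redis cluster has six nodes in total. After each request, six more file descriptors appear under /proc/$pid/fd, and they are not released for a long time.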

curl http://192.168.220.140:8291/actuator/health

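At this point the problem is essentially reproduced. In production, spring-boot-admin periodically sends /actuator/health to every microservice node to check its status, so the number of file descriptors keeps growing over time.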
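A rough back-of-the-envelope calculation shows why the failure only surfaces after days. The sketch below assumes a constant leak of six descriptors per health check, as observed above. The descriptor limit (65535), the baseline of 200 descriptors and the 30-second polling interval are illustrative assumptions, not values taken from the production system, and the class name is hypothetical.

```java
final class FdExhaustionEstimate {
    /**
     * Seconds until the descriptor limit is reached, assuming every health check
     * leaks the same number of descriptors and nothing else changes.
     */
    static long secondsUntilExhaustion(long fdLimit, long baselineFds,
                                       int leakedPerCheck, long checkIntervalSeconds) {
        if (leakedPerCheck <= 0 || checkIntervalSeconds <= 0) {
            throw new IllegalArgumentException("leak and interval must be positive");
        }
        long headroom = Math.max(0L, fdLimit - baselineFds);
        long checksUntilFull = headroom / leakedPerCheck;
        return checksUntilFull * checkIntervalSeconds;
    }

    public static void main(String[] args) {
        // All four inputs are assumptions chosen for illustration.
        long seconds = secondsUntilExhaustion(65_535L, 200L, 6, 30L);
        System.out.printf("about %.1f days%n", seconds / 86_400.0);
    }
}
```

With these assumed numbers the estimate is 326,670 seconds, a little under four days, which is the same order of magnitude as the four to five days seen in practice.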

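The complete request context is as follows. The current request is http://192.168.220.140:8291/actuator/health

It first reaches HealthEndpointWebExtension, which handles the request.

Then CompositeHealthIndicator checks each kind of indicator. In our case the problem occurs while checking Redis.

Next comes obtaining a Redis connection and checking its health.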

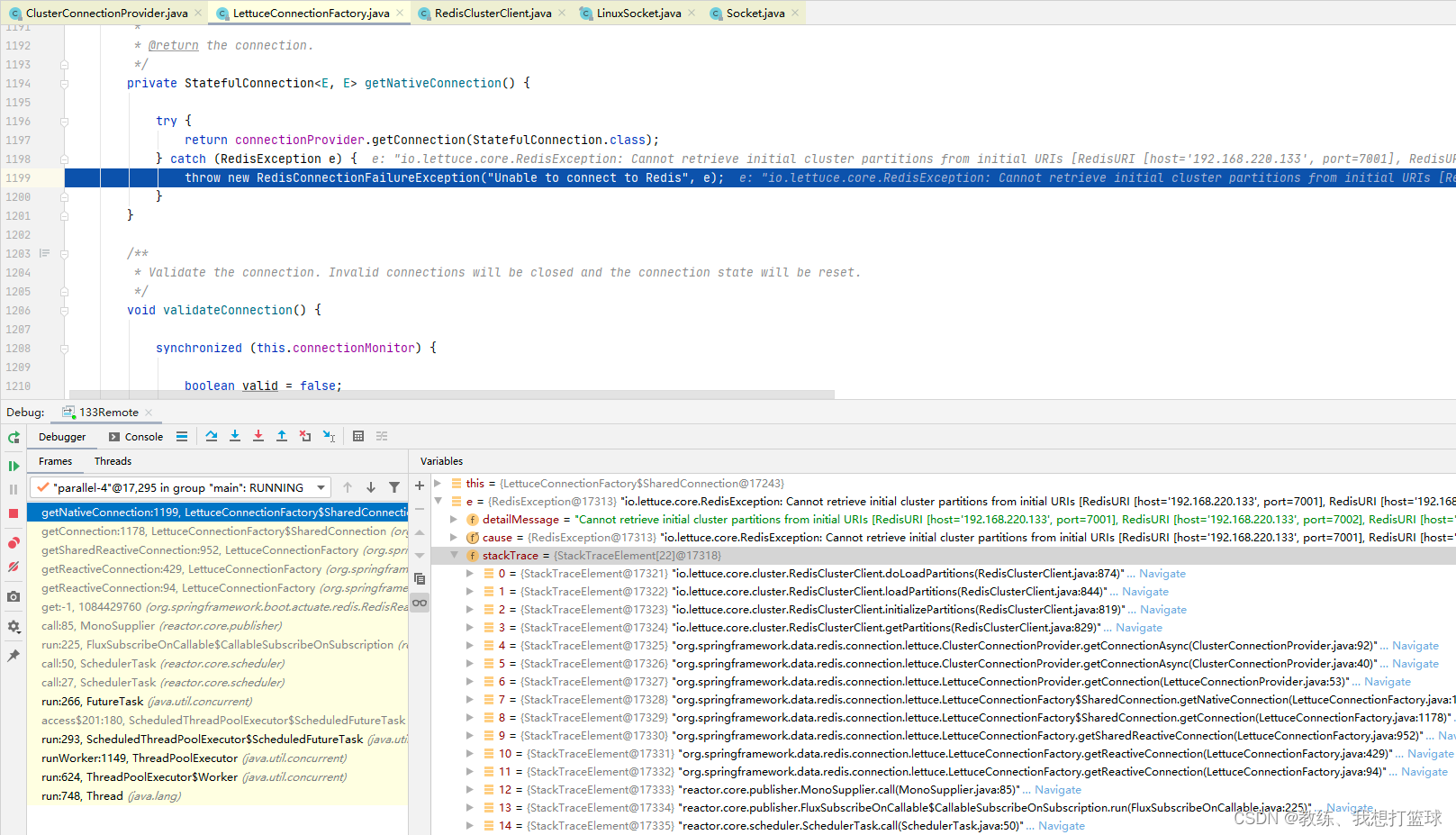

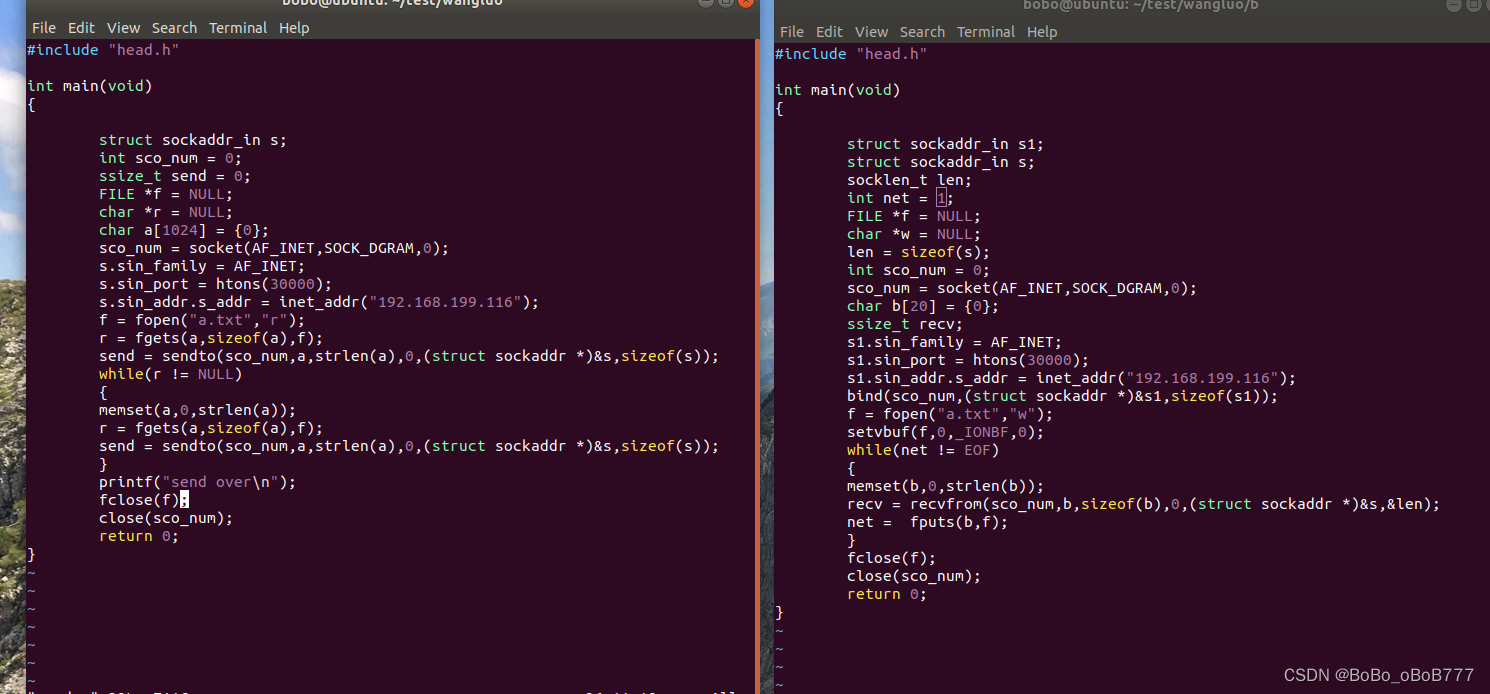

Where the connection to Redis is actually created

The core reason every request has to create connections to Redis is here.

getNativeConnection throws an exception, so this.connection stays null.

As a result, every call to getNativeConnection creates new connections to Redis.

It then sends two commands over them, cluster nodes and client list, and both fail with "io.lettuce.core.RedisException: Cannot retrieve initial cluster partitions from initial URIs [RedisURI [host='192.168.220.133', port=7001], RedisURI [host='192.168.220.133', port=7002], RedisURI [host='192.168.220.133', port=7003], RedisURI [host='192.168.220.133', port=7004], RedisURI [host='192.168.220.133', port=7005], RedisURI [host='192.168.220.133', port=7006]]"

The exception itself arises here: the error shows up while fetching cluster nodes and client list, and the corresponding exception is then thrown.
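The pattern described above can be sketched in isolation. The code below is a simplified simulation, not the actual spring-data-redis or Lettuce code: FakeSocket, Holder and simulate are hypothetical names, and the sockets are plain counters rather than real connections. It shows how a lazily cached connection that never gets assigned leaks six sockets per call, and how closing them on the failure path would avoid that.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;

final class LazyConnectionLeakSketch {
    /** Number of simulated sockets currently open. */
    static final AtomicInteger OPEN_SOCKETS = new AtomicInteger();

    /** Stand-in for one TCP connection to a cluster node (hypothetical type). */
    static final class FakeSocket implements AutoCloseable {
        private boolean closed;
        FakeSocket() { OPEN_SOCKETS.incrementAndGet(); }
        @Override public void close() {
            if (!closed) { closed = true; OPEN_SOCKETS.decrementAndGet(); }
        }
    }

    /** Stand-in for the object that lazily caches its native connection. */
    static final class Holder {
        private final int nodes;
        private final boolean authOk;
        private final boolean closeOnFailure;
        private List<FakeSocket> connection; // stays null while every attempt fails

        Holder(int nodes, boolean authOk, boolean closeOnFailure) {
            this.nodes = nodes;
            this.authOk = authOk;
            this.closeOnFailure = closeOnFailure;
        }

        List<FakeSocket> getNativeConnection() {
            if (connection != null) {
                return connection; // cached from an earlier successful call
            }
            List<FakeSocket> sockets = new ArrayList<>();
            try {
                for (int i = 0; i < nodes; i++) {
                    sockets.add(new FakeSocket()); // one socket per cluster node
                }
                if (!authOk) {
                    // Simulates the topology query failing, e.g. because of a missing password.
                    throw new IllegalStateException("Cannot retrieve initial cluster partitions");
                }
                connection = sockets;
                return connection;
            } catch (RuntimeException e) {
                if (closeOnFailure) {
                    sockets.forEach(FakeSocket::close); // the cleanup the leaky path lacks
                }
                throw e;
            }
        }
    }

    /** Runs the given number of simulated health checks and returns how many sockets remain open. */
    static int simulate(int checks, boolean authOk, boolean closeOnFailure) {
        OPEN_SOCKETS.set(0);
        Holder holder = new Holder(6, authOk, closeOnFailure);
        for (int i = 0; i < checks; i++) {
            try {
                holder.getNativeConnection();
            } catch (IllegalStateException expected) {
                // The health check would report DOWN here and move on.
            }
        }
        return OPEN_SOCKETS.get();
    }
}
```

In this simulation, three failing checks without cleanup leave 18 sockets open, while the same checks with cleanup leave none.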

For reference, these two commands normally return the following data.

127.0.0.1:7001> cluster nodes

5cc767285fe4c522e7851d7b2610992160b72e23 192.168.220.133:7001@17001 myself,master - 0 1687364372000 1 connected 0-5460

30f24b7878a573dd5696394de7ea20ea4deb4591 192.168.220.133:7004@17004 slave cfe925b9621300694e1019139a2daef55406eb44 0 1687364373033 4 connected

cfe925b9621300694e1019139a2daef55406eb44 192.168.220.133:7003@17003 master - 0 1687364373000 3 connected 10923-16383

96d9911ec437b7fec1100fe901aa6c19fe38989e 192.168.220.133:7006@17006 slave 15dd54b917bd8c4483616e7029f0c7b3c071078b 0 1687364373000 6 connected

15dd54b917bd8c4483616e7029f0c7b3c071078b 192.168.220.133:7002@17002 master - 0 1687364374043 2 connected 5461-10922

373b275b28079e4ef5bcd581e9877edb8496d712 192.168.220.133:7005@17005 slave 5cc767285fe4c522e7851d7b2610992160b72e23 0 1687364375057 5 connected

127.0.0.1:7001> client list

id=15597 addr=192.168.220.140:52478 fd=20 name= age=801 idle=789 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=16247 addr=192.168.220.140:52616 fd=33 name= age=147 idle=147 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=16214 addr=127.0.0.1:51118 fd=22 name= age=179 idle=0 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=26 qbuf-free=32742 obl=0 oll=0 omem=0 events=r cmd=client

id=15336 addr=192.168.220.140:52288 fd=14 name= age=1057 idle=1057 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=15348 addr=192.168.220.140:52312 fd=15 name= age=1047 idle=1047 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=15470 addr=192.168.220.140:52410 fd=18 name= age=926 idle=926 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=16039 addr=192.168.220.140:52566 fd=21 name= age=355 idle=355 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=15393 addr=192.168.220.140:52340 fd=16 name= age=1004 idle=953 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=15258 addr=192.168.220.140:52230 fd=12 name= age=1132 idle=1132 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=15325 addr=192.168.220.140:52258 fd=13 name= age=1067 idle=1062 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=15450 addr=192.168.220.140:52380 fd=17 name= age=944 idle=944 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

id=15575 addr=192.168.220.140:52448 fd=19 name= age=821 idle=821 flags=N db=0 sub=0 psub=0 multi=-1 qbuf=0 qbuf-free=0 obl=0 oll=0 omem=0 events=r cmd=client

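Assuming the correct password is configured:

On the first request to http://192.168.220.140:8291/actuator/health, this.connection is null, getNativeConnection obtains a connection, and the check runs against it.

On the second request to http://192.168.220.140:8291/actuator/health, this.connection is no longer null, so the connection cached by the previous call is used directly.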

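Why are StatefulRedisConnection/EpollSocketChannel/LinuxSocket not reclaimed?

LinuxSocket is referenced by EpollSocketChannel.

All of these EpollSocketChannel instances are referenced by a Map, EpollEventLoop.channels.

And EpollEventLoop has a long lifetime.

StatefulRedisConnection is not covered in detail here. It is likewise referenced by some other object.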
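The reference chain can be illustrated with a deliberately simplified model. This is not Netty's implementation: Channel and EventLoop below are hypothetical stand-ins, and the only point is that a long-lived registry map keeps every channel reachable until it is explicitly closed, so the garbage collector never gets a chance to release the underlying descriptor.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

final class ChannelRegistrySketch {
    /** Stand-in for a channel that owns one file descriptor. */
    static final class Channel {
        final int fd;
        Channel(int fd) { this.fd = fd; }
    }

    /** Stand-in for a long-lived event loop that tracks its channels by fd. */
    static final class EventLoop {
        private final Map<Integer, Channel> channels = new ConcurrentHashMap<>();

        Channel open(int fd) {
            Channel channel = new Channel(fd);
            channels.put(fd, channel); // the loop now holds a strong reference
            return channel;
        }

        void close(Channel channel) {
            channels.remove(channel.fd); // only an explicit close drops the reference
        }

        int registered() {
            return channels.size();
        }
    }

    /** Opens some channels, closes a subset, and reports how many the loop still references. */
    static int registeredAfter(int opened, int closed) {
        EventLoop loop = new EventLoop();
        Channel[] all = new Channel[opened];
        for (int i = 0; i < opened; i++) {
            all[i] = loop.open(100 + i); // fd numbers are arbitrary placeholders
        }
        for (int i = 0; i < closed && i < opened; i++) {
            loop.close(all[i]);
        }
        return loop.registered();
    }
}
```

Dropping the caller's own references changes nothing here: as long as the loop lives and close is never called, all six channels of a failed health check stay registered.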

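The failure scene

There are more than 30,000 LinuxSocket instances. Each corresponds to one socket and occupies one file descriptor.

All of them are leaked Redis connections and their related data structures.

The FileDescriptor objects mainly include a large number whose fd is -1.

1 sun.nio.ch.ServerSocketChannelImpl, for the listening port

A dozen or so sun.nio.ch.SocketChannelImpl, mainly used to serve client requests

3 java.net.SocksSocketImpl, which keep the connections to mongo

The fd situation in production is as follows: a large number of socket fds.

The socket details are as follows.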

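The problem in newer versions

Even in spring-data-redis 2.2.x the leak is still there.

During initialize, client.getPartitions throws an exception, so initialized always stays false. Every /actuator/health request therefore goes through client.getPartitions again, creating a batch of TCP connections that are never released.

Judging from the code, the problem still appears to exist.

Checking the fds of the process confirms that they keep leaking.

The exception looks like this.

Configuring a non-Redis service

The problem can also be reproduced against any reachable TCP service that is not Redis.

So it is fairly general, and also fairly well hidden.

It consumes connections not only on the client but also resources on the remote server.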
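The general effect, independent of Redis, can be observed with a few lines of plain socket code. The sketch below is an assumption-laden demo rather than a reproduction of the original bug: it opens client sockets to a local listener, measures descriptor growth through com.sun.management.UnixOperatingSystemMXBean (assumed to be available, as on Linux HotSpot JVMs), and then closes the sockets so the demo itself does not leak. The class and method names are hypothetical.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.lang.management.ManagementFactory;
import java.lang.management.OperatingSystemMXBean;
import java.net.InetAddress;
import java.net.ServerSocket;
import java.net.Socket;
import java.util.ArrayList;
import java.util.List;
import com.sun.management.UnixOperatingSystemMXBean;

final class PlainTcpLeakDemo {
    /** Open descriptor count of this JVM, or -1 if the platform bean is unavailable. */
    static long openFds() {
        OperatingSystemMXBean os = ManagementFactory.getOperatingSystemMXBean();
        if (os instanceof UnixOperatingSystemMXBean) {
            return ((UnixOperatingSystemMXBean) os).getOpenFileDescriptorCount();
        }
        return -1L;
    }

    /** Opens {@code count} unclosed client sockets and returns how much the descriptor count grew. */
    static long fdGrowthWhileHolding(int count) {
        List<Socket> held = new ArrayList<>();
        try (ServerSocket server = new ServerSocket(0, 50, InetAddress.getLoopbackAddress())) {
            long before = openFds();
            for (int i = 0; i < count; i++) {
                // Each connected client socket occupies one descriptor until it is closed.
                held.add(new Socket(server.getInetAddress(), server.getLocalPort()));
            }
            return openFds() - before;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } finally {
            for (Socket socket : held) {
                try {
                    socket.close(); // cleanup that the leaking code path never performs
                } catch (IOException ignored) {
                    // Best-effort cleanup in a demo.
                }
            }
        }
    }

    public static void main(String[] args) {
        System.out.println("descriptor growth: " + fdGrowthWhileHolding(6));
    }
}
```

The remote side pays as well: every connection held open here also occupies an entry in the listener's queue or an accepted socket on the server.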

End
