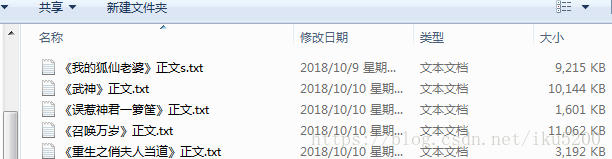

Goal: save each novel as its own txt file.

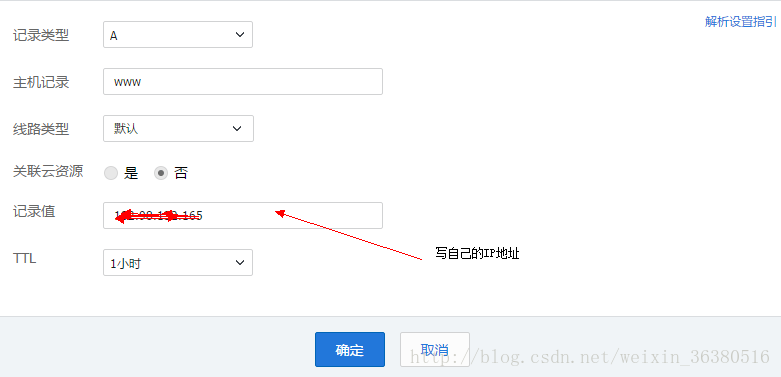

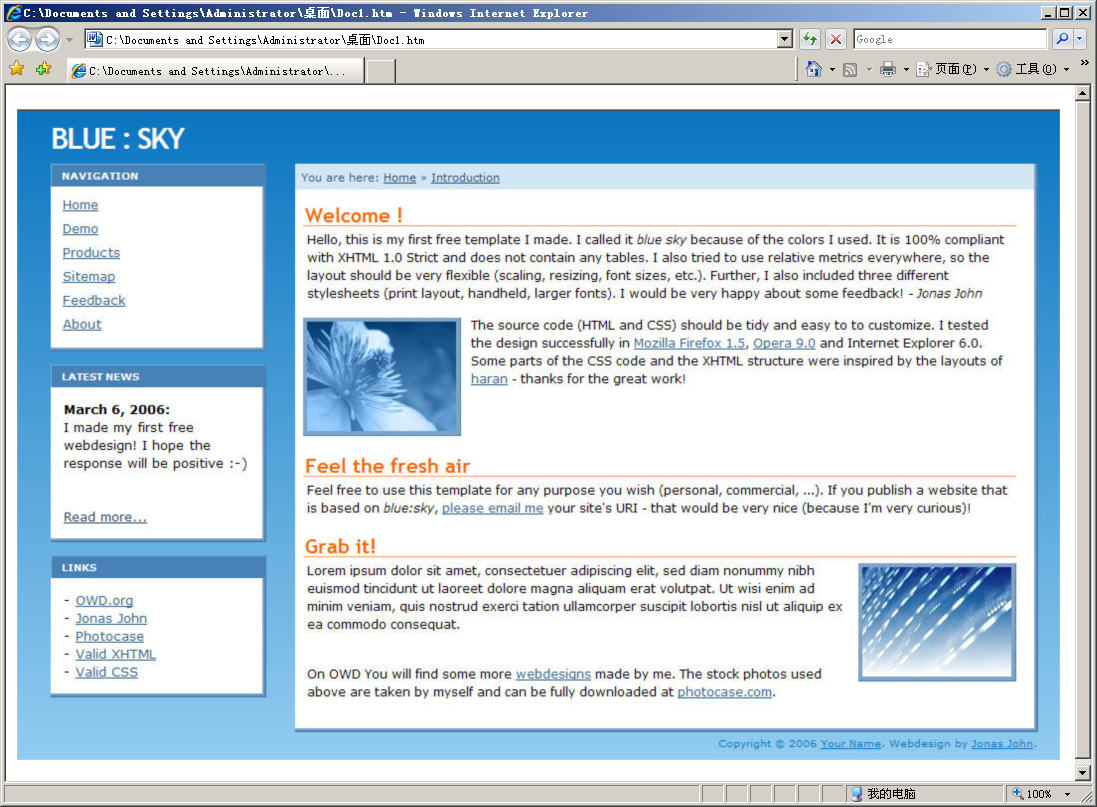

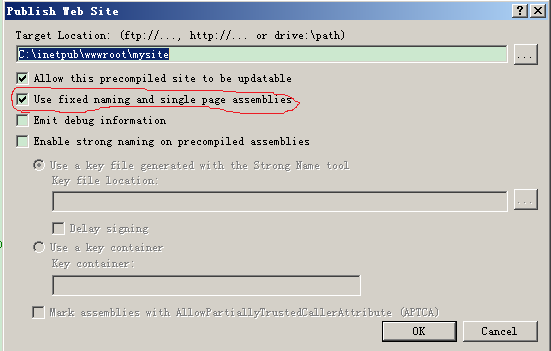

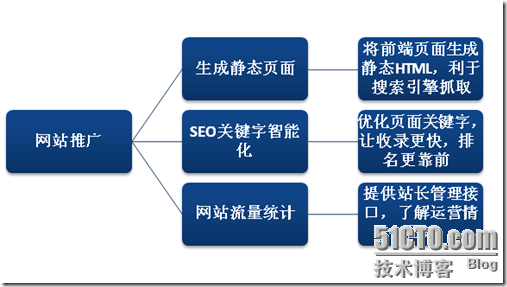

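Approach: get the URL of each novel (Figure 1), open it and get the URL of each chapter (Figure 2), then open each chapter, fetch its content (Figure 3), and append it to the novel's file. Repeat for every novel.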
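The three-level traversal can be sketched without touching the network. In this minimal sketch, `FAKE_SITE`, `list_novels`, `list_chapters`, `read_chapter`, and `save_all` are all hypothetical names standing in for the real requests and HTML parsing; only the loop structure mirrors the approach.

```python
import os
import tempfile

# Hypothetical in-memory stand-in for the site: novel -> chapters -> text.
FAKE_SITE = {
    "Novel A": {"Chapter 1": "first text", "Chapter 2": "second text"},
    "Novel B": {"Chapter 1": "only chapter"},
}


def list_novels():
    """Step 1: return the novels found on the index page."""
    return list(FAKE_SITE)


def list_chapters(novel):
    """Step 2: return the chapters listed on a novel's page."""
    return list(FAKE_SITE[novel])


def read_chapter(novel, chapter):
    """Step 3: return the body text of one chapter."""
    return FAKE_SITE[novel][chapter]


def save_all(out_dir):
    """Write one txt file per novel, appending chapters in order."""
    os.makedirs(out_dir, exist_ok=True)
    written = []
    for novel in list_novels():
        file_path = os.path.join(out_dir, novel + ".txt")
        for chapter in list_chapters(novel):
            # Mode 'a' appends, so each chapter lands after the previous one.
            with open(file_path, "a", encoding="utf-8") as f:
                f.write("\n\n" + chapter + "\n")
                f.write(read_chapter(novel, chapter))
        written.append(file_path)
    return written


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for p in save_all(tmp):
            print(os.path.basename(p))
```

Swapping the three stand-in functions for real page requests would leave the nested loops unchanged, which is the point of splitting the work into three steps.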

Result:

Every line is commented, so I won't explain further.

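import os

import requests
from bs4 import BeautifulSoup

if __name__ == '__main__':
    # Index page listing the completed novels
    url = 'https://www.biqubao.com/quanben/'
    # Site root, used to turn relative links into full URLs
    root_url = 'https://www.biqubao.com'
    # Local folder where the txt files are saved
    path = 'F:\\python\\txt'
    # Request the index page
    req = requests.get(url)
    # Set the encoding. To check a site's encoding in the browser:
    # press F12, type document.characterSet in the console, press Enter
    req.encoding = 'gbk'
    # Parse the whole page
    soup = BeautifulSoup(req.text, 'html.parser')
    # Find the tag with id "main" inside the page's first div
    list_tag = soup.div(id="main")
    # Collect all li tags inside it
    li = list_tag[0](['li'])
    # Drop the first li, which holds no novel
    del li[0]
    # Loop over the novels
    for i in li:
        # Text of the first a tag: the novel's category
        txt_type = i.a.string
        # href of the second a tag: the novel's relative URL
        short_url = i(['a'])[1].get('href')
        # Text of the fourth span tag (index 3): the author
        author = i(['span'])[3].string
        # Request the novel's page and set its encoding
        req = requests.get(root_url + short_url)
        req.encoding = 'gbk'
        # Parse the novel's page
        soup = BeautifulSoup(req.text, "html.parser")
        list_tag = soup.div(id="list")
        # Novel title
        name = list_tag[0].dl.dt.string
        print("Type: {} Short URL: {} Author: {} Title: {}".format(txt_type, short_url, author, name))
        # Create the output folder if it does not exist
        # (a per-novel folder, os.path.join(path, name), is not used)
        if not os.path.exists(path):
            os.makedirs(path)
        # Loop over all dd tags, one per chapter
        for dd_tag in list_tag[0].dl.find_all('dd'):
            # Chapter title
            zjName = dd_tag.string
            # Chapter URL
            zjUrl = root_url + dd_tag.a.get('href')
            # Request the chapter page and parse it
            req2 = requests.get(zjUrl)
            req2.encoding = 'gbk'
            zj_soup = BeautifulSoup(req2.text, "html.parser")
            content_tag = zj_soup.div.find(id="content")
            # Replace non-breaking spaces with newlines
            text = content_tag.text.replace('\xa0', '\n')
            # str.replace returns a new string, so the result must be assigned
            text = text.replace('\ufffd', '\n')
            # Open the novel's file in 'a' (append) mode and write the chapter
            with open(os.path.join(path, name + ".txt"), 'a', encoding='utf-8') as f:
                f.write('\n' + '\n' + zjName)
                f.write(text)
            print("{}-------> written".format(zjName))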
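The text cleanup step relies on `str.replace`, which returns a new string instead of modifying the original, so each result has to be assigned or chained. A minimal sketch of that step as a standalone function follows; `clean_chapter_text` is a hypothetical helper name, not part of the script.

```python
def clean_chapter_text(raw):
    """Turn non-breaking spaces and replacement characters into newlines."""
    # str.replace returns a new string, so the calls are chained here
    # rather than called for their side effects.
    return raw.replace('\xa0', '\n').replace('\ufffd', '\n')


if __name__ == "__main__":
    sample = "Line one\xa0Line two\ufffdLine three"
    print(clean_chapter_text(sample))
```

Keeping the cleanup in one function makes it easy to check against a sample string before running a full crawl.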