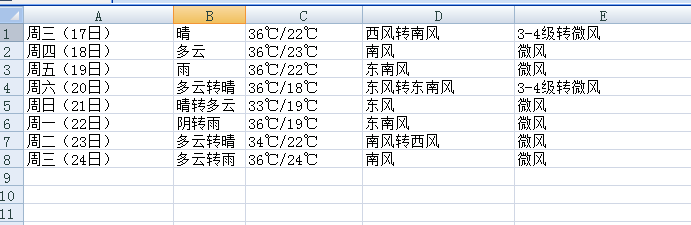

参考一个前辈的代码,修改了一个案例开始学习beautifulsoup做爬虫获取天气信息,前辈获取的是7日内天气,

我看旁边还有8-15日就模仿修改了下。其实其他都没有变化,只变换了获取标签的部分。但是我碰到

一个span获取的问题,如我的案例中每日的源代码是这样的。

<li class="t"> <span class="time">周五(19日)</span> <big class="png30 d301"></big> <big class="png30 n301"></big> <span class="wea">雨</span> <span class="tem"><em>36℃</em>/22℃</span> <span class="wind">东南风</span> <span class="wind1">微风</span> </li>

上门的所有span标签中,日期,天气,风向都可以通过beautifulsoup进行标签匹配获取。唯独温度获取不到,

获取到的值为none,我奇怪了好酒,用span.em能获取到36°,获取不完全,不符合我的要求。最后没办法。

我只能通过获取到这个span这一回内容

<span class="tem"><em>36℃</em>/22℃</span>

然后通过字符串替换替换掉多余的字符。剩余36℃/22℃

得到这个结果。存入变量并写入csv文件。

以下为全部代码,如有不对的地方欢迎指教。

''' Created on 2017年5月10日@author: bekey qq:402151718 '''#conding:UTF-8import requests import csv import random import time import socket import http.client #import urllib.request from bs4 import BeautifulSoupdef get_content(url , data = None):header={'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,*/*;q=0.8','Accept-Encoding': 'gzip, deflate, sdch','Accept-Language': 'zh-CN,zh;q=0.8','Connection': 'keep-alive','User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/57.0.2987.133 Safari/537.36'}timeout = random.choice(range(80, 180))while True:try:rep = requests.get(url,headers = header,timeout = timeout)rep.encoding = 'utf-8'# req = urllib.request.Request(url, data, header)# response = urllib.request.urlopen(req, timeout=timeout)# html1 = response.read().decode('UTF-8', errors='ignore')# response.close()break# except urllib.request.HTTPError as e:# print( '1:', e)# time.sleep(random.choice(range(5, 10)))# # except urllib.request.URLError as e:# print( '2:', e)# time.sleep(random.choice(range(5, 10)))except socket.timeout as e:print( '3:', e)time.sleep(random.choice(range(8,15)))except socket.error as e:print( '4:', e)time.sleep(random.choice(range(20, 60)))except http.client.BadStatusLine as e:print( '5:', e)time.sleep(random.choice(range(30, 80)))except http.client.IncompleteRead as e:print( '6:', e)time.sleep(random.choice(range(5, 15)))return rep.text# return html_textdef get_data(html_text):final = []bs = BeautifulSoup(html_text, "html.parser") # 创建BeautifulSoup对象body = bs.body # 获取body部分data = body.find('div', {'id': '15d'}) # 找到id为7d的divul = data.find('ul') # 获取ul部分li = ul.find_all('li') # 获取所有的lifor day in li: # 对每个li标签中的内容进行遍历temp = []#print(day)span = day.find_all('span') #找到所有的span标签#print(span)date = span[0].string # 找到日期temp.append(date) # 添加到temp中wea1 = span[1].string#获取天气情况temp.append(wea1) #加入到listtem =str(span[2])tem = tem.replace('<span class="tem"><em>', '')tem = tem.replace('</span>','')tem = tem.replace('</em>','')#tem = tem.find('span').string #获取温度temp.append(tem) #温度加入list windy = span[3].stringtemp.append(windy)#加入到listwindy1 = span[4].stringtemp.append(windy1)#加入到list final.append(temp)return finaldef write_data(data, name):file_name = namewith open(file_name, 'a', errors='ignore', newline='') as f:f_csv = csv.writer(f)f_csv.writerows(data)if __name__ == '__main__':url ='http://www.weather.com.cn/weather15d/101180101.shtml'html = get_content(url)#print(html)result = get_data(html)#print(result)write_data(result, 'weather7.csv')

效果如图:

项目地址:git@github.com:zhangbei59/weather_get.git