Stereo depth estimation with ONNX: Practical Stereo Matching via Cascaded Recurrent Network with Adaptive Correlation https://github.com/ibaiGorordo/ONNX-CREStereo-Depth-Estimation

Stereo depth estimation needs the images of both views, left and right, read from the dataset.
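Reading the two views usually comes down to pairing files. The following is a minimal sketch, assuming a hypothetical layout in which left and right images sit in two separate folders and matching frames share the same file name; the function name `pair_stereo_views` and the folder layout are illustrative and not taken from the repository.

```python
from pathlib import Path


def pair_stereo_views(left_dir, right_dir, suffixes=(".png", ".jpg", ".jpeg")):
    """Return sorted (left_path, right_path) pairs that share a file name.

    Assumption: both folders use identical file names for matching frames.
    """
    left_dir, right_dir = Path(left_dir), Path(right_dir)
    pairs = []
    for left_path in sorted(left_dir.iterdir()):
        # Skip anything that is not an image file.
        if left_path.suffix.lower() not in suffixes:
            continue
        right_path = right_dir / left_path.name
        # Keep only frames for which both views exist.
        if right_path.is_file():
            pairs.append((left_path, right_path))
    return pairs
```

Each pair can then be loaded with an image reader such as `cv2.imread` before being passed to the estimator.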

Use the model to estimate the depth map:

model_path = f'models/crestereo_{version}_iter{iters}_{shape[0]}x{shape[1]}.onnx'

depth_estimator = CREStereo(model_path)

disparity_map = depth_estimator(left_img, right_img)
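The snippet above leaves `version`, `iters` and `shape` undefined, and the model returns disparity rather than metric depth. The sketch below fills in the path with example values and applies the standard pinhole stereo relation depth = focal length × baseline / disparity. The values `"init"`, `5`, `(240, 320)`, the focal length and the baseline are all assumptions for illustration; the real ones depend on which model file was downloaded and on the camera calibration.

```python
import math


def build_model_path(version, iters, shape, models_dir="models"):
    """Build the ONNX file name using the same pattern as the f-string above."""
    return f"{models_dir}/crestereo_{version}_iter{iters}_{shape[0]}x{shape[1]}.onnx"


def disparity_to_depth(disparity_px, focal_length_px, baseline_m):
    """Convert one disparity value (pixels) to depth (metres).

    Non-positive disparity means no valid match, so depth is reported as infinite.
    """
    if disparity_px <= 0:
        return math.inf
    return focal_length_px * baseline_m / disparity_px


# Example values only (assumptions, not taken from the repository).
model_path = build_model_path(version="init", iters=5, shape=(240, 320))
depth_m = disparity_to_depth(disparity_px=50.0, focal_length_px=1000.0, baseline_m=0.5)
```

The same formula applies element-wise to a whole disparity map, for example with a NumPy array, as long as zero disparities are masked first.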

CREStereo model: https://github.com/megvii-research/CREStereo

CREStereo - Pytorch: https://github.com/ibaiGorordo/CREStereo-Pytorch

PINTO0309's model zoo: https://github.com/PINTO0309/PINTO_model_zoo

PINTO0309's model conversion tool: https://github.com/PINTO0309/openvino2tensorflow

Driving Stereo dataset: https://drivingstereo-dataset.github.io/

Depthai library: https://pypi.org/project/depthai/

Original paper: https://arxiv.org/abs/2203.11483

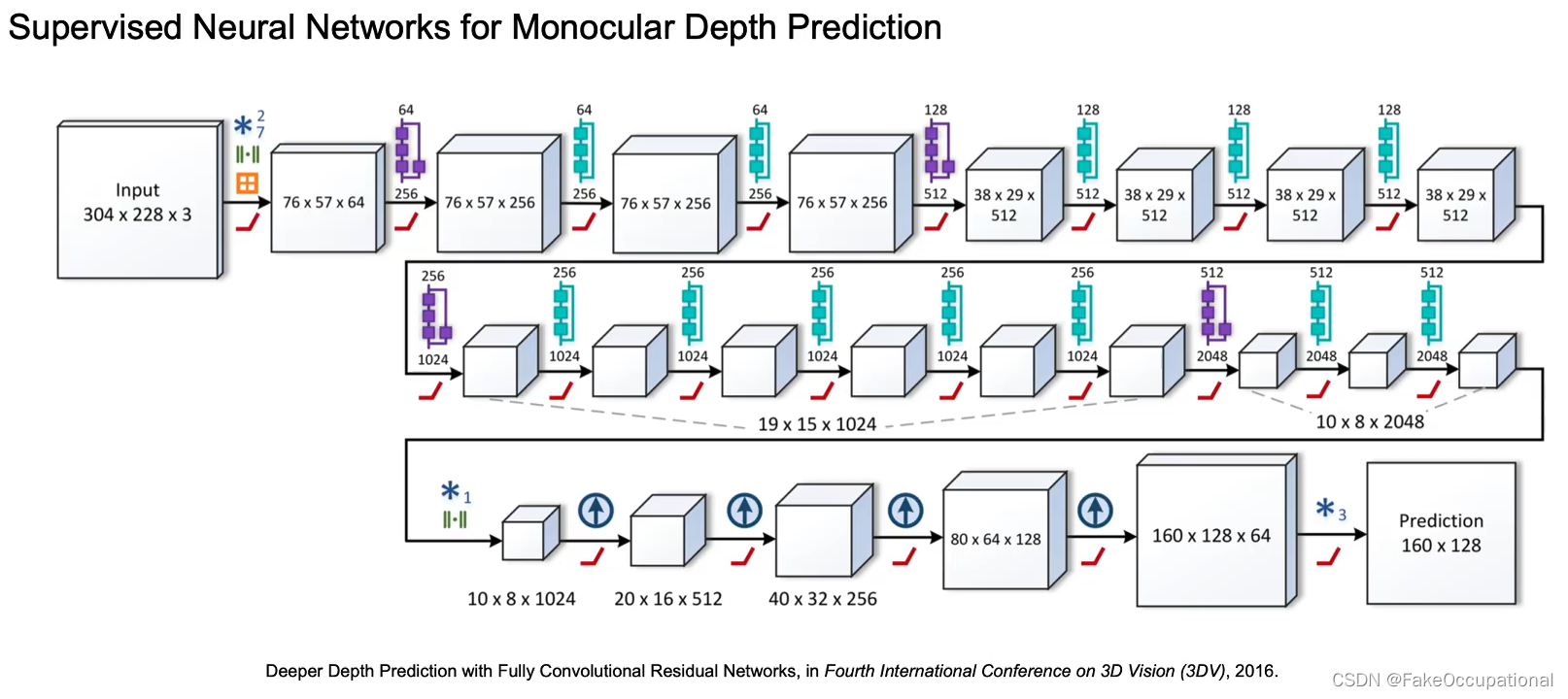

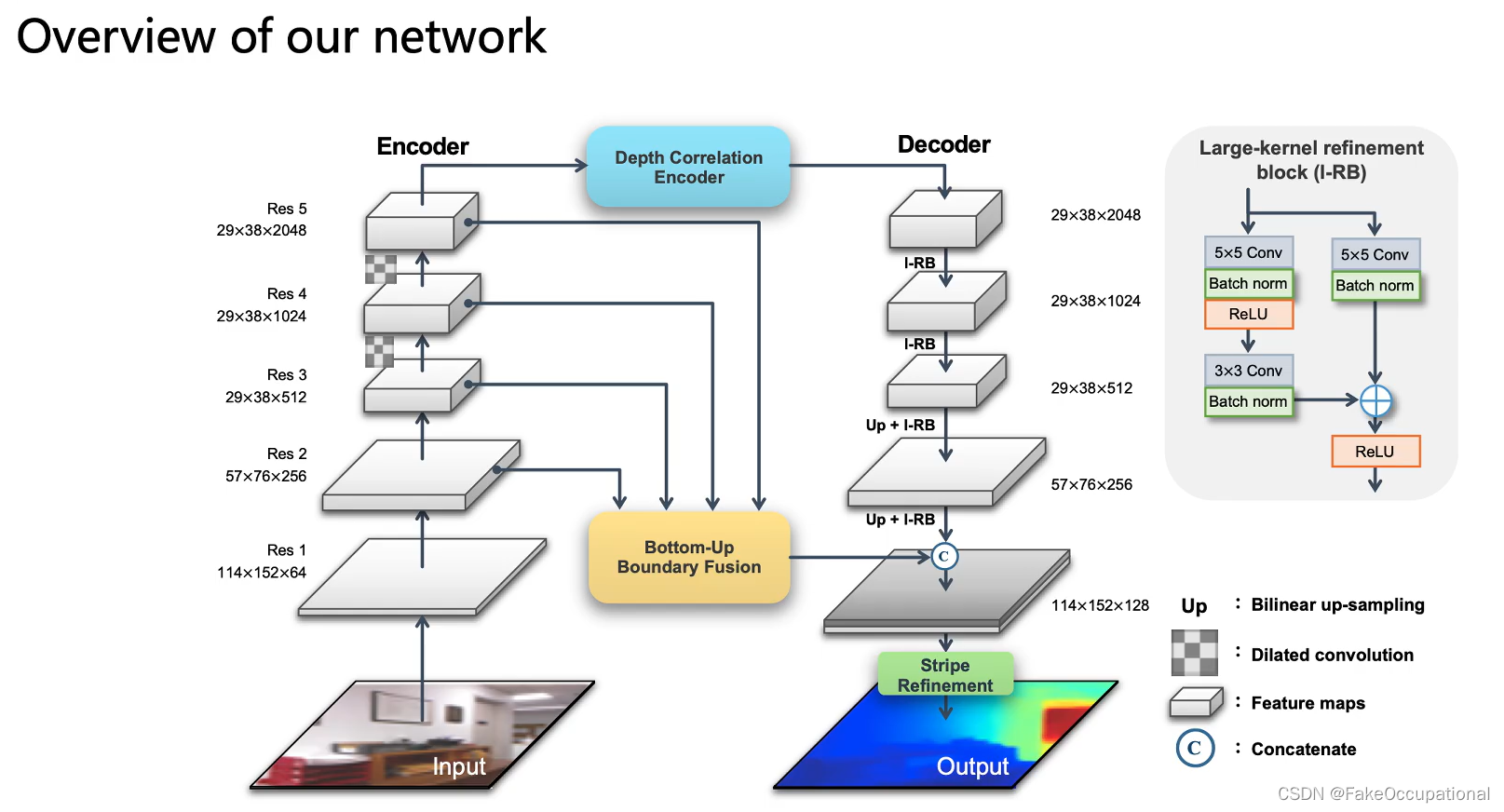

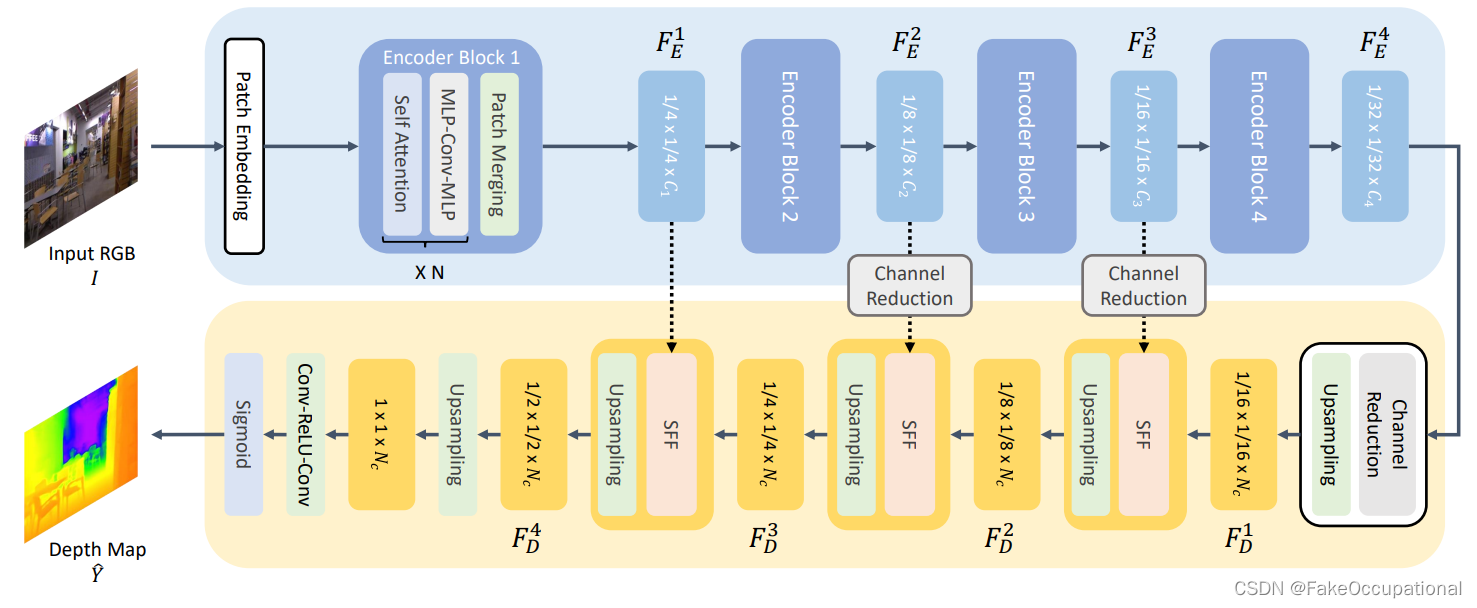

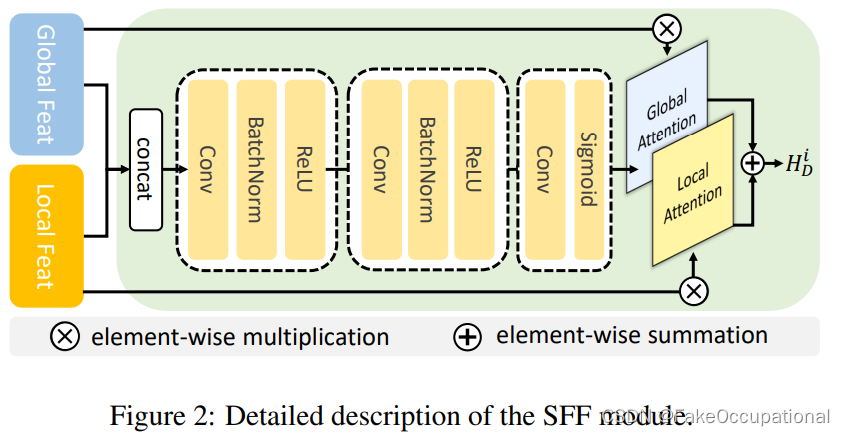

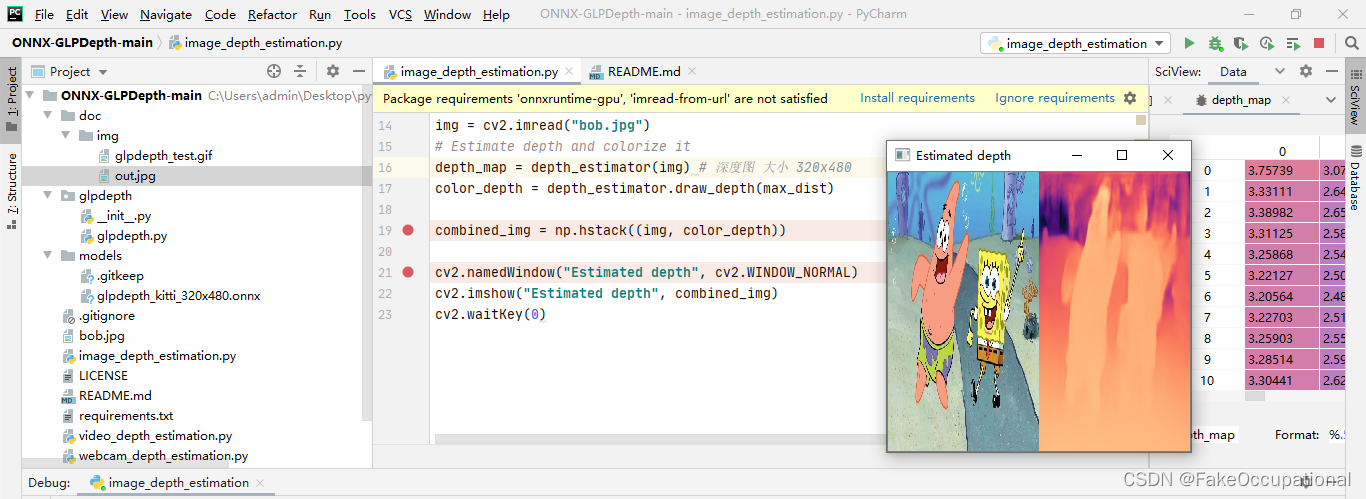

Monocular depth estimation

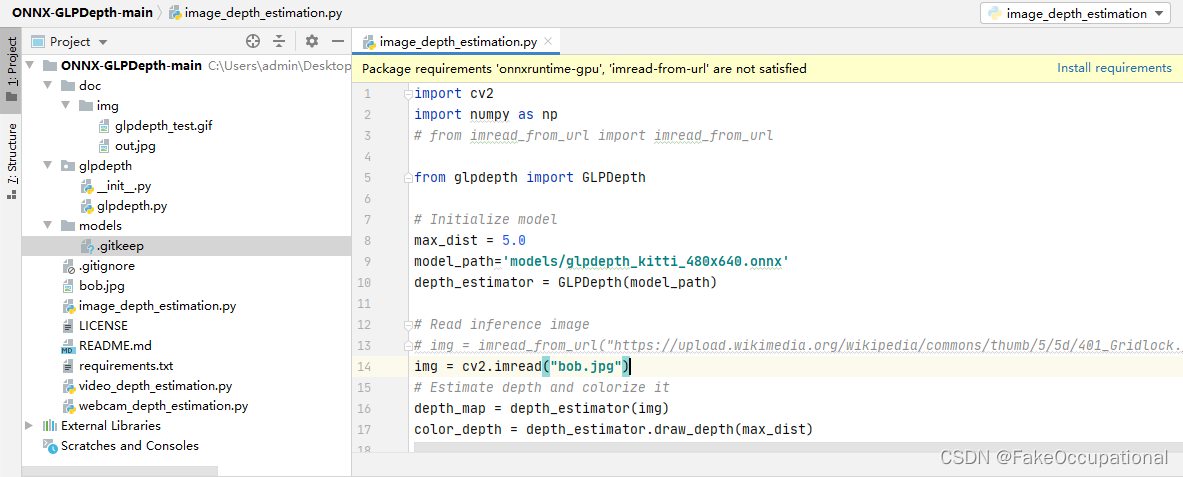

GLPDepth

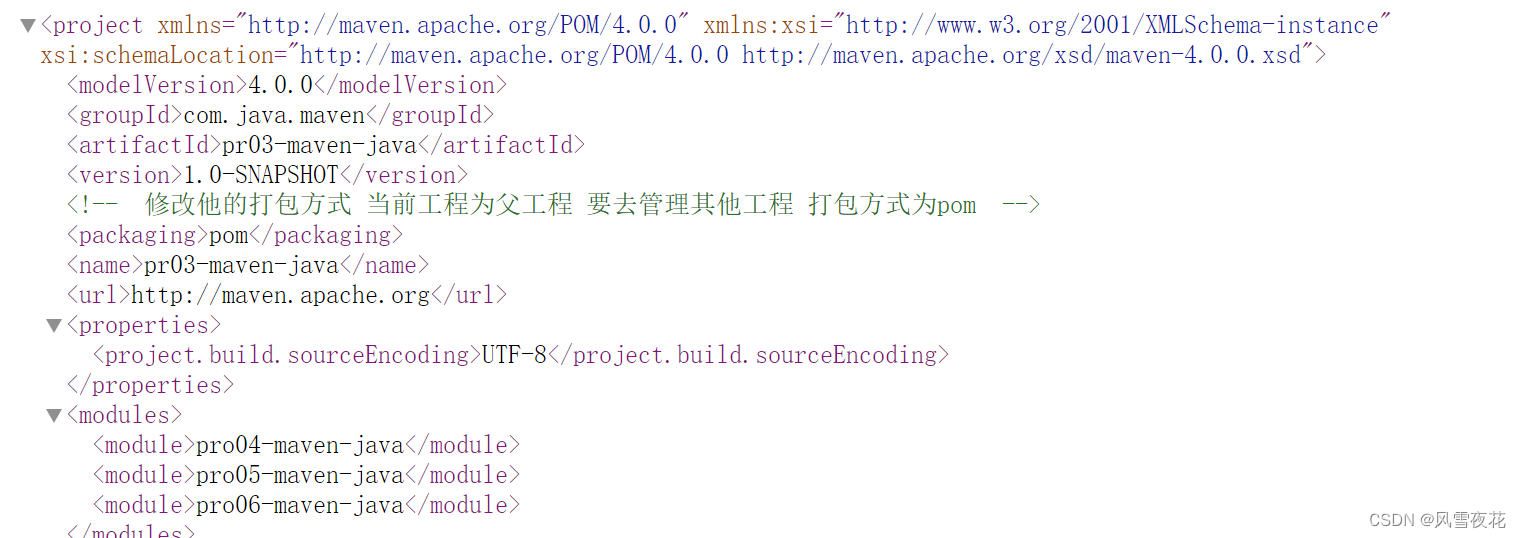

Download the code from GitHub (with one small change: load the input from a local file instead of fetching it online)

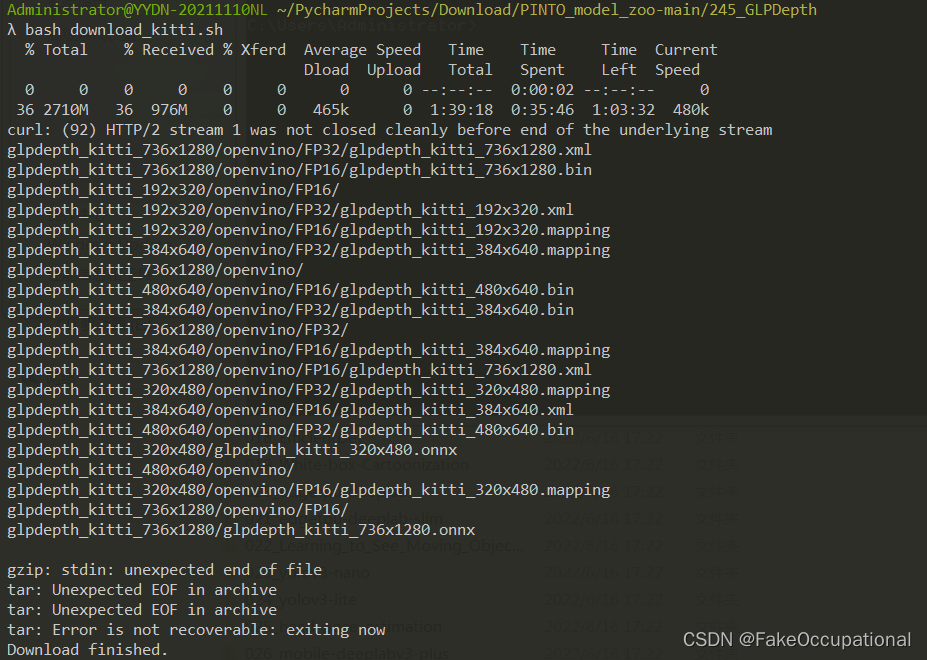

Download the model from PINTO0309's model zoo: https://github.com/PINTO0309/PINTO_model_zoo

Run it from bash

monodepth2 https://github.com/nianticlabs/monodepth2

python test_simple.py --image_path assets/test_image.jpg --model_name mono+stereo_640x192

with torch.no_grad():
    for idx, image_path in enumerate(paths):
        if image_path.endswith("_disp.jpg"):
            print("don't try to predict disparity for a disparity image!")
            continue
        # Load image and preprocess
        input_image = pil.open(image_path).convert('RGB')
        original_width, original_height = input_image.size
        input_image = input_image.resize((feed_width, feed_height), pil.LANCZOS)
        input_image = transforms.ToTensor()(input_image).unsqueeze(0)
        # PREDICTION
        input_image = input_image.to(device)
        features = encoder(input_image)
        outputs = depth_decoder(features)
        disp = outputs[("disp", 0)]
        # Resize back to the original size, e.g. torch.Size([1, 1, 235, 638])
        disp_resized = torch.nn.functional.interpolate(
            disp, (original_height, original_width), mode="bilinear", align_corners=False)
        # Saving numpy file
        output_name = os.path.splitext(os.path.basename(image_path))[0]
        scaled_disp, depth = disp_to_depth(disp, 0.1, 100)
        if args.pred_metric_depth:
            name_dest_npy = os.path.join(output_directory, "{}_depth.npy".format(output_name))
            metric_depth = STEREO_SCALE_FACTOR * depth.cpu().numpy()
            np.save(name_dest_npy, metric_depth)
        else:
            name_dest_npy = os.path.join(output_directory, "{}_disp.npy".format(output_name))
            np.save(name_dest_npy, scaled_disp.cpu().numpy())
        # Saving colormapped depth image
        disp_resized_np = disp_resized.squeeze().cpu().numpy()
        vmax = np.percentile(disp_resized_np, 95)
        normalizer = mpl.colors.Normalize(vmin=disp_resized_np.min(), vmax=vmax)
        mapper = cm.ScalarMappable(norm=normalizer, cmap='magma')
        colormapped_im = (mapper.to_rgba(disp_resized_np)[:, :, :3] * 255).astype(np.uint8)
        im = pil.fromarray(colormapped_im)
        name_dest_im = os.path.join(output_directory, "{}_disp.jpeg".format(output_name))
        im.save(name_dest_im)
        print(" Processed {:d} of {:d} images - saved predictions to:".format(idx + 1, len(paths)))
        print(" - {}".format(name_dest_im))
        print(" - {}".format(name_dest_npy))
print('-> Done!')

Without a network connection, running the script sometimes fails with urllib.error.URLError: <urlopen error [Errno -2] Name or service not known>. Commenting out the download call avoids the error, provided the model files are already available locally:

# download_model_if_doesnt_exist(args.model_name)
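Instead of commenting the call out by hand, the download can be guarded. This is a rough sketch under stated assumptions: `ensure_model` is a hypothetical helper, `download_fn` stands in for whatever download function the script uses, and the `models/<model_name>` folder layout is assumed rather than confirmed.

```python
from pathlib import Path


def ensure_model(model_name, download_fn, models_dir="models"):
    """Return the model folder, calling download_fn only when it is missing.

    Assumption: a non-empty folder models_dir/model_name means the model
    is already on disk, so no network access is needed.
    """
    model_dir = Path(models_dir) / model_name
    if model_dir.is_dir() and any(model_dir.iterdir()):
        return model_dir  # already downloaded, skip the network entirely
    try:
        download_fn(model_name)
    except OSError as exc:  # urllib.error.URLError is a subclass of OSError
        raise RuntimeError(
            f"Model '{model_name}' is not in '{models_dir}' and could not be "
            "downloaded; copy the files there manually when offline."
        ) from exc
    return model_dir
```

With this guard the script keeps working offline once the model is present, and fails with a clearer message when it is not.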

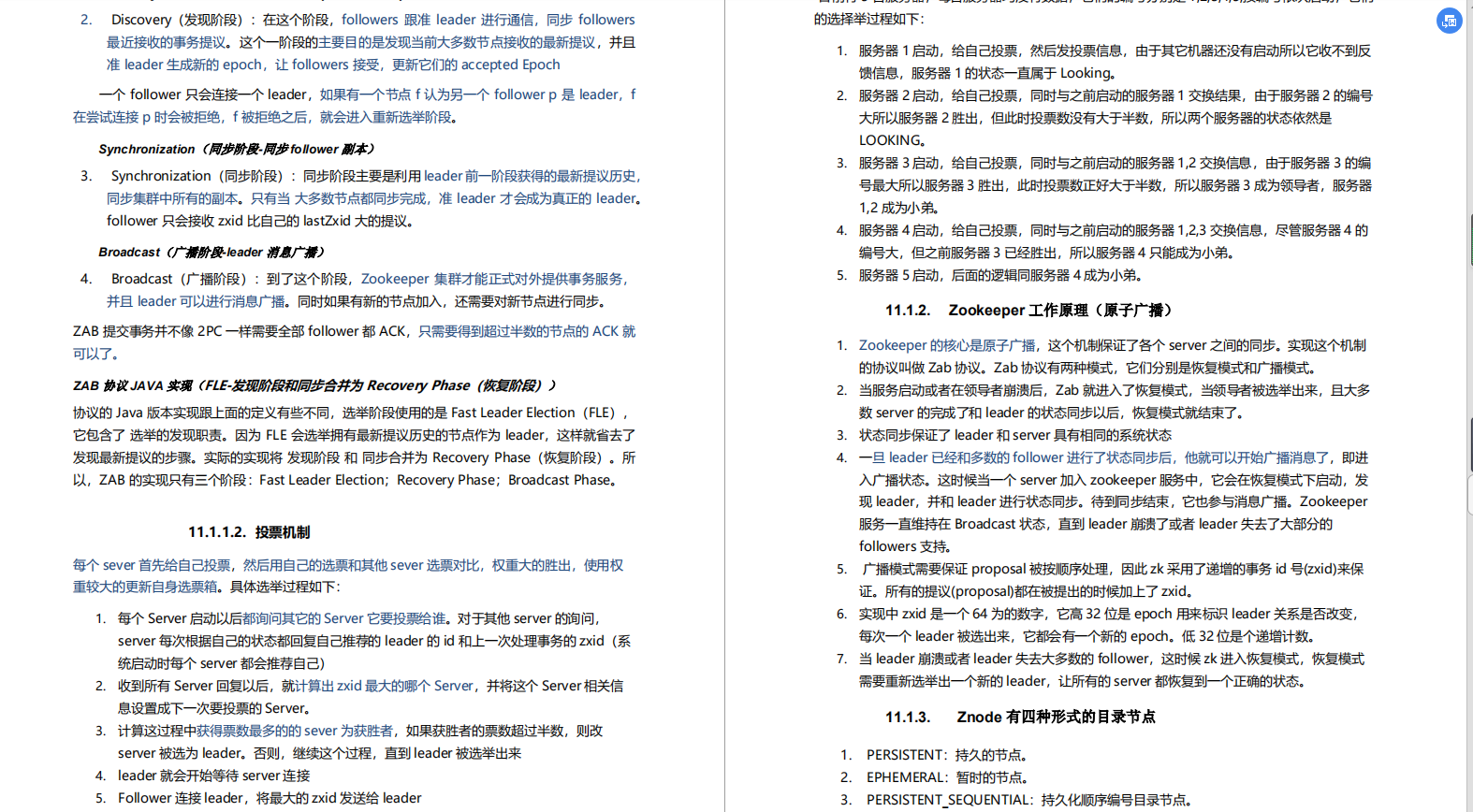
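The `disp_to_depth(disp, 0.1, 100)` call in the monodepth2 loop maps the network's sigmoid output in [0, 1] to a disparity range and then inverts it to depth. A plain-Python sketch of that mapping for a single value follows; it mirrors the helper as understood from the monodepth2 code, and the factor 5.4 used for metric depth is believed to match the repository's `STEREO_SCALE_FACTOR` but should be verified against the source.

```python
def disp_to_depth(disp, min_depth, max_depth):
    """Map a sigmoid output in [0, 1] to (scaled disparity, depth)."""
    min_disp = 1.0 / max_depth  # farthest point gives the smallest disparity
    max_disp = 1.0 / min_depth  # nearest point gives the largest disparity
    scaled_disp = min_disp + (max_disp - min_disp) * disp
    depth = 1.0 / scaled_disp
    return scaled_disp, depth


# Assumed value; check it against the constant in the monodepth2 source.
STEREO_SCALE_FACTOR = 5.4

scaled_disp, depth = disp_to_depth(0.5, 0.1, 100)
metric_depth = STEREO_SCALE_FACTOR * depth  # only meaningful for stereo-trained models
```

A network output of 0 corresponds to the maximum depth (100) and an output of 1 to the minimum depth (0.1), so the result is always bounded.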

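References and further reading

A summary of monocular depth estimation

An in-depth look at self-supervised monocular depth estimation: Monodepth2

consistent-video-depth-estimation: an interesting application on GitHub

Stunning results! ONNX-CREStereo depth estimation!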

monodepth https://github.com/mrharicot/monodepth

https://github.com/nianticlabs/monodepth2

Digging Into Self-Supervised Monocular Depth Estimation

https://arxiv.org/abs/1806.01260

- https://www.bilibili.com/video/BV1HZ4y1c7nC