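Install the NFS server:

```shell
yum install rpcbind nfs-utils -y

systemctl enable rpcbind
systemctl enable nfs
systemctl start rpcbind
systemctl start nfs

# directory used by the StorageClass (dynamic provisioning)
mkdir -p /root/data/sc-data
# directory used by the static PV below; it is exported too, so it must exist
mkdir -p /root/data/nginx/pv
```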

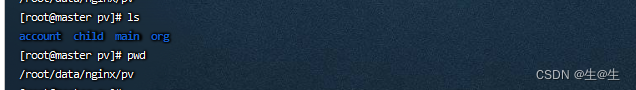

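Add both directories to `/etc/exports` on the master (192.168.1.90), then reload the export table (for example with `exportfs -r`, or by restarting `nfs`):

```
/root/data/sc-data 192.168.1.0/24(rw,no_root_squash)
/root/data/nginx/pv 192.168.1.0/24(rw,no_root_squash)
```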
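Whitespace matters in `/etc/exports`: a space between the client and the parenthesized options (for example `192.168.1.0/24 (rw,no_root_squash)`) is parsed as two separate entries, so the subnet gets the default options and the `(rw,...)` options apply to every host. The minimal POSIX `sh` sketch below flags such lines. The function name `check_exports_line` is hypothetical, and it only looks for the space-before-`(` pattern, not every syntax error `exportfs` would reject.

```shell
#!/bin/sh
# check_exports_line (hypothetical helper): print a warning and return 1
# when an /etc/exports line has a space before "(", which would split the
# client spec from its options. Comments and blank lines are ignored.
check_exports_line() {
    case "$1" in
        ''|'#'*) return 0 ;;
        *' ('*)
            echo "suspicious: $1"
            return 1 ;;
        *) return 0 ;;
    esac
}

# Example: check every line of the exports file.
# while IFS= read -r l; do check_exports_line "$l"; done < /etc/exports
```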

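Check from another server that the exports are usable (the client needs `nfs-utils` installed as well, which kubelet also relies on to mount NFS volumes):

```
[root@node1 ~]# showmount -e 192.168.1.90
Export list for 192.168.1.90:
/root/data/sc-data  192.168.1.0/24
/root/data/nginx/pv 192.168.1.0/24
```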
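To script the same check on a node, one option is to filter the `showmount -e` output shown above. The sketch below assumes that output format (one header line, then one `path clients` entry per line); the function name `is_exported` is hypothetical.

```shell
#!/bin/sh
# is_exported PATH (hypothetical helper): read `showmount -e` output on
# stdin and succeed only if PATH is listed as an export. The first line
# ("Export list for ...:") is skipped; the path is the first field.
is_exported() {
    awk -v p="$1" 'NR > 1 && $1 == p { found = 1 } END { exit !found }'
}

# On node1 this would be used as:
# showmount -e 192.168.1.90 | is_exported /root/data/nginx/pv && echo "pv export visible"
```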

Create the PV:

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: pv-nginx
spec:
  capacity:
    storage: 5Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  nfs:
    path: /root/data/nginx/pv
    server: 192.168.1.90
```

Create the PVC:

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: pvc-nginx
  namespace: dev
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 4Gi
```

Create a ConfigMap:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: nginx-configmap
  namespace: dev
data:
  default.conf: |-
    server {
        listen 80;
        server_name localhost;

        location / {
            root /usr/share/nginx/html/account;
            index index.html index.htm;
        }

        location /account {
            proxy_pass http://192.168.1.130:8088/account;
        }

        error_page 405 =200 $uri;
        error_page 500 502 503 504 /50x.html;
        location = /50x.html {
            root html;
        }
    }

    server {
        listen 8081;
        server_name localhost;

        location / {
            root /usr/share/nginx/html/org;
            index index.html index.htm;
        }

        location /org {
            proxy_pass http://192.168.1.130:8082/org;
            proxy_http_version 1.1;
            proxy_set_header Upgrade $http_upgrade;
            proxy_set_header Connection "Upgrade";
            proxy_set_header X-Real-IP $remote_addr;
            proxy_read_timeout 600s;
        }

        location ~ ^/V1.0/(.*) {
            rewrite /(.*)$ /org/$1 break;
            proxy_pass http://192.168.1.130:8082;
            proxy_set_header Host $proxy_host;
        }
    }
```
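In the `location ~ ^/V1.0/(.*)` block, `rewrite /(.*)$ /org/$1 break;` captures everything after the leading `/`, so a request for `/V1.0/x` is forwarded to `192.168.1.130:8082` as `/org/V1.0/x` (the `/V1.0` segment is kept, not stripped). The POSIX `sh` function below only mimics that path mapping so it is easy to check by hand; `rewrite_v1` is a hypothetical name, and the sketch ignores query strings and every other location.

```shell
#!/bin/sh
# rewrite_v1 URI (hypothetical helper): mimic how the nginx block
#   location ~ ^/V1.0/(.*) { rewrite /(.*)$ /org/$1 break; ... }
# maps a request path. Paths outside that location are printed unchanged.
rewrite_v1() {
    case "$1" in
        # "?" stands in for the unescaped "." in ^/V1.0/, which matches any character
        /V1?0/*) printf '/org/%s\n' "${1#/}" ;;
        *)       printf '%s\n' "$1" ;;
    esac
}

rewrite_v1 /V1.0/users/1   # prints /org/V1.0/users/1
```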

Create an HPA to improve availability (you can skip this one here):

```yaml
apiVersion: autoscaling/v1
kind: HorizontalPodAutoscaler
metadata:
  name: pc-hpa
  namespace: dev
spec:
  minReplicas: 1
  maxReplicas: 10
  targetCPUUtilizationPercentage: 10
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: nginx-deploy
```

Create a pod instance to verify the setup:

```yaml
apiVersion: v1
kind: Service
metadata:
  labels:
    app: nginx-service
  name: nginx-service
  namespace: dev
spec:
  ports:
    - name: account-nginx
      port: 80
      protocol: TCP
      targetPort: 80
      nodePort: 30013
    - name: org-nginx
      port: 8081
      protocol: TCP
      targetPort: 8081
      nodePort: 30014
  selector:
    app: nginx-pod1
  type: NodePort
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: nginx-deploy
  name: nginx-deploy
  namespace: dev
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx-pod1
  strategy:
    type: RollingUpdate
  template:
    metadata:
      labels:
        app: nginx-pod1
      namespace: dev
    spec:
      containers:
        - image: nginx:1.17.1
          name: nginx
          ports:
            - containerPort: 80
              protocol: TCP
            - containerPort: 8081
              protocol: TCP
          resources:
            limits:
              cpu: "1"
            requests:
              cpu: "500m"
          volumeMounts:
            - name: nginx-config
              mountPath: /etc/nginx/conf.d/
              readOnly: true
            - name: nginx-html
              mountPath: /usr/share/nginx/html/
              readOnly: false
      volumes:
        - name: nginx-config
          configMap:
            name: nginx-configmap
        - name: nginx-html
          persistentVolumeClaim:
            claimName: pvc-nginx
            readOnly: false
```

Check the binding status between the PV and PVC:

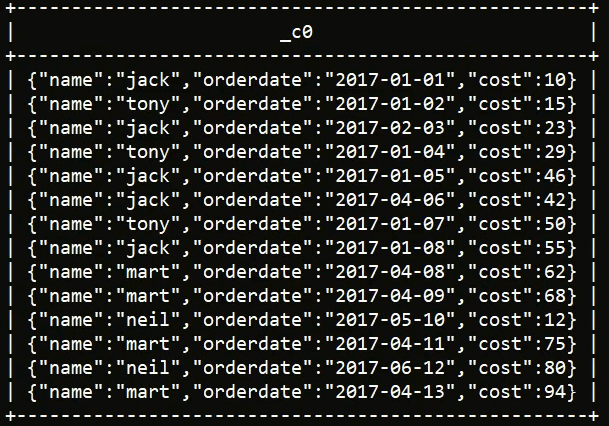

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

persistentvolume/pv-nginx 5Gi RWX Retain Bound dev/pvc-nginx 2d6hNAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

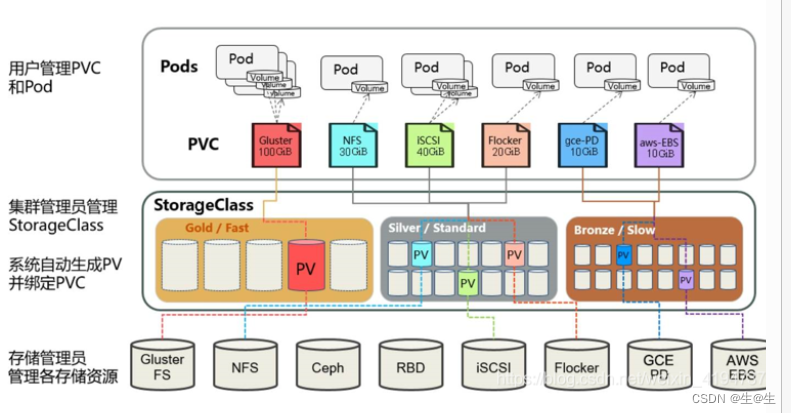

persistentvolumeclaim/pvc-nginx Bound pv-nginx 5Gi RWX 2d6h创建一个pod 实例验证一下 以上的这种演示方式是通过手动的情况下创建的
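Why does the 4Gi claim show `5Gi`? With static provisioning, a claim binds to an available PV whose capacity is at least the requested size and whose access modes include the requested ones; once bound, the PVC reports the PV's full capacity. The POSIX `sh` sketch below models only those two checks using whole-Gi integers. `can_bind` is a hypothetical name, and the real controller also compares `storageClassName`, label selectors and `volumeMode`. One related catch: if a default StorageClass already exists when a class-less claim like `pvc-nginx` is created, the claim is assigned that default class and no longer matches `pv-nginx`; setting `storageClassName: ""` on the claim avoids this.

```shell
#!/bin/sh
# can_bind PV_GI PV_MODES PVC_GI PVC_MODES (hypothetical helper)
# Simplified static-binding check: the PV must be at least as large as the
# request and offer every requested access mode (modes are space-separated,
# e.g. "RWX" or "RWO ROX"). Sizes are whole Gi numbers only.
can_bind() {
    pv_gi=$1 pv_modes=$2 pvc_gi=$3 pvc_modes=$4
    [ "$pv_gi" -ge "$pvc_gi" ] || return 1
    for mode in $pvc_modes; do
        case " $pv_modes " in
            *" $mode "*) ;;          # PV offers this mode
            *) return 1 ;;
        esac
    done
    return 0
}

# pv-nginx (5Gi, RWX) against pvc-nginx (4Gi, RWX): binds, and the PVC
# then reports the PV's capacity (5Gi), as in the output above.
can_bind 5 RWX 4 RWX && echo "binds"
```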

Create the NFS provisioner (nfs-client-provisioner):

```yaml
kind: Deployment
apiVersion: apps/v1
metadata:
  name: nfs-client-provisioner
  namespace: kube-system
spec:
  replicas: 2
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                topologyKey: kubernetes.io/hostname
                labelSelector:
                  matchLabels:
                    app: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: quay.io/external_storage/nfs-client-provisioner:v3.1.0-k8s1.11
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: nfs-client-provisioner
            - name: NFS_SERVER
              value: 192.168.1.90
            - name: NFS_PATH
              value: /root/data/sc-data
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.1.90
            path: /root/data/sc-data
```

Create the RBAC objects (the set of authorization policies the provisioner needs):

```yaml
kind: ServiceAccount
apiVersion: v1
metadata:
  name: nfs-client-provisioner
  namespace: kube-system
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: kube-system
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  namespace: kube-system
  name: leader-locking-nfs-client-provisioner
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  namespace: kube-system
  name: leader-locking-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: kube-system
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io
```

Create the StorageClass:

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs
  annotations:
    storageclass.beta.kubernetes.io/is-default-class: "true"
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: nfs-client-provisioner
parameters:
  archiveOnDelete: "true"
reclaimPolicy: Retain
```

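Behavior observed for each combination of `archiveOnDelete` and `reclaimPolicy`:

1. Case 1: `archiveOnDelete: "true"`, `reclaimPolicy: Delete`
   The directory is renamed to `arch...`; the data is still there, but a newly created pod does not use the data in the old directory.

2. Case 2: `archiveOnDelete: "false"`, `reclaimPolicy: Delete`
   All data in the NFS directory is deleted.

3. Case 3: `archiveOnDelete: "false"`, `reclaimPolicy: Retain`
   With the reclaim policy set to Retain, deleting the pod and then the PVC and PV does not clean up the files on NFS; the directory keeps its `default...` name, and when the pod is created again it uses the earlier data.

4. Case 4: `archiveOnDelete: "true"`, `reclaimPolicy: Retain`
   The PVC directory under the data path keeps its name (still `default...`); a newly created pod does not use the retained data, and the PV's status changes to Released.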
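These cases appear to line up with how nfs-client-provisioner handles deletion in its source: its delete hook only runs when `reclaimPolicy` is `Delete`, and it then removes the directory if `archiveOnDelete` is false and otherwise renames it with an `archived-` prefix. Under `Retain` the hook never runs, so the directory is left in place with its original name whatever `archiveOnDelete` says; whether a new pod then sees the old files depends on whether it mounts the same PVC (deleting only the pod keeps the claim and its directory) or a new PVC, which is provisioned a fresh directory. The POSIX `sh` function below encodes that decision table; `nfs_dir_after_pvc_delete` is a hypothetical name and this is a model, not the provisioner's code.

```shell
#!/bin/sh
# nfs_dir_after_pvc_delete RECLAIM_POLICY ARCHIVE_ON_DELETE (hypothetical)
# Prints what happens to the provisioned directory on the NFS share once
# the PVC is deleted. In this sketch only the literal "false" disables
# archiving; an empty value (parameter not set) archives, like "true".
nfs_dir_after_pvc_delete() {
    case "$1" in
        Retain)
            echo "kept (same name, PV Released)" ;;
        Delete)
            if [ "$2" = "false" ]; then
                echo "deleted"
            else
                echo "renamed to archived-<name>"
            fi ;;
        *)
            echo "unknown reclaimPolicy: $1" >&2
            return 1 ;;
    esac
}

nfs_dir_after_pvc_delete Delete true     # case 1
nfs_dir_after_pvc_delete Delete false    # case 2
nfs_dir_after_pvc_delete Retain false    # case 3
nfs_dir_after_pvc_delete Retain true     # case 4
```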

Create a test pod:

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx
spec:
  containers:
    - name: nginx
      image: nginx:latest
      ports:
        - containerPort: 80
      volumeMounts:
        - name: www
          mountPath: /usr/share/nginx/html
  volumes:
    - name: www
      persistentVolumeClaim:
        claimName: nginx
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nginx
spec:
  storageClassName: "nfs"
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 5Gi
```
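When this claim is provisioned, nfs-client-provisioner creates a subdirectory under `NFS_PATH` (`/root/data/sc-data`) named `<namespace>-<pvc name>-<pv name>`, which would explain the `default...` directory names in the cases above, assuming the test PVC `nginx` is applied in the `default` namespace. The generated PV name (`pvc-<uid>`) is only known after provisioning; the PV name in the example below is made up, and `provisioned_dir` is a hypothetical helper.

```shell
#!/bin/sh
# provisioned_dir NAMESPACE PVC_NAME PV_NAME [NFS_PATH] (hypothetical)
# Build the path nfs-client-provisioner is expected to create for a claim:
# NFS_PATH/<namespace>-<pvc name>-<pv name>.
provisioned_dir() {
    root=${4:-/root/data/sc-data}
    printf '%s/%s-%s-%s\n' "$root" "$1" "$2" "$3"
}

# Made-up PV name for illustration; look up the real one with `kubectl get pvc nginx`.
provisioned_dir default nginx pvc-0123abcd   # prints /root/data/sc-data/default-nginx-pvc-0123abcd
```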