Table of Contents

- I. Output Parsers

- Quick Start

- II. List Parser

- III. Datetime Parser

- IV. Enum Parser

- V. Auto-Fixing Parser

- VI. Pydantic (JSON) Parser

- VII. Retry Parser

- VIII. Structured Output Parser

Reposted and adapted from:

https://python.langchain.com.cn/docs/modules/model_io/output_parsers/

I. Output Parsers

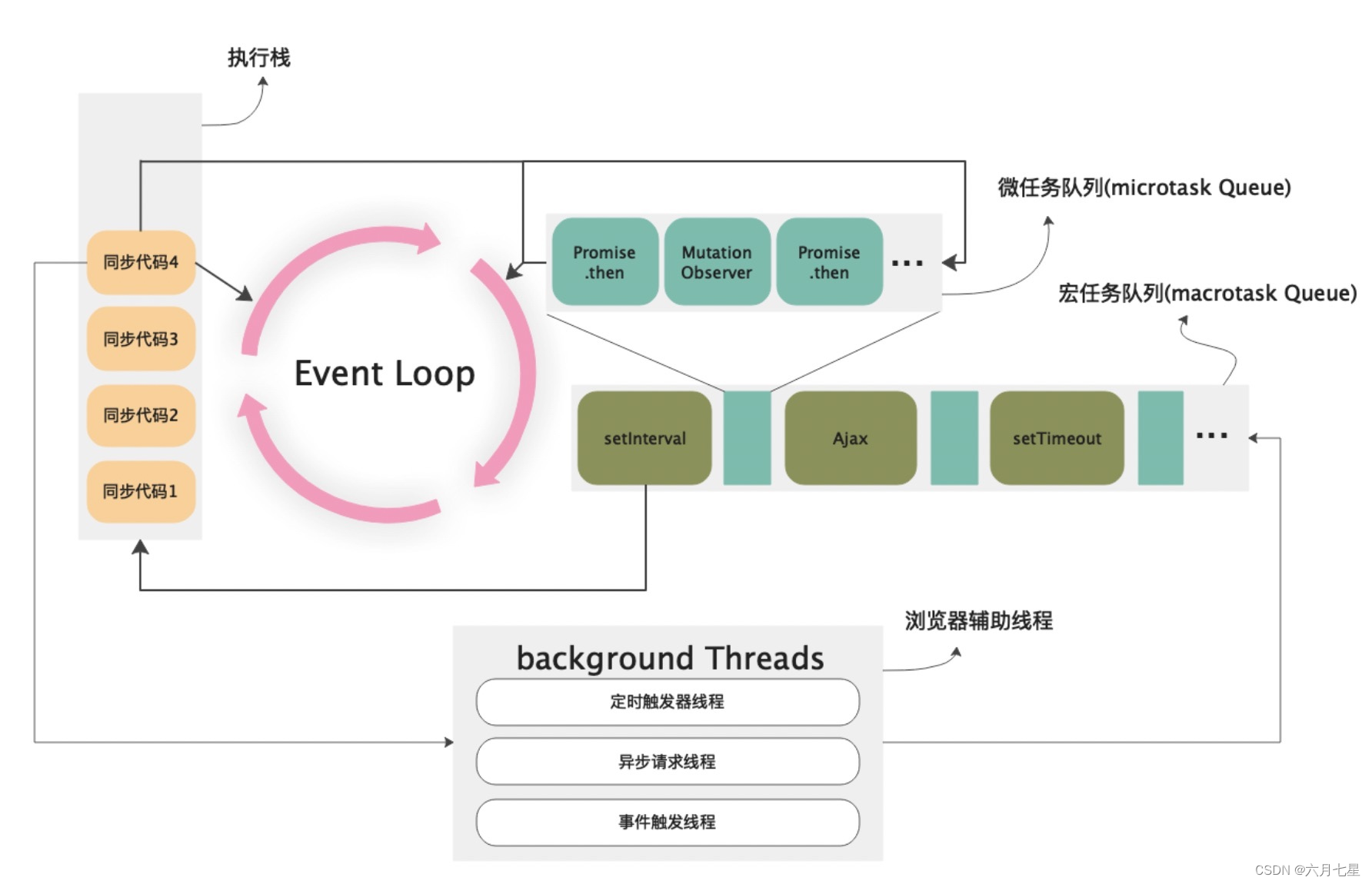

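Language models output text, but you often want more structured information than plain text. This is where output parsers come in.

Output parsers are classes that help structure language model responses. An output parser must implement two main methods:

- "Get format instructions" (`get_format_instructions`): a method that returns a string describing how the language model's output should be formatted.

- "Parse" (`parse`): a method that takes a string (assumed to be the language model's response) and parses it into some structure.

There is also one optional method:

- "Parse with prompt" (`parse_with_prompt`): a method that takes a string (assumed to be the language model's response) and a prompt (assumed to be the prompt that generated that response) and parses the string into some structure.

The prompt is provided mainly for cases where the parser needs information from it to retry or fix the output.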
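The interface described above can be sketched in plain Python. This is only an illustration of the two required methods and the optional one: `SketchOutputParser` and `YesNoParser` are hypothetical names invented here and are not LangChain classes.

```python
from abc import ABC, abstractmethod
from typing import Generic, TypeVar

T = TypeVar("T")


class SketchOutputParser(ABC, Generic[T]):
    """Hypothetical stand-in for an output parser interface (not LangChain's class)."""

    @abstractmethod
    def get_format_instructions(self) -> str:
        """Return text telling the model how to format its answer."""

    @abstractmethod
    def parse(self, text: str) -> T:
        """Turn the raw model response into a structured value."""

    def parse_with_prompt(self, completion: str, prompt: str) -> T:
        """Optional hook: this default ignores the prompt and just parses."""
        return self.parse(completion)


class YesNoParser(SketchOutputParser[bool]):
    """Toy parser that expects the model to answer 'yes' or 'no'."""

    def get_format_instructions(self) -> str:
        return "Answer with a single word: yes or no."

    def parse(self, text: str) -> bool:
        cleaned = text.strip().lower()
        if cleaned not in {"yes", "no"}:
            raise ValueError(f"Expected 'yes' or 'no', got {text!r}")
        return cleaned == "yes"


parser = YesNoParser()
print(parser.get_format_instructions())  # text to append to a prompt
print(parser.parse(" Yes\n"))            # True
```

The format instructions go into the prompt, and `parse` is applied to whatever the model sends back, which is the same division of labor the real parsers in the following sections use.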

Quick Start

Below we introduce the main type of output parser, PydanticOutputParser.

from langchain.prompts import PromptTemplate, ChatPromptTemplate, HumanMessagePromptTemplate
from langchain.llms import OpenAI
from langchain.chat_models import ChatOpenAI
from langchain.output_parsers import PydanticOutputParser
from pydantic import BaseModel, Field, validator
from typing import List

model_name = 'text-davinci-003'
temperature = 0.0
model = OpenAI(model_name=model_name, temperature=temperature)

# Define your desired data structure.
class Joke(BaseModel):
    setup: str = Field(description="question to set up a joke")
    punchline: str = Field(description="answer to resolve the joke")

    # You can add custom validation logic easily with Pydantic.
    @validator('setup')
    def question_ends_with_question_mark(cls, field):
        if field[-1] != '?':
            raise ValueError("Badly formed question!")
        return field

# Set up a parser + inject instructions into the prompt template.
parser = PydanticOutputParser(pydantic_object=Joke)

prompt = PromptTemplate(
    template="Answer the user query.\n{format_instructions}\n{query}\n",
    input_variables=["query"],
    partial_variables={"format_instructions": parser.get_format_instructions()}
)

# And a query intended to prompt a language model to populate the data structure.
joke_query = "Tell me a joke."
_input = prompt.format_prompt(query=joke_query)

output = model(_input.to_string())

parser.parse(output)
# -> Joke(setup='Why did the chicken cross the road?', punchline='To get to the other side!')

II. List Parser

Use this output parser when you want the model to return a comma-separated list of items.

from langchain.output_parsers import CommaSeparatedListOutputParser
from langchain.prompts import PromptTemplate, ChatPromptTemplate, HumanMessagePromptTemplate
from langchain.llms import OpenAI
from langchain.chat_models import ChatOpenAI

output_parser = CommaSeparatedListOutputParser()

format_instructions = output_parser.get_format_instructions()
prompt = PromptTemplate(
    template="List five {subject}.\n{format_instructions}",
    input_variables=["subject"],
    partial_variables={"format_instructions": format_instructions}
)

model = OpenAI(temperature=0)

_input = prompt.format(subject="ice cream flavors")
output = model(_input)

output_parser.parse(output)
# -> ['Vanilla', 'Chocolate', 'Strawberry', 'Mint Chocolate Chip', 'Cookies and Cream']
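To make the parsing step concrete, here is a minimal standard-library sketch of what turning a comma-separated response into a list involves. `parse_comma_separated` is a hypothetical helper written for this note, not the library's implementation, and the sample response is made up to match the output above.

```python
from typing import List


def parse_comma_separated(text: str) -> List[str]:
    """Split a response such as 'a, b, c' into a list of trimmed, non-empty items."""
    return [item.strip() for item in text.strip().split(",") if item.strip()]


# Hypothetical raw model response, including the leading newlines models often emit.
response = "\n\nVanilla, Chocolate, Strawberry, Mint Chocolate Chip, Cookies and Cream"
print(parse_comma_separated(response))
# -> ['Vanilla', 'Chocolate', 'Strawberry', 'Mint Chocolate Chip', 'Cookies and Cream']
```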

III. Datetime Parser

This output parser shows how to parse LLM output into a datetime value.

from langchain.prompts import PromptTemplate
from langchain.output_parsers import DatetimeOutputParser
from langchain.chains import LLMChain
from langchain.llms import OpenAI

output_parser = DatetimeOutputParser()
template = """Answer the users question:

{question}

{format_instructions}"""
prompt = PromptTemplate.from_template(
    template,
    partial_variables={"format_instructions": output_parser.get_format_instructions()},
)

chain = LLMChain(prompt=prompt, llm=OpenAI())

output = chain.run("around when was bitcoin founded?")
# output -> '\n\n2008-01-03T18:15:05.000000Z'

output_parser.parse(output)
# -> datetime.datetime(2008, 1, 3, 18, 15, 5)
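The conversion itself can be reproduced with the standard library alone. The sketch below assumes the model was asked for the pattern `%Y-%m-%dT%H:%M:%S.%fZ`, which is inferred from the sample output above; `parse_datetime` and `ASSUMED_FORMAT` are hypothetical names used only for illustration.

```python
from datetime import datetime

# Assumed pattern, chosen to match the sample output '2008-01-03T18:15:05.000000Z'.
ASSUMED_FORMAT = "%Y-%m-%dT%H:%M:%S.%fZ"


def parse_datetime(text: str, fmt: str = ASSUMED_FORMAT) -> datetime:
    """Strip surrounding whitespace and parse the text with the given strptime pattern."""
    try:
        return datetime.strptime(text.strip(), fmt)
    except ValueError as exc:
        raise ValueError(f"Could not parse datetime from {text!r}") from exc


print(parse_datetime("\n\n2008-01-03T18:15:05.000000Z"))
# -> 2008-01-03 18:15:05
```

Stripping whitespace first matters here because the raw completion starts with two newlines, which `strptime` would otherwise reject.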

IV. Enum Parser

This section shows how to use the enum output parser.

from langchain.output_parsers.enum import EnumOutputParser
from enum import Enum

class Colors(Enum):
    RED = "red"
    GREEN = "green"
    BLUE = "blue"

parser = EnumOutputParser(enum=Colors)

parser.parse("red")
# -> <Colors.RED: 'red'>

# Can handle spaces
parser.parse(" green")
# -> <Colors.GREEN: 'green'>

# And new lines
parser.parse("blue\n")
# -> <Colors.BLUE: 'blue'>

# And raises errors when appropriate
parser.parse("yellow")
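The behavior shown above (whitespace is tolerated, unknown values are rejected) can be sketched with the `enum` module alone. `parse_enum` is a hypothetical helper, and the `ValueError` it raises is a choice made for this sketch rather than the exception type the library uses.

```python
from enum import Enum


class Colors(Enum):
    RED = "red"
    GREEN = "green"
    BLUE = "blue"


def parse_enum(text: str, enum_cls=Colors):
    """Map a model response to an enum member by value, ignoring surrounding whitespace."""
    cleaned = text.strip()
    try:
        return enum_cls(cleaned)  # value lookup, e.g. Colors("red")
    except ValueError:
        valid = [member.value for member in enum_cls]
        raise ValueError(
            f"Response {cleaned!r} is not one of the expected values: {valid}"
        ) from None


print(parse_enum(" green"))  # Colors.GREEN
print(parse_enum("blue\n"))  # Colors.BLUE
```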

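V. Auto-Fixing Parser

This output parser wraps another output parser and, when that first parser fails, calls another LLM to fix any errors.

Instead of simply raising an error, we can do something more useful.

Specifically, we can pass the malformed output, together with the format instructions, to the model and ask it to fix the output.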
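The wrap-and-repair idea can be sketched without any LLM by substituting a stub function for the model. Everything below is hypothetical scaffolding for illustration (`parse_action`, `parse_with_fix`, `fake_fix_llm`, and the repair prompt wording); it is not the library's implementation.

```python
import json
from typing import Callable


def parse_action(text: str) -> dict:
    """Strict inner parser: the response must be JSON containing an 'action' key."""
    data = json.loads(text)
    if "action" not in data:
        raise ValueError("missing 'action' field")
    return data


def parse_with_fix(
    text: str,
    parse: Callable[[str], dict],
    fix_llm: Callable[[str], str],
    format_instructions: str,
    max_attempts: int = 1,
) -> dict:
    """Try `parse`; on failure, ask `fix_llm` to repair the text, then parse again."""
    for _ in range(max_attempts):
        try:
            return parse(text)
        except ValueError as exc:  # json.JSONDecodeError is a subclass of ValueError
            repair_prompt = (
                f"Instructions:\n{format_instructions}\n\n"
                f"Completion:\n{text}\n\n"
                f"Error:\n{exc}\n\n"
                "Rewrite the completion so that it follows the instructions."
            )
            text = fix_llm(repair_prompt)
    return parse(text)  # final attempt; raises if the repair did not help


def fake_fix_llm(repair_prompt: str) -> str:
    """Stub standing in for a real model call; always returns valid JSON."""
    return '{"action": "search"}'


malformed = "{'action': 'search'}"  # single quotes are not valid JSON
fixed = parse_with_fix(malformed, parse_action, fake_fix_llm, "Return JSON with an 'action' key.")
print(fixed)
# -> {'action': 'search'}
```

Note that the repair prompt contains only the format instructions, the bad completion, and the error. That is enough for formatting mistakes like this one, but not for missing information.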

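VI. Pydantic (JSON) Parser

This output parser lets you specify an arbitrary JSON schema and query the LLM for JSON output that conforms to it.

Keep in mind that large language models are leaky abstractions! You must use an LLM with sufficient capacity to generate well-formed JSON. In the OpenAI family, DaVinci does this reliably, while Curie's ability drops off sharply.

Use Pydantic to declare your data model. Pydantic's BaseModel is like a Python dataclass, but with actual type checking and coercion.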

from langchain.prompts import (
    PromptTemplate,
    ChatPromptTemplate,
    HumanMessagePromptTemplate,
)
from langchain.llms import OpenAI
from langchain.chat_models import ChatOpenAI
from langchain.output_parsers import PydanticOutputParser
from pydantic import BaseModel, Field, validator
from typing import List

model_name = "text-davinci-003"
temperature = 0.0
model = OpenAI(model_name=model_name, temperature=temperature)

# Define your desired data structure.
class Joke(BaseModel):
    setup: str = Field(description="question to set up a joke")
    punchline: str = Field(description="answer to resolve the joke")

    # You can add custom validation logic easily with Pydantic.
    @validator("setup")
    def question_ends_with_question_mark(cls, field):
        if field[-1] != "?":
            raise ValueError("Badly formed question!")
        return field

# And a query intended to prompt a language model to populate the data structure.
joke_query = "Tell me a joke."

# Set up a parser + inject instructions into the prompt template.
parser = PydanticOutputParser(pydantic_object=Joke)

prompt = PromptTemplate(
    template="Answer the user query.\n{format_instructions}\n{query}\n",
    input_variables=["query"],
    partial_variables={"format_instructions": parser.get_format_instructions()},
)

_input = prompt.format_prompt(query=joke_query)

output = model(_input.to_string())

parser.parse(output)
# -> Joke(setup='Why did the chicken cross the road?', punchline='To get to the other side!')

# Here's another example, but with a compound typed field.
class Actor(BaseModel):
    name: str = Field(description="name of an actor")
    film_names: List[str] = Field(description="list of names of films they starred in")

actor_query = "Generate the filmography for a random actor."

parser = PydanticOutputParser(pydantic_object=Actor)

prompt = PromptTemplate(
    template="Answer the user query.\n{format_instructions}\n{query}\n",
    input_variables=["query"],
    partial_variables={"format_instructions": parser.get_format_instructions()},
)

_input = prompt.format_prompt(query=actor_query)

output = model(_input.to_string())

parser.parse(output)
# -> Actor(name='Tom Hanks', film_names=['Forrest Gump', 'Saving Private Ryan', 'The Green Mile', 'Cast Away', 'Toy Story'])
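For readers without an API key, the parse-and-validate step can be approximated with the standard library. This is a rough sketch under stated assumptions: a plain dataclass with manual checks stands in for the Pydantic model (it validates but, unlike Pydantic, does not coerce types), and `parse_actor` and the sample response are hypothetical.

```python
import json
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Actor:
    name: str
    film_names: List[str]

    def __post_init__(self):
        # Manual validation; a Pydantic model would do this (plus coercion) for us.
        if not isinstance(self.name, str):
            raise ValueError("name must be a string")
        if not isinstance(self.film_names, list) or not all(
            isinstance(film, str) for film in self.film_names
        ):
            raise ValueError("film_names must be a list of strings")


def parse_actor(text: str) -> Actor:
    """Pull the first {...} span out of the response and load it into Actor."""
    match = re.search(r"\{.*\}", text, re.DOTALL)
    if match is None:
        raise ValueError(f"No JSON object found in {text!r}")
    data = json.loads(match.group())
    try:
        return Actor(**data)
    except TypeError as exc:  # missing or unexpected keys
        raise ValueError(f"Response does not match the Actor schema: {exc}") from exc


# Hypothetical model response with some chatter around the JSON payload.
response = 'Here you go:\n{"name": "Tom Hanks", "film_names": ["Forrest Gump", "Cast Away"]}'
print(parse_actor(response))
# -> Actor(name='Tom Hanks', film_names=['Forrest Gump', 'Cast Away'])
```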

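VII. Retry Parser

In some cases a parsing error can be fixed by looking only at the output, but in other cases it cannot.

One example is when the output is not only badly formatted but also incomplete. Consider the example below.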

from langchain.llms import OpenAI
from langchain.chat_models import ChatOpenAI
from langchain.prompts import (
    PromptTemplate,
    ChatPromptTemplate,
    HumanMessagePromptTemplate,
)
from langchain.output_parsers import (
    PydanticOutputParser,
    OutputFixingParser,
    RetryOutputParser,
)
from pydantic import BaseModel, Field, validator
from typing import List

template = """Based on the user question, provide an Action and Action Input for what step should be taken.
{format_instructions}
Question: {query}
Response:"""

class Action(BaseModel):
    action: str = Field(description="action to take")
    action_input: str = Field(description="input to the action")

parser = PydanticOutputParser(pydantic_object=Action)

prompt = PromptTemplate(
    template="Answer the user query.\n{format_instructions}\n{query}\n",
    input_variables=["query"],
    partial_variables={"format_instructions": parser.get_format_instructions()},
)

prompt_value = prompt.format_prompt(query="who is leo di caprios gf?")

bad_response = '{"action": "search"}'

This response cannot be parsed, so the following call raises an error:

parser.parse(bad_response)
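The bad response above is missing `action_input`, and nothing in the output alone says what it should be; that information lives in the original prompt. A minimal sketch of a retry that sends the prompt back along with the failed completion follows. The model is replaced by a stub, and `parse_action`, `parse_with_retry`, `fake_retry_llm`, and the retry prompt wording are all hypothetical.

```python
import json
from typing import Callable


def parse_action(text: str) -> dict:
    """Require both fields of the Action schema from the example above."""
    data = json.loads(text)
    missing = [key for key in ("action", "action_input") if key not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return data


def parse_with_retry(
    completion: str,
    prompt: str,
    parse: Callable[[str], dict],
    retry_llm: Callable[[str], str],
    max_retries: int = 1,
) -> dict:
    """On a parse failure, resend the ORIGINAL prompt plus the bad completion."""
    for _ in range(max_retries):
        try:
            return parse(completion)
        except ValueError as exc:
            retry_prompt = (
                f"Prompt:\n{prompt}\n\n"
                f"Completion:\n{completion}\n\n"
                f"The completion did not satisfy the prompt's constraints ({exc}). "
                "Please try again:"
            )
            completion = retry_llm(retry_prompt)
    return parse(completion)  # final attempt; raises if the retry did not help


def fake_retry_llm(retry_prompt: str) -> str:
    """Stub for a real model, which would read the question out of the prompt."""
    return '{"action": "search", "action_input": "who is leo di caprios gf?"}'


bad_response = '{"action": "search"}'
result = parse_with_retry(bad_response, "who is leo di caprios gf?", parse_action, fake_retry_llm)
print(result)
# -> {'action': 'search', 'action_input': 'who is leo di caprios gf?'}
```

The only structural difference from a fix-the-output approach is the extra `prompt` argument, which is exactly what the optional "parse with prompt" method exists to carry.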

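VIII. Structured Output Parser

Use this output parser when you want the model to return multiple fields. The Pydantic/JSON parser is more powerful; this one came from earlier experiments with data structures that have only text fields.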

from langchain.output_parsers import StructuredOutputParser, ResponseSchema

from langchain.prompts import PromptTemplate, ChatPromptTemplate, HumanMessagePromptTemplate

from langchain.llms import OpenAI

from langchain.chat_models import ChatOpenAI

Here we define the response schema we want to receive.

response_schemas = [
    ResponseSchema(name="answer", description="answer to the user's question"),
    ResponseSchema(name="source", description="source used to answer the user's question, should be a website.")
]

output_parser = StructuredOutputParser.from_response_schemas(response_schemas)

We now get a string containing the instructions for how the response should be formatted, and insert it into our prompt.

format_instructions = output_parser.get_format_instructions()

prompt = PromptTemplate(
    template="answer the users question as best as possible.\n{format_instructions}\n{question}",
    input_variables=["question"],
    partial_variables={"format_instructions": format_instructions}
)

We can now use this to format a prompt, send it to the language model, and parse the returned result.

model = OpenAI(temperature=0)

_input = prompt.format_prompt(question="what's the capital of france?")
output = model(_input.to_string())

output_parser.parse(output)
# -> {'answer': 'Paris', 'source': 'https://www.worldatlas.com/articles/what-is-the-capital-of-france.html'}

Here is an example of using it with a chat model.

chat_model = ChatOpenAI(temperature=0)

prompt = ChatPromptTemplate(
    messages=[
        HumanMessagePromptTemplate.from_template("answer the users question as best as possible.\n{format_instructions}\n{question}")
    ],
    input_variables=["question"],
    partial_variables={"format_instructions": format_instructions}
)

_input = prompt.format_prompt(question="what's the capital of france?")
output = chat_model(_input.to_messages())

output_parser.parse(output.content)
# -> {'answer': 'Paris', 'source': 'https://en.wikipedia.org/wiki/Paris'}
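As a rough picture of the parsing step, the sketch below assumes the model wraps its answer in a markdown `json` code snippet and that every schema name must appear as a key. Both are assumptions made for illustration, and `parse_structured` is a hypothetical helper, not the library's code.

```python
import json
import re
from typing import Dict, List

FENCE = "`" * 3  # three backticks, built this way so the sample stays readable


def parse_structured(text: str, expected_keys: List[str]) -> Dict[str, str]:
    """Extract the JSON payload (fenced or bare) and check the expected keys exist."""
    pattern = FENCE + r"(?:json)?\s*(.*?)" + FENCE
    match = re.search(pattern, text, re.DOTALL)
    payload = match.group(1) if match else text  # fall back to the raw text
    data = json.loads(payload.strip())
    missing = [key for key in expected_keys if key not in data]
    if missing:
        raise ValueError(f"Response is missing keys: {missing}")
    return data


# Hypothetical model response in the assumed fenced-JSON shape.
response = (
    f'{FENCE}json\n'
    '{"answer": "Paris", "source": "https://en.wikipedia.org/wiki/Paris"}\n'
    f'{FENCE}'
)
print(parse_structured(response, ["answer", "source"]))
# -> {'answer': 'Paris', 'source': 'https://en.wikipedia.org/wiki/Paris'}
```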

2024-04-08 (Monday)