Link: https://spikingjelly.readthedocs.io/zh-cn/0.0.0.0.14/activation_based/lif_fc_mnist.html

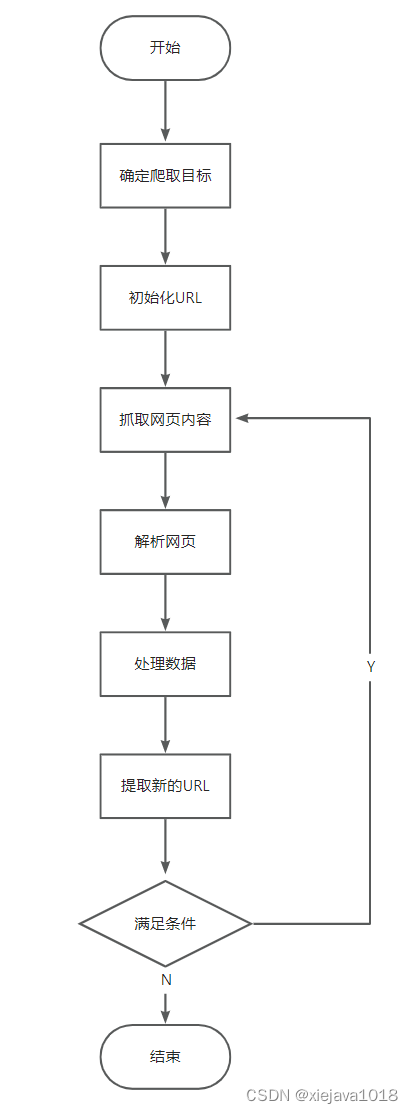

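The tutorial lists three key points for writing the training code:

The output of a spiking neuron is binary, and using the result of a single run directly for classification is highly susceptible to the noise introduced by the encoding. The output of a spiking network is therefore usually taken to be the firing frequency (or firing rate) of the output layer over a period of time, and the firing rate indicates how strongly the network responds to that class. The network must therefore run for a while, and the average firing rate after T time-steps is used as the basis for classification.

(My note: a single spike does not decide the class. The firing rate over a period of time is what represents the network's actual recognition result.)

The ideal result is that the correct neuron fires at the highest frequency while all other neurons stay silent. Cross-entropy loss or MSE loss is commonly used; here the tutorial uses MSE loss, which it reports works better in practice.

(My note: the MSE loss pushes the correct neuron to fire at the highest rate.)

After each network simulation finishes, the network state must be reset.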
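The first two points can be sketched without any framework. The snippet below is only an illustration on a hand-written toy spike train: the helper names `firing_rate`, `classify`, and `mse_loss` are my own and are not part of SpikingJelly. It averages the binary spikes over T time-steps, takes the neuron with the highest rate as the prediction, and computes the mean squared error against a one-hot target. State reset is not shown because this toy has no neuron state.

```python
def firing_rate(spike_trains):
    """spike_trains[t][i] is the 0/1 spike of output neuron i at time-step t."""
    T = len(spike_trains)
    n = len(spike_trains[0])
    return [sum(step[i] for step in spike_trains) / T for i in range(n)]


def classify(rates):
    """Index of the neuron with the highest firing rate."""
    return max(range(len(rates)), key=lambda i: rates[i])


def mse_loss(rates, label):
    """Mean squared error between the firing rates and a one-hot target."""
    one_hot = [1.0 if i == label else 0.0 for i in range(len(rates))]
    return sum((r - y) ** 2 for r, y in zip(rates, one_hot)) / len(rates)


# Toy example: 3 output neurons observed for T = 4 time-steps
spikes = [
    [0, 1, 0],
    [0, 1, 0],
    [1, 1, 0],
    [0, 0, 0],
]
rates = firing_rate(spikes)      # [0.25, 0.75, 0.0]
pred = classify(rates)           # neuron 1 fires most often
loss = mse_loss(rates, label=1)  # (0.25**2 + 0.25**2 + 0) / 3
```

Any single time-step alone could point at the wrong neuron (the third step fires both neuron 0 and neuron 1), which is why the decision is made on the averaged rate.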

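The tutorial also notes: because a Poisson encoder is used, T must be fairly large so that the noise introduced by the encoding stays small.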
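Why a larger T helps can be illustrated with a small simulation. This is a sketch under the assumption that the encoder emits a spike at each time-step with probability equal to the normalized pixel intensity; `poisson_encode`, `estimated_intensity`, and `std_error` are hypothetical helpers written for this note, not SpikingJelly functions.

```python
import math
import random


def poisson_encode(pixel, rng):
    """Emit 1 with probability `pixel` (an intensity in [0, 1]), otherwise 0."""
    return 1 if rng.random() < pixel else 0


def estimated_intensity(pixel, T, rng):
    """Average of T encoded spikes: a noisy estimate of the pixel intensity."""
    return sum(poisson_encode(pixel, rng) for _ in range(T)) / T


def std_error(pixel, T):
    """Standard deviation of that estimate; it shrinks like 1 / sqrt(T)."""
    return math.sqrt(pixel * (1.0 - pixel) / T)


rng = random.Random(0)
pixel = 0.3
for T in (10, 100, 10000):
    est = estimated_intensity(pixel, T, rng)
    print(f"T={T:>5}: estimate={est:.4f}, expected std={std_error(pixel, T):.4f}")
```

Going from T = 10 to T = 100 divides the expected noise by about 3.2 (the square root of 10), at the cost of ten times as many forward passes per batch.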

Training command:

python -m spikingjelly.activation_based.examples.lif_fc_mnist -tau 2.0 -T 100 -device cuda:0 -b 64 -epochs 50 -data-dir \mnist -amp -opt adam -lr 1e-3 -j 8

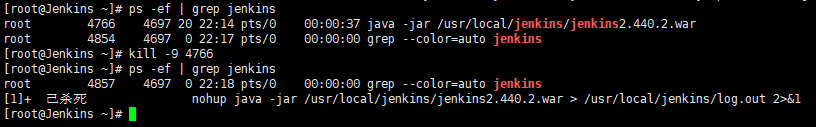

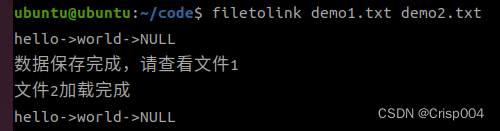

The run crashed, possibly because too many data-loading workers were started:

RuntimeError: DataLoader worker (pid(s) 12876, 3988, 18264, 8428, 15236, 11128) exited unexpectedly

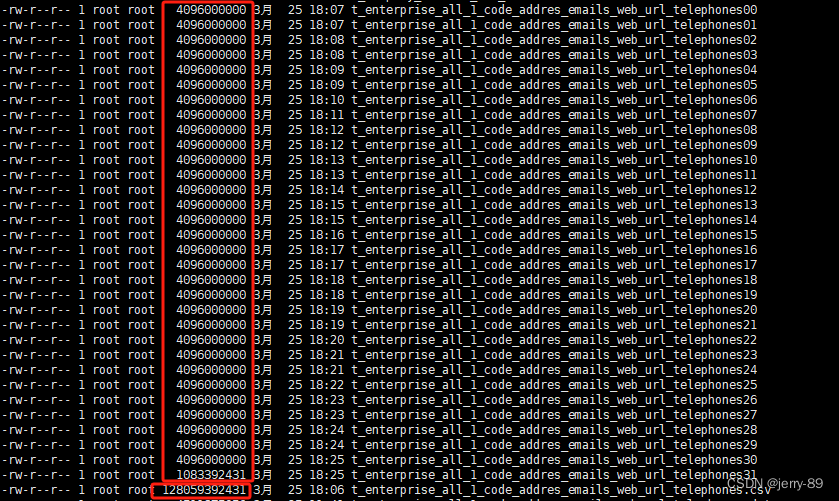

In the end I trained with -j 2. On a 1650 GPU, 50 epochs took 40 minutes.
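As a rough consistency check, the 40 minutes can be compared with the throughput printed in the final training log further down in these notes. The sketch assumes that the last-epoch speeds (1504.1307 and 2240.2271 images/s) held for all 50 epochs and ignores startup and checkpoint-saving overhead; the MNIST split sizes are the standard 60000/10000.

```python
# Figures taken from these notes: batch size 64, 50 epochs, last-epoch throughput
TRAIN_IMAGES, TEST_IMAGES = 60000, 10000        # standard MNIST split sizes
BATCH_SIZE, EPOCHS = 64, 50
TRAIN_SPEED, TEST_SPEED = 1504.1307, 2240.2271  # images per second

# drop_last=True discards the final incomplete training batch
train_seen = (TRAIN_IMAGES // BATCH_SIZE) * BATCH_SIZE
seconds_per_epoch = train_seen / TRAIN_SPEED + TEST_IMAGES / TEST_SPEED
total_minutes = EPOCHS * seconds_per_epoch / 60

print(f"{seconds_per_epoch:.1f} s per epoch, {total_minutes:.1f} min in total")
```

Under these assumptions the estimate comes out at roughly 37 minutes, which is in line with the 40 minutes observed.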

My own script can be run with:

python -m main -tau 2.0 -T 50 -device cuda:0 -b 64 -epochs 5 -data-dir \mnist -opt adam -lr 1e-3 -j 2

import os
import time
import argparse
import sys
import datetime

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.utils.data as data
from torch.cuda import amp
from torch.utils.tensorboard import SummaryWriter
import torchvision
import numpy as np

from spikingjelly.activation_based import neuron, encoding, functional, surrogate, layer


class SNN(nn.Module):
    def __init__(self, tau):
        super().__init__()

        self.layer = nn.Sequential(
            layer.Flatten(),
            layer.Linear(28 * 28, 10, bias=False),
            neuron.LIFNode(tau=tau, surrogate_function=surrogate.ATan()),
        )

    def forward(self, x: torch.Tensor):
        return self.layer(x)


def main():
    '''
    :return: None

    The network with FC-LIF structure for classifying MNIST.
    This function initializes the network, starts training and shows accuracy on the test dataset.
    '''
    parser = argparse.ArgumentParser(description='LIF MNIST Training')
    parser.add_argument('-T', default=100, type=int, help='simulating time-steps')
    parser.add_argument('-device', default='cuda:0', help='device')
    parser.add_argument('-b', default=64, type=int, help='batch size')
    parser.add_argument('-epochs', default=100, type=int, metavar='N',
                        help='number of total epochs to run')
    parser.add_argument('-j', default=4, type=int, metavar='N',
                        help='number of data loading workers (default: 4)')
    parser.add_argument('-data-dir', type=str, help='root dir of MNIST dataset')
    parser.add_argument('-out-dir', type=str, default='./logs', help='root dir for saving logs and checkpoint')
    parser.add_argument('-resume', type=str, help='resume from the checkpoint path')
    parser.add_argument('-amp', action='store_true', help='automatic mixed precision training')
    parser.add_argument('-opt', type=str, choices=['sgd', 'adam'], default='adam',
                        help='use which optimizer. SGD or Adam')
    parser.add_argument('-momentum', default=0.9, type=float, help='momentum for SGD')
    parser.add_argument('-lr', default=1e-3, type=float, help='learning rate')
    parser.add_argument('-tau', default=2.0, type=float, help='parameter tau of LIF neuron')

    args = parser.parse_args()
    print(args)

    net = SNN(tau=args.tau)
    print(net)
    net.to(args.device)

    # Initialize the data loaders
    train_dataset = torchvision.datasets.MNIST(
        root=args.data_dir,
        train=True,
        transform=torchvision.transforms.ToTensor(),
        download=True
    )
    test_dataset = torchvision.datasets.MNIST(
        root=args.data_dir,
        train=False,
        transform=torchvision.transforms.ToTensor(),
        download=True
    )

    train_data_loader = data.DataLoader(
        dataset=train_dataset,
        batch_size=args.b,
        shuffle=True,
        drop_last=True,
        num_workers=args.j,
        pin_memory=True
    )
    test_data_loader = data.DataLoader(
        dataset=test_dataset,
        batch_size=args.b,
        shuffle=False,
        drop_last=False,
        num_workers=args.j,
        pin_memory=True
    )

    scaler = None
    if args.amp:
        scaler = amp.GradScaler()

    start_epoch = 0
    max_test_acc = -1

    optimizer = None
    if args.opt == 'sgd':
        optimizer = torch.optim.SGD(net.parameters(), lr=args.lr, momentum=args.momentum)
    elif args.opt == 'adam':
        optimizer = torch.optim.Adam(net.parameters(), lr=args.lr)
    else:
        raise NotImplementedError(args.opt)

    if args.resume:
        checkpoint = torch.load(args.resume, map_location='cpu')
        net.load_state_dict(checkpoint['net'])
        optimizer.load_state_dict(checkpoint['optimizer'])
        start_epoch = checkpoint['epoch'] + 1
        max_test_acc = checkpoint['max_test_acc']

    out_dir = os.path.join(args.out_dir, f'T{args.T}_b{args.b}_{args.opt}_lr{args.lr}')

    if args.amp:
        out_dir += '_amp'

    if not os.path.exists(out_dir):
        os.makedirs(out_dir)
        print(f'Mkdir {out_dir}.')

    with open(os.path.join(out_dir, 'args.txt'), 'w', encoding='utf-8') as args_txt:
        args_txt.write(str(args))

    writer = SummaryWriter(out_dir, purge_step=start_epoch)
    with open(os.path.join(out_dir, 'args.txt'), 'w', encoding='utf-8') as args_txt:
        args_txt.write(str(args))
        args_txt.write('\n')
        args_txt.write(' '.join(sys.argv))

    encoder = encoding.PoissonEncoder()

    for epoch in range(start_epoch, args.epochs):
        start_time = time.time()
        net.train()
        train_loss = 0
        train_acc = 0
        train_samples = 0
        for img, label in train_data_loader:
            optimizer.zero_grad()
            img = img.to(args.device)
            label = label.to(args.device)
            label_onehot = F.one_hot(label, 10).float()

            if scaler is not None:
                with amp.autocast():
                    out_fr = 0.
                    for t in range(args.T):
                        encoded_img = encoder(img)
                        out_fr += net(encoded_img)
                    out_fr = out_fr / args.T
                    loss = F.mse_loss(out_fr, label_onehot)
                scaler.scale(loss).backward()
                scaler.step(optimizer)
                scaler.update()
            else:
                out_fr = 0.
                for t in range(args.T):
                    encoded_img = encoder(img)
                    out_fr += net(encoded_img)
                out_fr = out_fr / args.T
                loss = F.mse_loss(out_fr, label_onehot)
                loss.backward()
                optimizer.step()

            train_samples += label.numel()
            train_loss += loss.item() * label.numel()
            train_acc += (out_fr.argmax(1) == label).float().sum().item()

            functional.reset_net(net)

        train_time = time.time()
        train_speed = train_samples / (train_time - start_time)
        train_loss /= train_samples
        train_acc /= train_samples

        writer.add_scalar('train_loss', train_loss, epoch)
        writer.add_scalar('train_acc', train_acc, epoch)

        net.eval()
        test_loss = 0
        test_acc = 0
        test_samples = 0
        with torch.no_grad():
            for img, label in test_data_loader:
                img = img.to(args.device)
                label = label.to(args.device)
                label_onehot = F.one_hot(label, 10).float()
                out_fr = 0.
                for t in range(args.T):
                    encoded_img = encoder(img)
                    out_fr += net(encoded_img)
                out_fr = out_fr / args.T
                loss = F.mse_loss(out_fr, label_onehot)

                test_samples += label.numel()
                test_loss += loss.item() * label.numel()
                test_acc += (out_fr.argmax(1) == label).float().sum().item()
                functional.reset_net(net)
        test_time = time.time()
        test_speed = test_samples / (test_time - train_time)
        test_loss /= test_samples
        test_acc /= test_samples
        writer.add_scalar('test_loss', test_loss, epoch)
        writer.add_scalar('test_acc', test_acc, epoch)

        save_max = False
        if test_acc > max_test_acc:
            max_test_acc = test_acc
            save_max = True

        checkpoint = {
            'net': net.state_dict(),
            'optimizer': optimizer.state_dict(),
            'epoch': epoch,
            'max_test_acc': max_test_acc
        }

        if save_max:
            torch.save(checkpoint, os.path.join(out_dir, 'checkpoint_max.pth'))

        torch.save(checkpoint, os.path.join(out_dir, 'checkpoint_latest.pth'))

        print(args)
        print(out_dir)
        print(f'epoch ={epoch}, train_loss ={train_loss: .4f}, train_acc ={train_acc: .4f}, test_loss ={test_loss: .4f}, test_acc ={test_acc: .4f}, max_test_acc ={max_test_acc: .4f}')
        print(f'train speed ={train_speed: .4f} images/s, test speed ={test_speed: .4f} images/s')
        print(f'escape time = {(datetime.datetime.now() + datetime.timedelta(seconds=(time.time() - start_time) * (args.epochs - epoch))).strftime("%Y-%m-%d %H:%M:%S")}\n')

    # Save data for plotting
    net.eval()
    # Register the hook
    output_layer = net.layer[-1]  # output layer
    output_layer.v_seq = []
    output_layer.s_seq = []

    def save_hook(m, x, y):
        m.v_seq.append(m.v.unsqueeze(0))
        m.s_seq.append(y.unsqueeze(0))

    output_layer.register_forward_hook(save_hook)

    with torch.no_grad():
        img, label = test_dataset[0]
        img = img.to(args.device)
        out_fr = 0.
        for t in range(args.T):
            encoded_img = encoder(img)
            out_fr += net(encoded_img)
        out_spikes_counter_frequency = (out_fr / args.T).cpu().numpy()
        print(f'Firing rate: {out_spikes_counter_frequency}')

        output_layer.v_seq = torch.cat(output_layer.v_seq)
        output_layer.s_seq = torch.cat(output_layer.s_seq)
        v_t_array = output_layer.v_seq.cpu().numpy().squeeze()  # v_t_array[i][j] is the voltage of neuron i at time-step j
        np.save("v_t_array.npy", v_t_array)
        s_t_array = output_layer.s_seq.cpu().numpy().squeeze()  # s_t_array[i][j] is the spike (0 or 1) released by neuron i at time-step j
        np.save("s_t_array.npy", s_t_array)


if __name__ == '__main__':
    main()

Namespace(T=100, amp=True, b=64, data_dir='\\mnist', device='cuda:0', epochs=50, j=2, lr=0.001, momentum=0.9, opt='adam', out_dir='./logs', resume=None, tau=2.0)

./logs\T100_b64_adam_lr0.001_amp

epoch =49, train_loss = 0.0138, train_acc = 0.9324, test_loss = 0.0146, test_acc = 0.9269, max_test_acc = 0.9282

train speed = 1504.1307 images/s, test speed = 2240.2271 images/s

escape time = 2024-03-22 15:13:23

Firing rate: [[0. 0. 0. 0. 0. 0. 0. 1. 0. 0.]]

SpikingJelly package directory: C:\Users\wx\AppData\Local\Programs\Python\Python37\Lib\site-packages\spikingjelly\activation_based

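import torch
import torchvision
from spikingjelly.activation_based import encoding, functional

# `model` is assumed to be a trained SNN instance; T should match the value used for training
T = 100
encoder = encoding.PoissonEncoder()
transform = torchvision.transforms.ToTensor()

# Create the data loader
test_dataset = torchvision.datasets.MNIST(root='./data', train=False, download=True, transform=transform)
test_loader = torch.utils.data.DataLoader(test_dataset, batch_size=64, shuffle=False)

# Batch prediction
for imgs, labels in test_loader:
    # Batches are already [N, 1, 28, 28], so no extra unsqueeze is needed
    with torch.no_grad():
        out_fr = 0.
        for t in range(T):
            out_fr += model(encoder(imgs))  # accumulate output spikes over T time-steps
    functional.reset_net(model)  # reset the neuron state before the next batch
    predicted_labels = out_fr.argmax(dim=1)
    for i, label in enumerate(predicted_labels):
        print(f'Predicted label: {label.item()}, True label: {labels[i].item()}')

# Or take a single image from the MNIST test set
img, label = test_dataset[0]  # first image and its label
img = img.unsqueeze(0)  # add the batch dimension

# Model inference
with torch.no_grad():
    out_fr = 0.
    for t in range(T):
        out_fr += model(encoder(img))
functional.reset_net(model)

# Parse the result
predicted_label = out_fr.argmax(dim=1)
print(f'Predicted label: {predicted_label.item()}, True label: {label}')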

The main script used for training:

import os
import time
import argparse
import sys
import datetime

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.utils.data as data
from torch.cuda import amp
from torch.utils.tensorboard import SummaryWriter
import torchvision
import numpy as np

from spikingjelly.activation_based import neuron, encoding, functional, surrogate, layer


class SNN(nn.Module):
    def __init__(self, tau):
        super().__init__()

        self.layer = nn.Sequential(
            layer.Flatten(),
            layer.Linear(28 * 28, 20, bias=False),
            neuron.LIFNode(tau=tau, surrogate_function=surrogate.ATan()),
            layer.Linear(20, 10, bias=False),
            neuron.LIFNode(tau=tau, surrogate_function=surrogate.ATan()),
        )

    def forward(self, x: torch.Tensor):
        return self.layer(x)


def main():
    '''
    :return: None

    The network with FC-LIF structure for classifying MNIST.
    This function initializes the network, starts training and shows accuracy on the test dataset.
    '''
    parser = argparse.ArgumentParser(description='LIF MNIST Training')
    parser.add_argument('-T', default=100, type=int, help='simulating time-steps')
    parser.add_argument('-device', default='cuda:0', help='device')
    parser.add_argument('-b', default=64, type=int, help='batch size')
    parser.add_argument('-epochs', default=100, type=int, metavar='N',
                        help='number of total epochs to run')
    parser.add_argument('-j', default=4, type=int, metavar='N',
                        help='number of data loading workers (default: 4)')
    parser.add_argument('-data-dir', type=str, help='root dir of MNIST dataset')
    parser.add_argument('-out-dir', type=str, default='./logs', help='root dir for saving logs and checkpoint')
    parser.add_argument('-resume', type=str, help='resume from the checkpoint path')
    parser.add_argument('-amp', action='store_true', help='automatic mixed precision training')
    parser.add_argument('-opt', type=str, choices=['sgd', 'adam'], default='adam',
                        help='use which optimizer. SGD or Adam')
    parser.add_argument('-momentum', default=0.9, type=float, help='momentum for SGD')
    parser.add_argument('-lr', default=1e-3, type=float, help='learning rate')
    parser.add_argument('-tau', default=2.0, type=float, help='parameter tau of LIF neuron')

    args = parser.parse_args()
    print(args)

    net = SNN(tau=args.tau)
    print(net)
    net.to(args.device)

    # Initialize the data loaders
    train_dataset = torchvision.datasets.MNIST(
        root=args.data_dir,
        train=True,
        transform=torchvision.transforms.ToTensor(),
        download=True
    )
    test_dataset = torchvision.datasets.MNIST(
        root=args.data_dir,
        train=False,
        transform=torchvision.transforms.ToTensor(),
        download=True
    )

    train_data_loader = data.DataLoader(
        dataset=train_dataset,
        batch_size=args.b,
        shuffle=True,
        drop_last=True,
        num_workers=args.j,
        pin_memory=True
    )
    test_data_loader = data.DataLoader(
        dataset=test_dataset,
        batch_size=args.b,
        shuffle=False,
        drop_last=False,
        num_workers=args.j,
        pin_memory=True
    )

    scaler = None
    if args.amp:
        scaler = amp.GradScaler()

    start_epoch = 0
    max_test_acc = -1

    optimizer = None
    if args.opt == 'sgd':
        optimizer = torch.optim.SGD(net.parameters(), lr=args.lr, momentum=args.momentum)
    elif args.opt == 'adam':
        optimizer = torch.optim.Adam(net.parameters(), lr=args.lr)
    else:
        raise NotImplementedError(args.opt)

    if args.resume:
        checkpoint = torch.load(args.resume, map_location='cpu')
        net.load_state_dict(checkpoint['net'])
        optimizer.load_state_dict(checkpoint['optimizer'])
        start_epoch = checkpoint['epoch'] + 1
        max_test_acc = checkpoint['max_test_acc']

    out_dir = os.path.join(args.out_dir, f'T{args.T}_b{args.b}_{args.opt}_lr{args.lr}')

    if args.amp:
        out_dir += '_amp'  # marks whether mixed precision is used

    if not os.path.exists(out_dir):
        os.makedirs(out_dir)
        print(f'Mkdir {out_dir}.')

    with open(os.path.join(out_dir, 'args.txt'), 'w', encoding='utf-8') as args_txt:
        args_txt.write(str(args))

    writer = SummaryWriter(out_dir, purge_step=start_epoch)
    with open(os.path.join(out_dir, 'args.txt'), 'w', encoding='utf-8') as args_txt:
        args_txt.write(str(args))
        args_txt.write('\n')
        args_txt.write(' '.join(sys.argv))

    encoder = encoding.PoissonEncoder()

    for epoch in range(start_epoch, args.epochs):
        start_time = time.time()
        net.train()
        train_loss = 0
        train_acc = 0
        train_samples = 0
        for img, label in train_data_loader:
            optimizer.zero_grad()
            img = img.to(args.device)
            label = label.to(args.device)
            label_onehot = F.one_hot(label, 10).float()

            if scaler is not None:
                # Mixed-precision training
                with amp.autocast():
                    out_fr = 0.
                    for t in range(args.T):
                        # The image must be Poisson-encoded afresh at each of the T time-steps
                        encoded_img = encoder(img)
                        out_fr += net(encoded_img)
                    out_fr = out_fr / args.T
                    # out_fr is a tensor with shape [batch_size, 10]
                    # It records the firing rates of the 10 output neurons over the whole simulation
                    loss = F.mse_loss(out_fr, label_onehot)
                    # The loss is the MSE between the output firing rates and the true class
                    # With this loss, given label i, the firing rate of output neuron i
                    # approaches 1 while the firing rates of the other neurons approach 0
                scaler.scale(loss).backward()
                scaler.step(optimizer)
                scaler.update()
            else:
                out_fr = 0.
                for t in range(args.T):
                    # The image must be Poisson-encoded afresh at each of the T time-steps
                    encoded_img = encoder(img)
                    out_fr += net(encoded_img)
                out_fr = out_fr / args.T
                loss = F.mse_loss(out_fr, label_onehot)
                loss.backward()
                optimizer.step()

            train_samples += label.numel()
            train_loss += loss.item() * label.numel()
            # Accuracy: the index i of the output neuron with the highest firing rate
            # is taken as the classification result
            train_acc += (out_fr.argmax(1) == label).float().sum().item()

            # After each parameter update the network state must be reset,
            # because SNN neurons have "memory"
            functional.reset_net(net)

        train_time = time.time()
        train_speed = train_samples / (train_time - start_time)
        train_loss /= train_samples
        train_acc /= train_samples

        writer.add_scalar('train_loss', train_loss, epoch)
        writer.add_scalar('train_acc', train_acc, epoch)

        net.eval()
        test_loss = 0
        test_acc = 0
        test_samples = 0
        with torch.no_grad():
            for img, label in test_data_loader:
                img = img.to(args.device)
                label = label.to(args.device)
                label_onehot = F.one_hot(label, 10).float()
                out_fr = 0.
                for t in range(args.T):
                    encoded_img = encoder(img)
                    out_fr += net(encoded_img)
                out_fr = out_fr / args.T
                loss = F.mse_loss(out_fr, label_onehot)

                test_samples += label.numel()
                test_loss += loss.item() * label.numel()
                test_acc += (out_fr.argmax(1) == label).float().sum().item()
                functional.reset_net(net)
        test_time = time.time()
        test_speed = test_samples / (test_time - train_time)
        test_loss /= test_samples
        test_acc /= test_samples
        writer.add_scalar('test_loss', test_loss, epoch)
        writer.add_scalar('test_acc', test_acc, epoch)

        save_max = False
        if test_acc > max_test_acc:
            max_test_acc = test_acc
            save_max = True

        checkpoint = {
            'net': net.state_dict(),
            'optimizer': optimizer.state_dict(),
            'epoch': epoch,
            'max_test_acc': max_test_acc
        }

        if save_max:
            torch.save(checkpoint, os.path.join(out_dir, 'checkpoint_max.pth'))

        torch.save(checkpoint, os.path.join(out_dir, 'checkpoint_latest.pth'))

        print(args)
        print(out_dir)
        print(f'epoch ={epoch}, train_loss ={train_loss: .4f}, train_acc ={train_acc: .4f}, test_loss ={test_loss: .4f}, test_acc ={test_acc: .4f}, max_test_acc ={max_test_acc: .4f}')
        print(f'train speed ={train_speed: .4f} images/s, test speed ={test_speed: .4f} images/s')
        print(f'escape time = {(datetime.datetime.now() + datetime.timedelta(seconds=(time.time() - start_time) * (args.epochs - epoch))).strftime("%Y-%m-%d %H:%M:%S")}\n')

    # Save data for plotting
    net.eval()
    # Register the hook
    output_layer = net.layer[-1]  # output layer
    output_layer.v_seq = []
    output_layer.s_seq = []

    def save_hook(m, x, y):
        m.v_seq.append(m.v.unsqueeze(0))
        m.s_seq.append(y.unsqueeze(0))

    output_layer.register_forward_hook(save_hook)

    with torch.no_grad():
        # No gradients are needed for prediction
        img, label = test_dataset[0]
        img = img.to(args.device)
        out_fr = 0.
        for t in range(args.T):
            encoded_img = encoder(img)
            out_fr += net(encoded_img)
        out_spikes_counter_frequency = (out_fr / args.T).cpu().numpy()
        print(f'Firing rate: {out_spikes_counter_frequency}')

        output_layer.v_seq = torch.cat(output_layer.v_seq)
        output_layer.s_seq = torch.cat(output_layer.s_seq)
        v_t_array = output_layer.v_seq.cpu().numpy().squeeze()  # v_t_array[i][j] is the voltage of neuron i at time-step j
        np.save("v_t_array.npy", v_t_array)
        s_t_array = output_layer.s_seq.cpu().numpy().squeeze()  # s_t_array[i][j] is the spike (0 or 1) released by neuron i at time-step j
        np.save("s_t_array.npy", s_t_array)


if __name__ == '__main__':
    main()

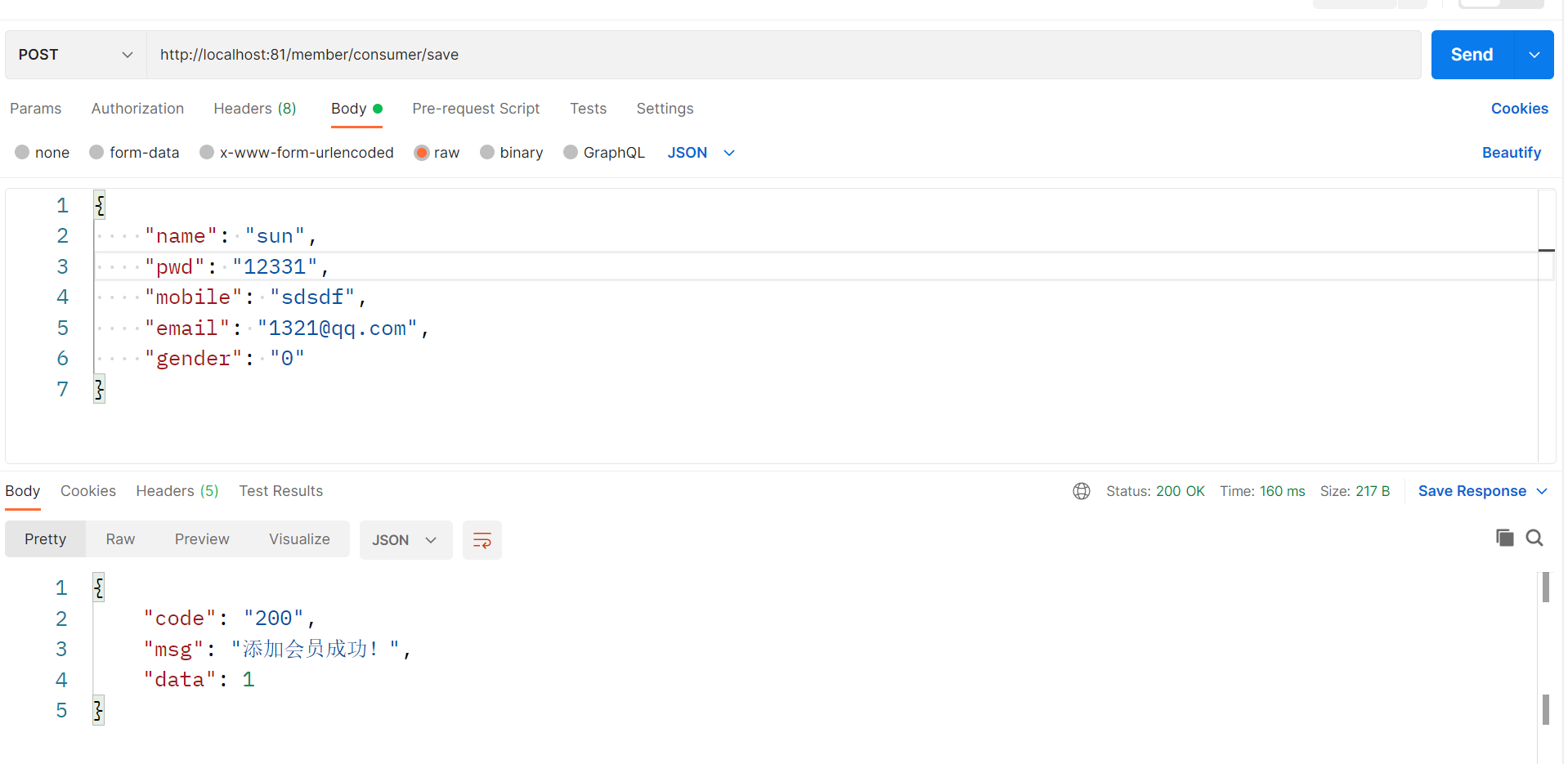

Namespace(T=50, amp=False, b=64, data_dir='\\mnist', device='cuda:0', epochs=5, j=2, lr=0.001, momentum=0.9, opt='adam', out_dir='./logs', resume=None, tau=2.0)

SNN(
  (layer): Sequential(
    (0): Flatten(start_dim=1, end_dim=-1, step_mode=s)
    (1): Linear(in_features=784, out_features=20, bias=False)
    (2): LIFNode(
      v_threshold=1.0, v_reset=0.0, detach_reset=False, step_mode=s, backend=torch, tau=2.0
      (surrogate_function): ATan(alpha=2.0, spiking=True)
    )
    (3): Linear(in_features=20, out_features=10, bias=False)
    (4): LIFNode(
      v_threshold=1.0, v_reset=0.0, detach_reset=False, step_mode=s, backend=torch, tau=2.0
      (surrogate_function): ATan(alpha=2.0, spiking=True)
    )
  )
)
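To get a feel for what the printed LIFNode parameters (v_threshold=1.0, v_reset=0.0, tau=2.0) mean, here is a minimal single-neuron sketch in plain Python. It assumes the discrete update H = V + (X - (V - V_reset)) / tau followed by a hard reset to V_reset on firing, which is my understanding of the default LIFNode behavior. The function `lif_step` is a hypothetical helper, and the ATan surrogate is not modeled because it only affects the backward pass.

```python
def lif_step(v, x, tau=2.0, v_threshold=1.0, v_reset=0.0):
    """One LIF time-step: charge, fire, hard reset. Returns (spike, new_v)."""
    h = v + (x - (v - v_reset)) / tau     # leaky integration of the input
    spike = 1 if h >= v_threshold else 0  # fire when the threshold is reached
    v_next = v_reset if spike else h      # hard reset after a spike
    return spike, v_next


v = 0.0
spikes = []
for _ in range(6):
    s, v = lif_step(v, x=1.5)  # constant input current of 1.5
    spikes.append(s)

rate = sum(spikes) / len(spikes)
print(spikes, rate)
```

With a constant input of 1.5 the membrane potential reaches 0.75 after the first step and 1.125 after the second, so the neuron fires on every second step and the firing rate is 0.5.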

Inspecting the checkpoint contents:

import torch

# Path to the model file
model_path = 'logs\\T50_b64_adam_lr0.001\\checkpoint_max.pth'

# Load the checkpoint
checkpoint = torch.load(model_path, map_location=torch.device('cpu'))

# Get the model state dict
model_state_dict = checkpoint['net']

# Print the name and size of each model parameter
for name, param in model_state_dict.items():
    print(f"{name}: {param.size()}")

Output:

layer.1.weight: torch.Size([20, 784])

layer.3.weight: torch.Size([10, 20])
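The two printed shapes are enough to work out the size of the model. A small arithmetic sketch, using only the shapes shown above (the dictionary is typed in by hand, not loaded from the checkpoint):

```python
# Weight shapes as printed from the checkpoint (both layers have bias=False)
shapes = {
    "layer.1.weight": (20, 784),  # 784 input pixels -> 20 hidden LIF neurons
    "layer.3.weight": (10, 20),   # 20 hidden neurons -> 10 output LIF neurons
}

param_counts = {name: rows * cols for name, (rows, cols) in shapes.items()}
total_params = sum(param_counts.values())

# For comparison: the single-layer network from the tutorial, 784 -> 10
single_layer_params = 28 * 28 * 10

print(param_counts, total_params, single_layer_params)
```

The two-layer network has 15680 + 200 = 15880 weights, about twice the 7840 weights of the tutorial's single-layer version.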

import torch

# Path to the model file
model_path = 'logs\\T50_b64_adam_lr0.001\\checkpoint_max.pth'

# Load the checkpoint
checkpoint = torch.load(model_path, map_location=torch.device('cpu'))

# Get the optimizer state dict (the output below is Adam's state,
# so it comes from checkpoint['optimizer'], not checkpoint['net'])
optimizer_state_dict = checkpoint['optimizer']

# Print each entry of the optimizer state dict
for name, param in optimizer_state_dict.items():
    print(f"{name}: {param}")

Output (the sixth row of the last exp_avg_sq tensor has only 14 values in my copy):

state: {0: {'step': 4685, 'exp_avg': tensor([
[-5.6052e-45, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
[-5.6052e-45, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
[-5.6052e-45, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
...,
[-5.6052e-45, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
[ 5.6052e-45, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
[-5.6052e-45, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00]]),
'exp_avg_sq': tensor([
[5.4887e-21, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
[6.9946e-18, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
[4.1606e-18, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
...,
[7.3014e-20, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
[3.1269e-22, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00],
[9.3442e-19, 0.0000e+00, 0.0000e+00, ..., 0.0000e+00, 0.0000e+00, 0.0000e+00]])},
1: {'step': 4685, 'exp_avg': tensor([
[ 5.7662e-05, 9.4302e-05, -2.2914e-05, 7.0126e-05, -8.0071e-06, -2.8472e-05, 3.8829e-05, 3.1861e-05, 2.0539e-06, 9.4337e-05, 1.0575e-04, 7.2576e-05, 2.5091e-05, 1.0423e-04, 6.8849e-05, -1.1465e-05, 6.4772e-06, 2.8661e-05, 1.0575e-04, -3.0879e-05],
[ 4.0370e-05, -2.5490e-05, 3.6750e-05, 1.0383e-04, 4.7854e-05, -1.0464e-04, -3.2048e-05, 7.4514e-05, -2.5424e-05, 2.4497e-05, 2.6645e-05, 8.5905e-05, 2.3912e-05, -1.6992e-05, -4.8906e-05, -8.5183e-06, 1.3556e-05, 8.9365e-05, 2.6645e-05, -3.4740e-05],
[ 7.1212e-05, 6.5498e-05, 6.8724e-05, -2.0907e-05, 9.8975e-05, 7.7583e-05, 4.7027e-05, -3.3001e-05, 4.0501e-05, 1.7189e-05, 6.2294e-05, 4.2236e-05, 6.3911e-05, -1.1380e-04, -7.9991e-05, 1.2999e-04, 4.3720e-05, 8.7419e-05, 6.2294e-05, 8.0482e-05],
[ 6.8617e-05, 1.7685e-05, 5.2521e-05, 1.2824e-04, 1.0524e-04, 8.6140e-05, -5.9074e-05, -7.5817e-05, -1.3166e-04, 4.3115e-05, 9.3121e-05, -4.5025e-05, 1.7442e-04, 6.6833e-05, -3.5082e-05, 2.0399e-05, 1.1166e-05, 1.0254e-04, 9.3121e-05, 2.6103e-05],
[-1.7059e-04, -1.2053e-04, 1.7854e-05, 4.8641e-05, 8.1662e-07, -7.4762e-06, 3.2949e-05, -1.8859e-04, -6.8189e-06, -8.8507e-05, -6.0311e-05, -4.3842e-05, -6.9384e-05, -7.4415e-05, -1.3574e-04, 1.1167e-04, -1.3956e-05, -1.0982e-04, -6.0311e-05, -1.1075e-04],
[ 1.4351e-04, 4.4203e-05, 3.0716e-05, -5.5875e-05, 5.4944e-05, -2.9494e-05, 6.8628e-05, 3.9529e-05, 1.1521e-04, 8.9715e-05, 1.1499e-04, 6.7075e-05, -1.2538e-05, 4.8699e-05, 5.7477e-06, 3.7231e-05, -6.4857e-05, 1.4535e-04, 1.1499e-04, 1.7541e-04],
[-5.7757e-07, -4.9825e-05, 1.3103e-05, -8.2301e-05, -3.4597e-05, -9.3941e-06, -1.4056e-04, -4.9424e-05, 4.5726e-07, 2.7036e-05, -3.4954e-05, -4.0704e-05, 1.8893e-05, -2.3781e-05, -1.9857e-06, -1.0109e-04, -2.3972e-05, -4.5446e-05, -3.4954e-05, 4.2763e-05],
[-9.2329e-06, -1.5213e-05, -3.0501e-05, -5.8427e-05, 4.2775e-05, -8.2380e-05, -6.7848e-05, 4.8413e-05, -2.6172e-05, 1.0499e-06, -4.1764e-05, -4.2690e-05, -4.5303e-05, 9.7521e-05, 3.7293e-05, -3.9277e-05, 5.1072e-06, -7.3620e-05, -4.1764e-05, -2.4857e-05],
[-2.5447e-04, -1.2637e-04, -2.1258e-05, -8.2895e-05, -2.5426e-04, 3.9588e-05, 5.9280e-05, -2.0705e-04, -2.0801e-05, -2.0385e-04, -1.2568e-04, -1.5306e-04, -2.1297e-04, -1.3012e-04, 9.3518e-06, -7.9763e-05, -2.9171e-05, -7.1757e-05, -1.2568e-04, -1.8414e-04],
[ 3.7567e-05, 1.4578e-05, 9.4128e-06, -1.0450e-04, 2.9321e-05, 2.7592e-05, 4.8101e-05, 2.0659e-04, 2.5532e-05, 2.2231e-06, 2.6372e-05, -3.6834e-05, 2.8753e-05, 1.6725e-05, 5.3136e-05, -3.9017e-05, 1.2868e-05, 6.5462e-05, 2.6372e-05, 5.5058e-05]]),
'exp_avg_sq': tensor([
[1.3675e-07, 2.8011e-07, 7.2356e-08, 3.1617e-07, 2.7788e-07, 1.8226e-07, 2.2574e-08, 4.3278e-08, 1.9314e-09, 2.7671e-07, 3.7019e-07, 3.2069e-07, 2.6228e-07, 2.9050e-07, 1.9381e-08, 3.1061e-07, 2.1207e-08, 1.7679e-07, 3.7083e-07, 4.9034e-08],
[2.6950e-07, 1.5704e-07, 8.8274e-08, 1.0955e-07, 2.3350e-07, 9.4974e-09, 1.3759e-07, 4.5118e-08, 1.4009e-08, 2.1749e-07, 2.7301e-07, 8.0331e-08, 1.7113e-07, 1.2220e-07, 7.9229e-09, 5.1897e-08, 8.1172e-09, 2.0456e-07, 2.7767e-07, 1.0314e-07],
[5.7079e-07, 2.7780e-07, 3.6102e-07, 6.1831e-07, 5.3581e-07, 1.1958e-07, 1.5398e-07, 1.0561e-07, 2.8518e-08, 3.4183e-07, 7.3553e-07, 6.2640e-07, 4.5611e-07, 2.7788e-07, 9.4152e-08, 4.3435e-07, 3.0073e-08, 5.5142e-07, 7.3612e-07, 1.0163e-07],
[7.7638e-07, 5.5413e-07, 4.1934e-07, 3.3837e-07, 7.1185e-07, 2.6715e-07, 5.7251e-08, 9.7615e-08, 1.0739e-07, 7.2413e-07, 8.4244e-07, 6.6952e-07, 6.8892e-07, 3.0747e-07, 2.2894e-08, 5.2541e-07, 9.1946e-08, 4.3397e-07, 8.4764e-07, 5.9303e-07],
[4.4186e-07, 4.5053e-07, 2.4454e-08, 2.6119e-07, 6.7852e-08, 2.3010e-08, 8.8053e-08, 1.7867e-07, 7.9515e-09, 2.5979e-07, 5.1960e-07, 4.2289e-07, 2.2301e-07, 4.6440e-07, 4.0014e-07, 1.3174e-07, 4.2379e-08, 4.5859e-07, 5.2457e-07, 2.0313e-07],
[6.5929e-07, 4.9643e-07, 7.8563e-08, 3.8194e-07, 7.1154e-07, 3.1450e-07, 6.7366e-08, 3.0115e-07, 4.0987e-08, 6.0577e-07, 8.4811e-07, 6.2267e-07, 8.4921e-07, 4.7289e-07],
[2.3674e-07, 2.7536e-07, 1.6511e-08, 3.0799e-07, 3.1392e-07, 7.4364e-08, 1.8492e-07, 1.5607e-07, 1.9890e-09, 9.1872e-08, 4.2780e-07, 3.7048e-07, 2.7426e-07, 3.4437e-07, 4.3100e-08, 3.2461e-07, 1.2477e-07, 2.6438e-07, 4.2998e-07, 4.7953e-08],
[5.3036e-07, 3.5261e-07, 2.7199e-07, 2.7307e-07, 9.1074e-08, 1.5513e-07, 2.8967e-08, 4.5211e-08, 6.9567e-09, 4.8964e-07, 5.5741e-07, 2.5242e-07, 1.7199e-07, 3.2779e-07, 1.5638e-07, 7.4442e-08, 4.5998e-08, 4.2168e-07, 5.6360e-07, 3.5032e-07],
[8.5069e-07, 6.5163e-07, 2.1127e-07, 5.9774e-07, 8.3511e-07, 8.2319e-08, 1.2034e-07, 2.7492e-07, 1.8977e-08, 7.6464e-07, 9.5883e-07, 7.2260e-07, 7.9380e-07, 6.1829e-07, 3.1071e-08, 3.6084e-07, 9.4017e-08, 7.5522e-07, 9.6783e-07, 4.4963e-07],
[7.6685e-07, 6.9857e-07, 1.6338e-07, 2.9518e-07, 1.2664e-07, 1.0680e-07, 1.8672e-08, 1.4522e-07, 1.7957e-08, 6.4371e-07, 8.0184e-07, 5.5970e-07, 4.4589e-07, 7.0233e-07, 3.6082e-07, 7.5321e-08, 1.2476e-07, 6.5821e-07, 8.0971e-07, 4.8287e-07]])}}
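The fields in this dump are Adam's per-parameter state: `step` counts optimizer updates, while `exp_avg` and `exp_avg_sq` are running averages of the gradient and of the squared gradient. The sketch below is plain Python rather than PyTorch code. It assumes the default `torch.optim.Adam` coefficients (0.9 and 0.999) and the standard 60000-image MNIST training set; the function `adam_moments` is a hypothetical helper for a single scalar.

```python
def adam_moments(exp_avg, exp_avg_sq, grad, beta1=0.9, beta2=0.999):
    """Update Adam's running first and second moment estimates for one scalar."""
    exp_avg = beta1 * exp_avg + (1 - beta1) * grad
    exp_avg_sq = beta2 * exp_avg_sq + (1 - beta2) * grad * grad
    return exp_avg, exp_avg_sq


# Step count implied by the run: 5 epochs, batch size 64, drop_last=True
batches_per_epoch = 60000 // 64      # only full batches are used
total_steps = 5 * batches_per_epoch  # should match 'step' in the dump

# One update starting from zero state with a gradient of 1.0
m, v = adam_moments(0.0, 0.0, grad=1.0)
print(total_steps, m, v)
```

The product 5 x 937 = 4685 matches the `step` value above, which suggests the dump comes from the 5-epoch run with T=50.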