Image search on professional design-asset websites:

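Many design-asset websites for feature walls, wallpaper, TV walls, and interior decoration offer search by image as standard. The benefits of reverse image search are self-evident, and it has become an essential feature for asset sites, image sites, 3D-model sites, and the like.

We recommend imgso, a dedicated reverse image search system that gives your website on-site image search and recognition for its design assets.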

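Reverse image search is now in very wide use and is a highly practical tool. Compared with keyword search, searching by image is more convenient, particularly when the features you are looking for are hard to describe in words. That is where image search really shows its strength.

imgso uses AI-based image search built on neural-network learning, along with a rich set of other features: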

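1. Search by dragging and dropping a local image

2. Search by pasting an online image URL

3. Search by pasting a screenshot

4. Search by uploading a local image

5. Search by a cropped region of an image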
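Item 5, crop search, amounts to cutting a region out of the query image and searching with that region alone. The post doesn't show how imgso does it; the sketch below assumes images are NumPy arrays of shape (height, width, channels) and that the user's selection arrives as a `(top, left, height, width)` box in pixels. `crop_img` and the box format are hypothetical.

```python
import numpy as np


def crop_img(img, box):
    """Return the part of img inside box = (top, left, height, width).

    Hypothetical helper: the box is clipped to the image bounds, so a
    selection dragged past the edge still yields a valid crop.
    """
    top, left, height, width = box
    h, w = img.shape[:2]
    # Compute the far edges from the unclipped box, then clip both ends
    bottom, right = min(h, top + height), min(w, left + width)
    top, left = max(0, top), max(0, left)
    if bottom <= top or right <= left:
        raise ValueError("crop box does not overlap the image")
    return img[top:bottom, left:right]
```

The cropped array would then go through the same resize and normalization steps as a full image before searching.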

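These are all must-have features for an image search site. The imgso plugin also adds enhanced features:

Login-only search: when enabled, users must log in before they can search.

Category search: when enabled, search results show only assets in the selected category.

...and more. Try it yourself to see the full range of settings.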
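The post doesn't say how imgso implements category search. One simple way to get the described behaviour is to rank all assets by similarity first and only then keep those in the chosen category, so the user still gets k results when enough matching assets exist. The sketch assumes an already-ranked list of asset IDs and a `category_of` dictionary from asset ID to category; `filter_by_category` and that data model are hypothetical.

```python
def filter_by_category(ranked_ids, category_of, category, k=10):
    """Return up to k asset IDs from ranked_ids whose category matches.

    ranked_ids: asset IDs sorted from most to least similar.
    category_of: dict mapping asset ID -> category (hypothetical data model).
    Filtering happens before truncating to k, so matching assets ranked
    below position k are not lost.
    """
    if k <= 0:
        return []
    results = []
    for asset_id in ranked_ids:
        if category_of.get(asset_id) == category:
            results.append(asset_id)
            if len(results) == k:
                break
    return results
```

Filtering after taking the top k instead would often return fewer than k assets, which is why the order of the two steps matters here.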

If you need the imgso reverse image search system, contact customer service through the demo site below.

Demo: http://www.sjoneone.com

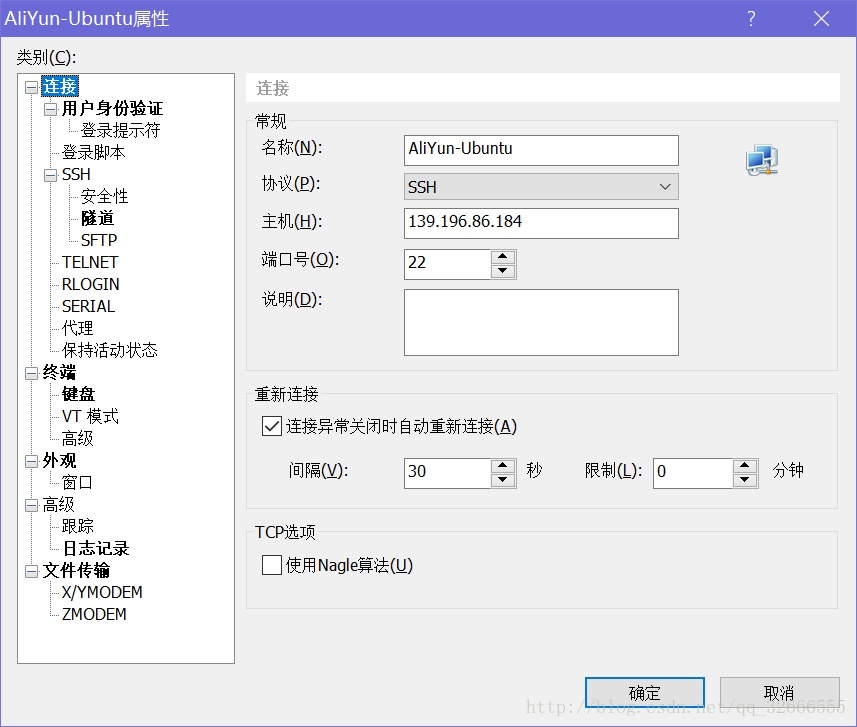

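imgso is a professional WordPress plugin that works as soon as it is uploaded. To use it with another system, please contact customer service.

Sample imgso search results: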

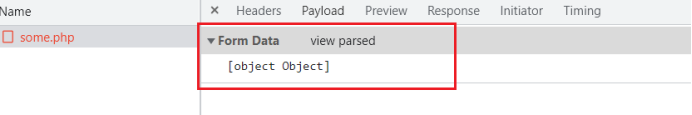
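The post doesn't explain how imgso orders results like these. A common approach, and the one the autoencoder code below points toward (its `predict` returns the encoder output), is to turn every image into a feature vector and return the catalogue images whose vectors lie nearest to the query's. This brute-force NumPy sketch uses Euclidean distance; `knn_search` is a hypothetical name, and this is not confirmed as imgso's method.

```python
import numpy as np


def knn_search(query_vec, db_vecs, k=5):
    """Return (indices, distances) of the k database vectors nearest to query_vec.

    query_vec: one feature vector (any shape, flattened here).
    db_vecs: N feature vectors; each row is flattened, so encoder outputs of
    shape (N, h, w, c) work as well as (N, d).
    """
    query_vec = np.asarray(query_vec, dtype=float).ravel()
    db_vecs = np.asarray(db_vecs, dtype=float)
    db_vecs = db_vecs.reshape(len(db_vecs), -1)
    # Euclidean distance from the query to every stored vector
    dists = np.linalg.norm(db_vecs - query_vec, axis=1)
    k = min(k, len(dists))
    order = np.argsort(dists, kind="stable")[:k]
    return order, dists[order]
```

Brute force is fine for a modest catalogue; a large one would typically use a nearest-neighbour index instead of comparing against every vector.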

Part of the underlying code:

```python
# Reverse image search: image data preprocessing logic
from multiprocessing import Pool

from skimage.transform import resize


# Apply a transformation to multiple images, optionally in parallel.
# With parallel=True the transformer must be picklable (a module-level
# function or a functools.partial of one, not a lambda).
def apply_transformer(imgs, transformer, parallel=True):
    if parallel:
        with Pool() as pool:
            imgs_transform = pool.map(transformer, list(imgs))
    else:
        imgs_transform = [transformer(img) for img in imgs]
    return imgs_transform


# Normalize image data [0, 255] -> [0.0, 1.0]
def normalize_img(img):
    return img / 255.0


# Resize an image to shape_resized, keeping the original value range
def resize_img(img, shape_resized):
    img_resized = resize(img, shape_resized,
                         anti_aliasing=True,
                         preserve_range=True)
    assert img_resized.shape == shape_resized
    return img_resized


# Flatten an image into a 1-D vector (row-major order)
def flatten_img(img):
    return img.flatten("C")
```

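The preprocessing functions above are meant to be chained and run over a whole image set with `apply_transformer`. Because `Pool.map` pickles the transformer to send it to worker processes, a lambda will not work; a `functools.partial` over module-level functions will. This sketch re-declares `normalize_img`, `flatten_img` and `apply_transformer` so it runs on its own (leaving out `resize_img` to avoid the scikit-image dependency); `run_pipeline` and `preprocess` are hypothetical names.

```python
from functools import partial
from multiprocessing import Pool

import numpy as np


def normalize_img(img):
    return img / 255.0


def flatten_img(img):
    return img.flatten("C")


def run_pipeline(img, steps):
    """Apply each transformation in steps to img, in order."""
    for step in steps:
        img = step(img)
    return img


def apply_transformer(imgs, transformer, parallel=True):
    if parallel:
        with Pool() as pool:
            return pool.map(transformer, list(imgs))
    return [transformer(img) for img in imgs]


# partial() of module-level functions can be pickled and sent to worker
# processes; a lambda cannot, so Pool.map would fail with one.
preprocess = partial(run_pipeline, steps=(normalize_img, flatten_img))
```

Call `apply_transformer(imgs, preprocess)` from under `if __name__ == "__main__":`, since on platforms that start workers with the spawn method each worker re-imports the script.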
```python
import numpy as np
import tensorflow as tf

from src.utils import split  # project-local helper, not shown in the post


class AutoEncoder():

    def __init__(self, modelName, info):
        self.modelName = modelName  # "simpleAE" or "convAE"
        self.info = info            # dict with "shape_img" and model file paths
        self.autoencoder = None
        self.encoder = None
        self.decoder = None

    # Train: 90/10 train/validation split; the target is the input itself
    def fit(self, X, n_epochs=50, batch_size=256):
        indices_fracs = split(fracs=[0.9, 0.1], N=len(X), seed=0)
        X_train, X_valid = X[indices_fracs[0]], X[indices_fracs[1]]
        self.autoencoder.fit(X_train, X_train,
                             epochs=n_epochs,
                             batch_size=batch_size,
                             shuffle=True,
                             validation_data=(X_valid, X_valid))

    # Inference: return the encoder output (the image embedding)
    def predict(self, X):
        return self.encoder.predict(X)

    # Set neural network architecture
    def set_arch(self):
        shape_img = self.info["shape_img"]
        shape_img_flattened = (int(np.prod(shape_img)),)

        # Set encoder and decoder graphs
        if self.modelName == "simpleAE":
            encode_dim = 128
            inputs = tf.keras.Input(shape=shape_img_flattened)
            encoded = tf.keras.layers.Dense(encode_dim, activation='relu')(inputs)
            decoded = tf.keras.layers.Dense(shape_img_flattened[0], activation='sigmoid')(encoded)

        elif self.modelName == "convAE":
            n_hidden_1, n_hidden_2, n_hidden_3 = 16, 8, 8
            convkernel = (3, 3)  # convolution kernel
            poolkernel = (2, 2)  # pooling kernel
            inputs = tf.keras.layers.Input(shape=shape_img)
            x = tf.keras.layers.Conv2D(n_hidden_1, convkernel, activation='relu', padding='same')(inputs)
            x = tf.keras.layers.MaxPooling2D(poolkernel, padding='same')(x)
            x = tf.keras.layers.Conv2D(n_hidden_2, convkernel, activation='relu', padding='same')(x)
            x = tf.keras.layers.MaxPooling2D(poolkernel, padding='same')(x)
            x = tf.keras.layers.Conv2D(n_hidden_3, convkernel, activation='relu', padding='same')(x)
            encoded = tf.keras.layers.MaxPooling2D(poolkernel, padding='same')(x)

            x = tf.keras.layers.Conv2D(n_hidden_3, convkernel, activation='relu', padding='same')(encoded)
            x = tf.keras.layers.UpSampling2D(poolkernel)(x)
            x = tf.keras.layers.Conv2D(n_hidden_2, convkernel, activation='relu', padding='same')(x)
            x = tf.keras.layers.UpSampling2D(poolkernel)(x)
            # No padding here on purpose: e.g. a 100x100 input encodes to 13x13,
            # upsampling gives 26 then 52, this 'valid' 3x3 conv trims 52 to 50,
            # and the final upsampling restores 100
            x = tf.keras.layers.Conv2D(n_hidden_1, convkernel, activation='relu')(x)
            x = tf.keras.layers.UpSampling2D(poolkernel)(x)
            decoded = tf.keras.layers.Conv2D(shape_img[2], convkernel, activation='sigmoid', padding='same')(x)

        else:
            raise ValueError("Invalid model name given!")

        # Create autoencoder and encoder models (they share layers)
        autoencoder = tf.keras.Model(inputs, decoded)
        encoder = tf.keras.Model(inputs, encoded)

        # Create decoder model by reapplying the autoencoder's decoding layers
        decoded_input = tf.keras.Input(shape=tuple(encoded.shape[1:]))
        if self.modelName == 'simpleAE':
            decoded_output = autoencoder.layers[-1](decoded_input)  # single Dense layer
        else:
            # Last 7 layers: Conv2D, UpSampling2D, Conv2D, UpSampling2D,
            # Conv2D, UpSampling2D, Conv2D
            decoded_output = decoded_input
            for layer in autoencoder.layers[-7:]:
                decoded_output = layer(decoded_output)
        decoder = tf.keras.Model(decoded_input, decoded_output)

        # Print summaries (summary() prints directly and returns None)
        for name, model in [("autoencoder", autoencoder), ("encoder", encoder), ("decoder", decoder)]:
            print(f"\n{name}.summary():")
            model.summary()

        # Assign models
        self.autoencoder = autoencoder
        self.encoder = encoder
        self.decoder = decoder

    # Compile
    def compile(self, loss="binary_crossentropy", optimizer="adam"):
        self.autoencoder.compile(optimizer=optimizer, loss=loss)

    # Load model architecture and weights
    def load_models(self, loss="binary_crossentropy", optimizer="adam"):
        print("Loading models...")
        self.autoencoder = tf.keras.models.load_model(self.info["autoencoderFile"])
        self.encoder = tf.keras.models.load_model(self.info["encoderFile"])
        self.decoder = tf.keras.models.load_model(self.info["decoderFile"])
        self.autoencoder.compile(optimizer=optimizer, loss=loss)
        self.encoder.compile(optimizer=optimizer, loss=loss)
        self.decoder.compile(optimizer=optimizer, loss=loss)

    # Save model architecture and weights to file
    def save_models(self):
        print("Saving models...")
        self.autoencoder.save(self.info["autoencoderFile"])
        self.encoder.save(self.info["encoderFile"])
        self.decoder.save(self.info["decoderFile"])
```
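`fit` imports `split` from the project's `src.utils`, which the post does not include. From the way it is called, it takes a list of fractions, a sample count `N` and a seed, and returns one index array per fraction. The following is a hypothetical stand-in under that assumption (a seeded random permutation cut into consecutive chunks), not the original helper.

```python
import numpy as np


def split(fracs, N, seed):
    """Hypothetical stand-in for src.utils.split.

    Shuffles range(N) with a fixed seed and cuts it into consecutive chunks
    whose sizes follow fracs (which must sum to 1). Returns a list of
    integer index arrays, one per fraction, covering every index once.
    """
    fracs = np.asarray(fracs, dtype=float)
    if np.any(fracs < 0) or not np.isclose(fracs.sum(), 1.0):
        raise ValueError("fracs must be non-negative and sum to 1")
    rng = np.random.default_rng(seed)
    perm = rng.permutation(N)
    # Cumulative cut points; forcing the last one to N keeps sizes summing to N
    bounds = np.round(np.cumsum(fracs) * N).astype(int)
    bounds[-1] = N
    return np.split(perm, bounds[:-1])
```

With it, `X[indices_fracs[0]]` in `fit` selects the roughly 90% of rows used for training, and the fixed seed makes the split reproducible between runs.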