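The job site used in this article is Jobui (职友集, www.jobui.com); other job sites work in much the same way. Using it as an example, this article gives a brief introduction to the Scrapy framework.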

1. pip install Scrapy

This step needs little explanation: Python and pip must of course already be set up.

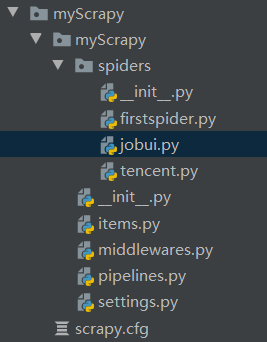

2. scrapy startproject myScrapy

This creates a project with the custom name myScrapy.

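3. scrapy genspider jobui jobui.com

Run this from the root directory of the project you just created (this is important!). It creates a spider named jobui and restricts its crawl scope to 'jobui.com'. The quotes are not needed on the command line; as you will see shortly, the generated spider file adds them automatically.

A spider named jobui has now been created (the files listed above and below it are two other spiders).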
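Since genspider and crawl only work from inside a project, it can be handy to check where you are before running them. Below is a minimal sketch, assuming the standard layout in which scrapy startproject places a scrapy.cfg file in the project root. The helper name find_project_root is hypothetical and is not part of Scrapy.

```python
from pathlib import Path
from typing import Optional


def find_project_root(start: Path) -> Optional[Path]:
    """Walk upward from `start` until a directory containing scrapy.cfg is found."""
    current = start.resolve()
    for candidate in (current, *current.parents):
        if (candidate / "scrapy.cfg").is_file():
            return candidate
    return None  # no scrapy.cfg anywhere above `start`


if __name__ == "__main__":
    root = find_project_root(Path.cwd())
    if root is None:
        print("Not inside a Scrapy project: change into the myScrapy directory first.")
    else:
        print(f"Scrapy project root: {root}")
```

If the script reports that you are not inside a project, cd into myScrapy before running scrapy genspider or scrapy crawl.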

4. The jobui.py file

    # -*- coding: utf-8 -*-
    import scrapy


    class JobuiSpider(scrapy.Spider):
        name = 'jobui'
        allowed_domains = ['jobui.com']
        start_urls = ['https://www.jobui.com/jobs?jobKw=%E7%88%AC%E8%99%AB&cityKw=%E6%9D%AD%E5%B7%9E&sortField=last']

        def parse(self, response):
            # Each matched node is treated as one job posting
            job_list = response.xpath("//div[@class='c-job-list']")
            for job in job_list:
                item = {}  # named `item` rather than `dict` to avoid shadowing the built-in
                item['title'] = job.xpath('./div[2]/div[1]/div[1]//h3/text()').extract_first()
                item['salary'] = job.xpath('./div[2]/div[1]/div[2]//span[3]/text()').extract_first()
                item['company'] = job.xpath('./div[2]/div[1]/div[3]/a/text()').extract_first()
                yield item

            # "下一页" is the text of the site's "next page" link
            next_url = response.xpath('//a[text()="下一页"]/@href').extract_first()
            if next_url is not None:
                # Resolve the href against the current page URL
                next_url = response.urljoin(next_url)
                # print(next_url)
                yield scrapy.Request(next_url, callback=self.parse)
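A note on the next-page link: prefixing the site root to the href by hand is fragile, because the result depends on whether the href starts with a slash. The sketch below compares plain concatenation with urllib.parse.urljoin from the standard library. Both the page URL and the href are made-up placeholders, not values taken from the site.

```python
from urllib.parse import urljoin

# Hypothetical values, for illustration only
current_page = "https://www.jobui.com/jobs?jobKw=python"
href = "/jobs?jobKw=python&n=2"

naive = "https://www.jobui.com/" + href   # plain string concatenation
joined = urljoin(current_page, href)      # resolves href against the current page

print(naive)   # https://www.jobui.com//jobs?jobKw=python&n=2  (double slash)
print(joined)  # https://www.jobui.com/jobs?jobKw=python&n=2
```

Inside a spider, response.urljoin(href) performs the same kind of resolution against the URL of the page being parsed.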

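The parts you have to write yourself are start_urls and the parse function below it. start_urls holds the URL of the first page of search results.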
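The percent-encoded pieces of that URL are simply the UTF-8 encoded search keyword (爬虫, "crawler") and city (杭州, Hangzhou). As a small sketch, the same URL can be rebuilt with urllib.parse.urlencode. The parameter names jobKw, cityKw and sortField come from the URL above; the helper build_start_url is a hypothetical name, and what other values sortField accepts is not something this article covers.

```python
from urllib.parse import urlencode

BASE_URL = "https://www.jobui.com/jobs"


def build_start_url(keyword: str, city: str, sort_field: str = "last") -> str:
    """Build a search URL; urlencode percent-encodes non-ASCII text as UTF-8."""
    query = urlencode({"jobKw": keyword, "cityKw": city, "sortField": sort_field})
    return f"{BASE_URL}?{query}"


start_url = build_start_url("爬虫", "杭州")
print(start_url)
```

Changing the keyword or city then only means changing the arguments, rather than editing the encoded string by hand.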

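Note that each object parse yields must be a Request, an Item, or a dict. So you cannot, out of habit, build a list, append a whole page of dicts to it, and hand that list to the pipeline.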
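The pattern is easier to see without the framework. Here is a minimal, Scrapy-free sketch of "yield one dict per job" instead of "return one list per page". The function parse_page and the sample rows are placeholders invented for illustration, not data scraped from the site.

```python
from typing import Dict, Iterator, List, Tuple

Row = Tuple[str, str, str]  # (title, salary, company)


def parse_page(rows: List[Row]) -> Iterator[Dict[str, str]]:
    """Yield one dict per job, the way a spider callback yields items."""
    for title, salary, company in rows:
        yield {"title": title, "salary": salary, "company": company}


# Placeholder rows, for illustration only
sample_rows = [
    ("Crawler Engineer", "10-15K", "Company A"),
    ("Data Engineer", "15-20K", "Company B"),
]

for item in parse_page(sample_rows):
    # In Scrapy, each yielded dict is passed to the pipeline on its own
    print(item)
```

Scrapy consumes the generator in the same way as the for loop here: every dict arrives at the pipeline's process_item individually, so no intermediate list is needed.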

5. The pipelines.py file

    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html


    class MyscrapyPipeline(object):
        def process_item(self, item, spider):
            print(item)
            return item

This file is where you write the code that saves the data. That part is left out here, and each item is simply printed.
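For readers who do want to save the data, here is one possible sketch of a pipeline that writes each item to a JSON Lines file. It is not the author's code. The class name JsonLinesPipeline and the default file name jobs.jl are assumptions made for this example; open_spider, close_spider and process_item are the hook methods Scrapy calls on a pipeline.

```python
import json


class JsonLinesPipeline:
    """Write each scraped item to a file, one JSON object per line."""

    def __init__(self, path="jobs.jl"):  # hypothetical default file name
        self.path = path
        self.file = None

    def open_spider(self, spider):
        # Called once when the spider starts
        self.file = open(self.path, "w", encoding="utf-8")

    def close_spider(self, spider):
        # Called once when the spider finishes
        self.file.close()

    def process_item(self, item, spider):
        # ensure_ascii=False keeps Chinese text readable in the output file
        self.file.write(json.dumps(dict(item), ensure_ascii=False) + "\n")
        return item
```

To use a class like this, it would also have to be registered under ITEM_PIPELINES in the settings file, for example as 'myScrapy.pipelines.JsonLinesPipeline': 300.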

6. The settings.py file

    # -*- coding: utf-8 -*-

    # Scrapy settings for myScrapy project
    #
    # For simplicity, this file contains only settings considered important or
    # commonly used. You can find more settings consulting the documentation:
    #
    #     https://docs.scrapy.org/en/latest/topics/settings.html
    #     https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
    #     https://docs.scrapy.org/en/latest/topics/spider-middleware.html

    BOT_NAME = 'myScrapy'

    SPIDER_MODULES = ['myScrapy.spiders']
    NEWSPIDER_MODULE = 'myScrapy.spiders'

    # Crawl responsibly by identifying yourself (and your website) on the user-agent
    #USER_AGENT = 'myScrapy (+http://www.yourdomain.com)'
    # Must be assigned with "=": written with ":" the line is only an annotation and sets nothing
    USER_AGENT = 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.122 Safari/537.36'

    # Obey robots.txt rules
    ROBOTSTXT_OBEY = True

    # Configure maximum concurrent requests performed by Scrapy (default: 16)
    #CONCURRENT_REQUESTS = 32

    # Configure a delay for requests for the same website (default: 0)
    # See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay
    # See also autothrottle settings and docs
    #DOWNLOAD_DELAY = 3
    # The download delay setting will honor only one of:
    #CONCURRENT_REQUESTS_PER_DOMAIN = 16
    #CONCURRENT_REQUESTS_PER_IP = 16

    # Disable cookies (enabled by default)
    #COOKIES_ENABLED = False

    # Disable Telnet Console (enabled by default)
    #TELNETCONSOLE_ENABLED = False

    # Override the default request headers:
    #DEFAULT_REQUEST_HEADERS = {
    #   'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
    #   'Accept-Language': 'en',
    #}

    # Enable or disable spider middlewares
    # See https://docs.scrapy.org/en/latest/topics/spider-middleware.html
    #SPIDER_MIDDLEWARES = {
    #    'myScrapy.middlewares.MyscrapySpiderMiddleware': 543,
    #}

    # Enable or disable downloader middlewares
    # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html
    #DOWNLOADER_MIDDLEWARES = {
    #    'myScrapy.middlewares.MyscrapyDownloaderMiddleware': 543,
    #}

    # Enable or disable extensions
    # See https://docs.scrapy.org/en/latest/topics/extensions.html
    #EXTENSIONS = {
    #    'scrapy.extensions.telnet.TelnetConsole': None,
    #}

    # Configure item pipelines
    # See https://docs.scrapy.org/en/latest/topics/item-pipeline.html
    ITEM_PIPELINES = {
        'myScrapy.pipelines.MyscrapyPipeline': 300,
    }

    # Enable and configure the AutoThrottle extension (disabled by default)
    # See https://docs.scrapy.org/en/latest/topics/autothrottle.html
    #AUTOTHROTTLE_ENABLED = True
    # The initial download delay
    #AUTOTHROTTLE_START_DELAY = 5
    # The maximum download delay to be set in case of high latencies
    #AUTOTHROTTLE_MAX_DELAY = 60
    # The average number of requests Scrapy should be sending in parallel to
    # each remote server
    #AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
    # Enable showing throttling stats for every response received:
    #AUTOTHROTTLE_DEBUG = False

    # Enable and configure HTTP caching (disabled by default)
    # See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
    #HTTPCACHE_ENABLED = True
    #HTTPCACHE_EXPIRATION_SECS = 0
    #HTTPCACHE_DIR = 'httpcache'
    #HTTPCACHE_IGNORE_HTTP_CODES = []
    #HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

    LOG_LEVEL = 'WARNING'
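One detail in a settings module is easy to miss: a setting only takes effect if it is assigned with `=`. A line of the form `NAME: 'value'` is still valid Python, but it is a bare variable annotation and binds no value, so the setting silently keeps its default. The sketch below shows the difference by running two one-line snippets with exec; the string 'demo-agent' is a placeholder.

```python
# Run each one-line "settings file" in its own namespace
annotated = {}
exec("USER_AGENT: 'demo-agent'", annotated)   # annotation only, nothing is assigned

assigned = {}
exec("USER_AGENT = 'demo-agent'", assigned)   # a real assignment

print("USER_AGENT" in annotated)   # False
print(assigned["USER_AGENT"])      # demo-agent
```

If a custom user agent does not seem to be applied, this is one of the first things worth checking in settings.py.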

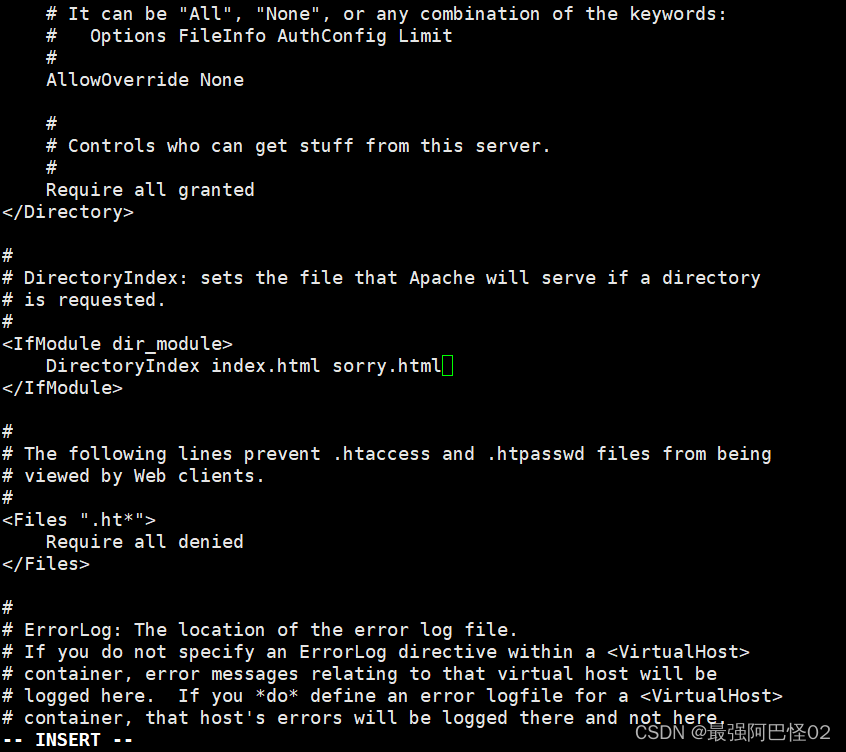

Here LOG_LEVEL is set to WARNING (Scrapy's default is DEBUG) so that the printed output is less cluttered.
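Scrapy's logging is built on Python's standard logging module, so the effect of this threshold can be sketched with the standard library alone. The logger name loglevel_demo below is arbitrary and has nothing to do with Scrapy's own loggers.

```python
import logging

logger = logging.getLogger("loglevel_demo")
logger.setLevel(logging.WARNING)  # same threshold as LOG_LEVEL = 'WARNING'

for level in (logging.DEBUG, logging.INFO, logging.WARNING, logging.ERROR):
    # Messages below the threshold are dropped; WARNING and above get through
    print(logging.getLevelName(level), logger.isEnabledFor(level))
```

DEBUG and INFO messages are filtered out, while WARNING and ERROR still appear. Output produced by print, such as the items printed in the pipeline, does not go through logging and is therefore unaffected by LOG_LEVEL.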

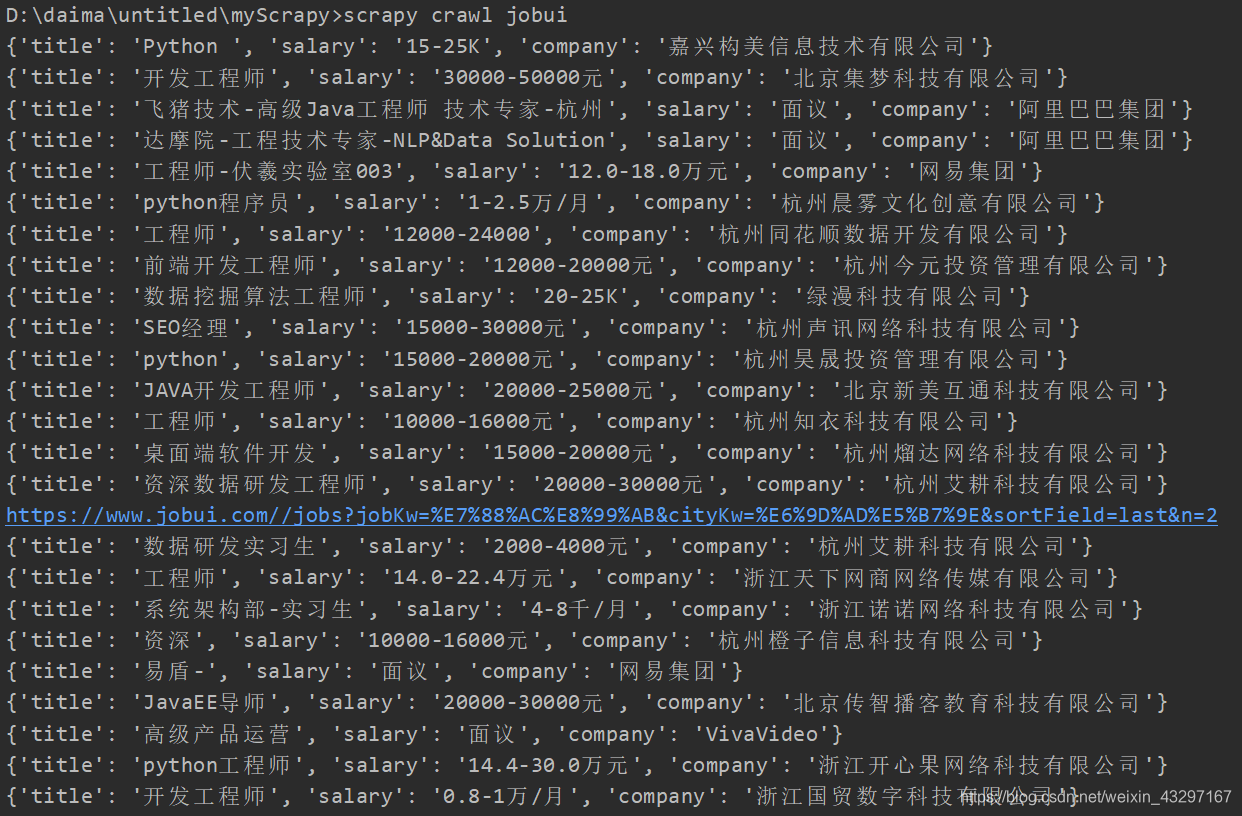

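7. scrapy crawl jobui

The last step is to run the spider.

The lines printed in between the items are the next-page URLs; the print statement that produces them can be commented out in jobui.py.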