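The detailed analysis behind this article is published on my personal WeChat official account. Thanks for following:

ID:

Official account name: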

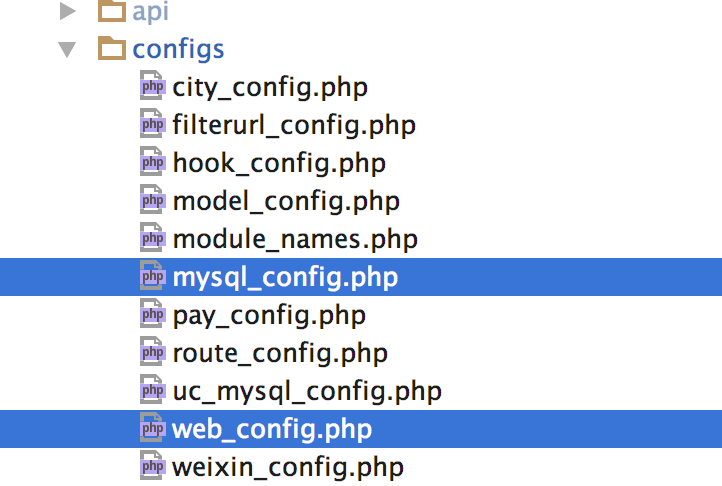

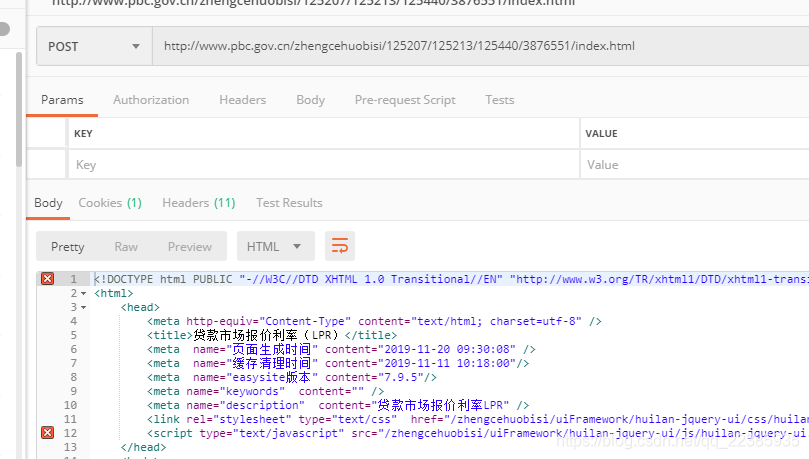

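Below is the crawler code that scrapes the search result pages, does an initial cleanup of the data, and then scrapes the detail page of each job posting: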

# -*- coding: utf-8 -*-
from bs4 import BeautifulSoup
import requests
import pandas as pd

# Search URL for the keyword 数据分析 (percent-encoded twice); {} is the page number
url = (r'https://search.51job.com/list/000000,000000,0000,00,9,99,'
       r'%25E6%2595%25B0%25E6%258D%25AE%25E5%2588%2586%25E6%259E%2590,2,{}.html?'
       r'lang=c&stype=1'
       r'&postchannel=0000&workyear=99&cotype=99&degreefrom=99&jobterm=99&companysize=99&lonlat=0%2'
       r'C0&radius=-1&ord_field=0&confirmdate=9&fromType=&dibiaoid=0&address=&line=&specialarea=00&from=&welfare=')

# Rows collected from the search result pages
final = []
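The long `%25E6%2595%25B0...` segment in the URL is the search keyword 数据分析 percent-encoded twice. As a minimal sketch of how that segment could be rebuilt for a different keyword (the helper name `encode_keyword` is hypothetical and not part of the original script):

```python
from urllib.parse import quote


def encode_keyword(keyword: str) -> str:
    """Percent-encode a keyword twice, matching the segment seen in the URL."""
    once = quote(keyword, safe='')   # UTF-8 bytes become %XX escapes
    return quote(once, safe='')      # each '%' becomes '%25'


print(encode_keyword('数据分析'))
```

The printed value can be compared against the literal in `url` to confirm that the encoding matches before swapping in another keyword.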

def get_brief_contents():
    # Walk through the search result pages and collect one dict per posting
    for i in range(0, 2001):
        url_real = url.format(i)
        try:
            res = requests.get(url_real)
            res.encoding = 'gbk'
            soup = BeautifulSoup(res.text, 'html.parser')
            total_content = soup.select('.dw_table')[0]
            companys = total_content.select('.el')
            # y = total_content.select('.t1')
            # print(y)
            for company in companys:
                total = {}
                position_all = company.select('.t1')[0]
                position_a = position_all.select('a')
                # The table header row has no link, so it is skipped here
                if len(position_a) > 0:
                    total['name'] = company.select('.t2')[0].text.strip()
                    total['position'] = position_a[0]['title']
                    total['location'] = company.select('.t3')[0].text.strip()
                    total['salary'] = company.select('.t4')[0].text.strip()
                    total['update_date'] = company.select('.t5')[0].text.strip()
                    total['pos_url'] = position_a[0]['href'].strip()
                    print('Dealing with page ' + str(i) + ' Please wait------')
                    print('Company\'s name is ' + total['name'])
                    final.append(total)
        except Exception:
            print('Failed with page ' + str(i) + ' ------')
    df_raw = pd.DataFrame(final)
    df_raw.to_csv('0527_51job_raw.csv', mode='a', encoding='gbk')
    print('saved raw_data')
    return final

# cleandata = []

def clean_data(final):
    # final = pd.read_csv('0521_51job_raw.csv', encoding='gbk', header=0)
    # print('Now start Clean Raw data------')
    df = pd.DataFrame(final)
    df = df.dropna(how='any')
    print('Now choose data with keywords------')
    # Keep postings whose title mentions 数据 (data) or 分析 (analysis)
    df = df[df.position.str.contains(r'数据|分析')]
    # Keep salaries quoted per month (千/月, 万/月) or per year (万/年)
    df = df[df.salary.str.contains(r'千/月|万/月|万/年')]
    print('Now split data with dash------')
    # 'low-high<unit>' becomes low_s and high_s; high_s still carries the 3-character unit
    Split = pd.DataFrame((x.split('-') for x in df['salary']), index=df.index, columns=['low_s', 'high_s'])
    df = pd.merge(df, Split, right_index=True, left_index=True)
    print('Now unify the unit------')
    # 千/月: already in thousand yuan per month
    row_with_T = df['salary'].str.contains('千/月').fillna(False)
    for i, rows in df[row_with_T].iterrows():
        df.at[i, 'low_s'] = float(rows['low_s'])
        df.at[i, 'high_s'] = float(rows['high_s'][:-3])
    # 万/月: multiply by 10 to get thousand yuan per month
    row_with_TT = df['salary'].str.contains('万/月').fillna(False)
    for i, rows in df[row_with_TT].iterrows():
        df.at[i, 'low_s'] = float(rows['low_s']) * 10
        df.at[i, 'high_s'] = float(rows['high_s'][:-3]) * 10
    # Note: 万/年 rows pass the filter above but are not converted here
    print(df)
    df.to_csv('0527clean.csv', mode='a', encoding='gbk')
    print('saved clean data')
    # cleandata.extend(df)
    return 1

# Rows collected from the job detail pages
detail_info = []
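The unit step in `clean_data` converts 千/月 and 万/月 salaries to thousand yuan per month, while 万/年 rows pass the filter without being converted. The following is a minimal sketch of one way to parse all three units in a single helper. The function name `parse_salary`, the `UNIT_RATIOS` table, and the choice to divide yearly figures by 12 are assumptions for illustration, not the author's method.

```python
import re

# (multiplier, divisor) that turn each unit into thousand yuan per month.
# Dividing 万/年 by 12 is an assumption; the original script leaves it unconverted.
UNIT_RATIOS = {'千/月': (1, 1), '万/月': (10, 1), '万/年': (10, 12)}

SALARY_PATTERN = re.compile(r'(\d+(?:\.\d+)?)-(\d+(?:\.\d+)?)(千/月|万/月|万/年)')


def parse_salary(text):
    """Return (low, high) in thousand yuan per month, or None if the format differs."""
    match = SALARY_PATTERN.fullmatch(text.strip())
    if match is None:
        return None
    low, high, unit = match.groups()
    mul, div = UNIT_RATIOS[unit]
    return float(low) * mul / div, float(high) * mul / div


for raw in ['6-8千/月', '1-1.5万/月', '12-24万/年', '面议']:
    print(raw, parse_salary(raw))
```

Returning `None` for anything that does not match keeps unexpected formats (a single figure, a daily rate) visible instead of failing halfway through a loop.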

def get_detail_content():
    df = pd.read_csv('0527clean.csv', encoding='gbk', header=0)
    print(df)
    print(len(df))
    print('Getting details from specific urls------')
    urls = df['pos_url']
    failed_count = 0
    for url in urls:
        print('Connecting \n' + url + ' ------')
        info = {}
        info['url'] = url
        res = requests.get(url)
        res.encoding = 'gbk'
        soup = BeautifulSoup(res.text, 'html.parser')
        try:
            # Company type, headcount and industry
            basic_info = soup.select('.cn')[0].select('p')[1].text.strip()
            basic_info = [x.strip() for x in basic_info.split('|')]
            if len(basic_info) == 4:
                info['company_type'] = basic_info[0]
                info['company_imp_n'] = basic_info[1]
                info['company_field'] = basic_info[2]
            else:
                info['company_type'] = basic_info[0]
                info['company_imp_n'] = ''
                info['company_field'] = basic_info[1]
            # Required experience, education and number of openings
            try:
                # Probe for a fourth .sp4 element; if it is missing, the education field is absent
                tag = soup.select('.sp4')[3].text
                info['basic_req_exp'] = soup.select('.sp4')[0].text
                info['basic_req_gra'] = soup.select('.sp4')[1].text
                info['basic_req_num'] = soup.select('.sp4')[2].text
            except IndexError:
                info['basic_req_exp'] = soup.select('.sp4')[0].text
                info['basic_req_gra'] = ''
                info['basic_req_num'] = soup.select('.sp4')[1].text
            # basic_req_exp = basic_req[1].text
            # Benefits
            try:
                info['benefit'] = soup.select('.t2')[0].text.strip()
            except IndexError:
                info['benefit'] = ''
            # Job description and responsibilities
            disc = soup.select('.tBorderTop_box')[1].select('p')[:-1]
            info['disc'] = [x.text for x in disc]
            # Job category
            label = soup.select('.mt10')[0].text.replace('职能类别:', '').strip()
            info['label'] = ''.join(label)
            print('Finishing \n' + url + ' ------')
            print('The label is \n' + info['label'])
            detail_info.append(info)
        except Exception:
            print('Failed with \n' + url + ' ------')
            failed_count += 1
    print('failed_count == ' + str(failed_count))
    df1 = pd.DataFrame(detail_info)
    # df.dropna(how='any')
    df1.to_csv('20180527_detail_info.csv', mode='a')
    print('saved detail_info')
    print(len(detail_info))
    return 1

def save_my_file():
    df_detail = pd.read_csv('20180527_detail_info.csv', header=0)
    print(df_detail)
    # Read the file that clean_data() actually wrote, with the same encoding
    df_clean = pd.read_csv('0527clean.csv', encoding='gbk', header=0)
    print(df_clean)
    # Join on the posting URL. The original called set_index() without keeping the
    # result, so the merge matched rows by position instead of by URL.
    df_all = pd.merge(df_clean, df_detail, left_on='pos_url', right_on='url')
    df_all.to_csv('20180527_51job-data_analysis.csv', mode='a', encoding='gbk')
    print('crawling over')

get_brief_contents()
clean_data(final)
get_detail_content()
save_my_file()

Below is the code for one of the word clouds:

"""

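WordCloud's generate_from_frequencies method:

no word segmentation is needed;

word frequencies are required;

the frequencies must be wrapped into a dictionary with dict() and passed as the frequencies argument.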

"""

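The docstring above says that `generate_from_frequencies` takes a word-to-count dictionary. As a minimal, standard-library sketch of building such a dictionary from free text: the helper name `english_term_frequencies` and the sample string are illustrative assumptions, and `collections.Counter` is swapped in for the per-word `list.count` used in the script.

```python
import re
from collections import Counter


def english_term_frequencies(text, stopwords=()):
    """Count English tokens case-insensitively and skip the given stopwords."""
    skip = {w.lower() for w in stopwords}
    tokens = (t.lower() for t in re.findall(r'[a-zA-Z]+', text))
    counts = Counter(t for t in tokens if t not in skip)
    # Title-case the keys, the same display style the script uses
    return {word.title(): n for word, n in counts.items()}


sample = '熟练使用SQL和Python,了解sql优化;会用Excel'
print(english_term_frequencies(sample, stopwords=['A', 'Www']))
```

The resulting dictionary can be passed straight to `WordCloud(...).generate_from_frequencies(...)`. Stopwords are filtered here because, per the wordcloud documentation, the `stopwords` constructor argument is ignored by that method.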
import re
from wordcloud import WordCloud
import matplotlib.pyplot as plt
import pandas as pd

font_path = r'C:\WINDOWS\Fonts\msyhl.ttc'
file = pd.read_excel(r'D:\PycharmProjects\新建文件夹\汇合-0602.xlsx', sheet_name='Sheet1')
benefit = file['disc'].dropna()
all_text = ''.join(text for text in benefit)

# Extract English tokens (tool and language names) and lowercase them
s = re.findall('[a-zA-Z]+', all_text)
s = [i.lower() for i in s]
s1 = set(s)

en_word = []
stopwords = ['A', 'B', 'D', 'T', 'C', 'K', 'Www']
# One record per distinct token: title-cased key and its count
for words in s1:
    final_en_word = {}
    final_en_word['key'] = words.title()
    final_en_word['num'] = s.count(words)
    en_word.append(final_en_word)
print(en_word)

df = pd.DataFrame(en_word)
print(df)
df.to_excel('en_word.xlsx')

# generate_from_frequencies ignores WordCloud's stopwords argument,
# so the stopwords are filtered out while building the dictionary
dic = {k: v for k, v in zip(df.key, df.num) if k not in stopwords}
print(dic)

wc = WordCloud(font_path=font_path, background_color='White', max_words=50, height=2000, scale=1, width=3000).generate_from_frequencies(dic)
plt.imshow(wc)
plt.axis('off')
wc.to_file(r'D:\公众号\soft_prom_req.jpg')
plt.show()

Below is the code for the work-experience pie chart:

import matplotlib.pyplot as plt
import pandas as pd

path = r'D:\PycharmProjects\新建文件夹\汇合-0602.xlsx'
scope = pd.read_excel(path, sheet_name='Sheet2')
# Average of the low and high ends of each salary range
scope['ave_salary'] = (scope['low_s'] + scope['high_s']) / 2

# Count how often each experience requirement occurs and turn the counts into shares
requirement = list(scope['basic_req_exp'])
unique_r = pd.DataFrame(list(set(requirement)))
unique_r.columns = ['exp']
unique_r['exp_num'] = unique_r['exp'].apply(lambda x: requirement.count(x))
unique_r['exp_perc'] = unique_r['exp_num'] / unique_r['exp_num'].sum()
# Slice label: the text up to and including 年 (years), otherwise 无经验 (no experience)
unique_r['year'] = unique_r['exp'].apply(lambda v: v[:v.find('年') + 1] if '年' in v else '无经验')
print(unique_r)
print(unique_r['exp_num'].sum())

plt.figure(1, figsize=(10, 10))
# SimHei is needed to render the Chinese labels
plt.rcParams['font.sans-serif'] = ['SimHei']
plt.rcParams['font.size'] = 20
# One offset per slice; the list length must equal the number of rows in unique_r (7 here)
explode = [0.1, 0.1, 0.2, 0.1, 0.1, 0.1, 0.2]
plt.pie(x=unique_r['exp_perc'], explode=explode, labels=unique_r['year'], autopct='%1.1f%%', labeldistance=1.05, shadow=True, startangle=90)
plt.axis('equal')
# plt.title('')
# plt.legend(labels=unique_r['year'], loc='right', borderaxespad=-2, frameon=False)
plt.savefig('工作经验饼图.jpg')
plt.show()
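The share computation behind the pie chart can also be written without pandas. This is a minimal sketch, assuming requirement strings shaped like the sample list below (the sample values and the function names are illustrative, not taken from the data). Unlike the script, it groups by the shortened label rather than by the raw string, so two raw values that map to the same label end up in one slice.

```python
from collections import Counter


def experience_label(text):
    """Keep the text up to and including '年'; otherwise report '无经验'."""
    return text[:text.find('年') + 1] if '年' in text else '无经验'


def experience_shares(requirements):
    """Map each experience label to its share of all postings."""
    counts = Counter(experience_label(r) for r in requirements)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


sample = ['3-4年经验', '无工作经验', '3-4年经验', '1年经验']
print(experience_shares(sample))
```

With pandas, `Series.value_counts(normalize=True)` on the label column produces the same shares in one call.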

Below is the code for the bar chart:

import matplotlib.pyplot as plt
import pandas as pd

path = r'D:\PycharmProjects\charts\en_word.xlsx'
# index_col=0 is an assumption: it restores the index column written by to_excel,
# which the labels passed to drop() below appear to refer to
df = pd.read_excel(path, index_col=0)
df.columns = ['softw', 'num']
# Take the first 20 rows and drop three entries by index label, leaving 17
pf = df.head(20).drop([3268, 12, 2989])
print(pf)
pf['softw'] = pf['softw'].str.upper()

fig, ax = plt.subplots(figsize=(15, 8))
ax.bar(range(len(pf.softw)), pf.num, tick_label=pf.softw)
ax.set_ylabel('Frequencies', fontsize=18)
ax.set_xlabel('Top 17 Tools Required', fontsize=18)
plt.savefig('Top 17 Tools Required.jpg')
plt.show()

You are welcome to follow my WeChat official account, which will keep publishing data analysis articles on Python, Tableau, SQL and more.
