哈喽你们好啊

最近有这样一个作业,本来想偷懒,在csdn上找一个代码,复制粘贴完成就🆗了,结果搜寻全站,竟然无此内容,这就很无奈了

既然如此 ,那我就自己动手,丰衣足食了 。

好了,回归正题,今天我分享是用scrapy爬取淘车(https://www.taoche.com/),接下来我们一起看看应如何爬取呢??

scrapy 是什么呢 ?我简单的介绍一下吧

Scrapy 是一套基于基于Twisted的异步处理框架,纯python实现的爬虫框架,用户只需要定制开发几个模块就可以轻松的实现一个爬虫,用来抓取网页内容以及各种图片,非常之方便~

首先是创建项目

在py里命令框输入文件就会创建好的。

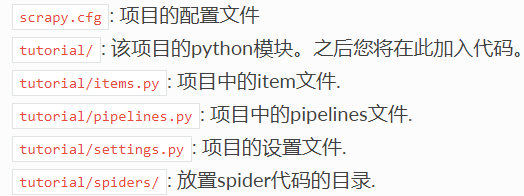

这些就是各py文件具体作用了。

步入正题

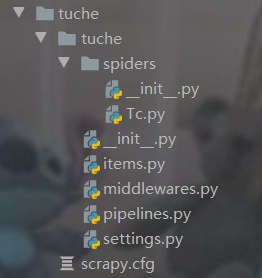

我们先创建项目

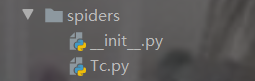

然后切入spiders这个文件夹创建爬虫文件

输入命令 scrapy genspider Tc

接下来我们看淘车网站,我需要爬取他每一个城市的车辆有的和出售价格品牌

第一步,我先获取全国城市,要进行拼接url

city = ['quanguo', 'shijiazhuang', 'tangshan', 'qinhuangdao', 'handan', 'xingtai', 'baoding', 'zhangjiakou','chengde', 'cangzhou', 'langfang', 'hengshui', 'taiyuan', 'datong', 'yangquan', 'changzhi', 'jincheng','shuozhou', 'jinzhong', 'yuncheng', 'xinzhou', 'linfen', 'lvliang', 'huhehaote', 'baotou', 'wuhai','chifeng', 'tongliao', 'eerduosi', 'hulunbeier', 'bayannaoer', 'wulanchabu', 'xinganmeng','xilinguolemeng', 'alashanmeng', 'changchun', 'jilin', 'hangzhou', 'ningbo', 'wenzhou', 'jiaxing','huzhou', 'shaoxing', 'jinhua', 'quzhou', 'zhoushan', 'tz', 'lishui', 'bozhou', 'chizhou', 'xuancheng','nanchang', 'jingdezhen', 'pingxiang', 'jiujiang', 'xinyu', 'yingtan', 'ganzhou', 'jian', 'yichun','jxfz','shangrao', 'xian', 'tongchuan', 'baoji', 'xianyang', 'weinan', 'yanan', 'hanzhong', 'yl', 'ankang','shangluo', 'lanzhou', 'jiayuguan', 'jinchang', 'baiyin', 'tianshui', 'wuwei', 'zhangye', 'pingliang','jiuquan', 'qingyang', 'dingxi', 'longnan', 'linxia', 'gannan', 'xining', 'haidongdiqu', 'haibei','huangnan', 'hainanzangzuzizhizho', 'guoluo', 'yushu', 'haixi', 'yinchuan', 'shizuishan', 'wuzhong','guyuan', 'zhongwei', 'wulumuqi', 'kelamayi', 'shihezi', 'tulufandiqu', 'hamidiqu', 'changji','boertala','bazhou', 'akesudiqu', 'xinjiangkezhou', 'kashidiqu', 'hetiandiqu', 'yili', 'tachengdiqu','aletaidiqu','xinjiangzhixiaxian', 'changsha', 'zhuzhou', 'xiangtan', 'hengyang', 'shaoyang', 'yueyang', 'changde','zhangjiajie', 'yiyang', 'chenzhou', 'yongzhou', 'huaihua', 'loudi', 'xiangxi', 'guangzhou','shaoguan','shenzhen', 'zhuhai', 'shantou', 'foshan', 'jiangmen', 'zhanjiang', 'maoming', 'zhaoqing', 'huizhou','meizhou', 'shanwei', 'heyuan', 'yangjiang', 'qingyuan', 'dongguan', 'zhongshan', 'chaozhou','jieyang','yunfu', 'nanning', 'liuzhou', 'guilin', 'wuzhou', 'beihai', 'fangchenggang', 'qinzhou', 'guigang','yulin', 'baise', 'hezhou', 'hechi', 'laibin', 'chongzuo', 'haikou', 'sanya', 'sanshashi','qiongbeidiqu','qiongnandiqu', 'hainanzhixiaxian', 'chengdu', 'zigong', 'panzhihua', 'luzhou', 'deyang', 'mianyang','guangyuan', 'suining', 'neijiang', 'leshan', 'nanchong', 'meishan', 'yibin', 'guangan', 'dazhou','yaan','bazhong', 'ziyang', 'aba', 'ganzi', 'liangshan', 'guiyang', 'liupanshui', 'zunyi', 'anshun','tongrendiqu', 'qianxinan', 'bijiediqu', 'qiandongnan', 'qiannan', 'kunming', 'qujing', 'yuxi','baoshan','zhaotong', 'lijiang', 'puer', 'lincang', 'chuxiong', 'honghe', 'wenshan', 'xishuangbanna', 'dali','dehong', 'nujiang', 'diqing', 'siping', 'liaoyuan', 'tonghua', 'baishan', 'songyuan', 'baicheng','yanbian', 'haerbin', 'qiqihaer', 'jixi', 'hegang', 'shuangyashan', 'daqing', 'yc', 'jiamusi','qitaihe','mudanjiang', 'heihe', 'suihua', 'daxinganlingdiqu', 'shanghai', 'tianjin', 'chongqing', 'nanjing','wuxi','xuzhou', 'changzhou', 'suzhou', 'nantong', 'lianyungang', 'huaian', 'yancheng', 'yangzhou','zhenjiang','taizhou', 'suqian', 'lasa', 'changdudiqu', 'shannan', 'rikazediqu', 'naqudiqu', 'alidiqu','linzhidiqu','hefei', 'wuhu', 'bengbu', 'huainan', 'maanshan', 'huaibei', 'tongling', 'anqing', 'huangshan','chuzhou','fuyang', 'sz', 'chaohu', 'luan', 'fuzhou', 'xiamen', 'putian', 'sanming', 'quanzhou', 'zhangzhou','nanping', 'longyan', 'ningde', 'jinan', 'qingdao', 'zibo', 'zaozhuang', 'dongying', 'yantai','weifang','jining', 'taian', 'weihai', 'rizhao', 'laiwu', 'linyi', 'dezhou', 'liaocheng', 'binzhou', 'heze','zhengzhou', 'kaifeng', 'luoyang', 'pingdingshan', 'jiyuan', 'anyang', 'hebi', 'xinxiang', 'jiaozuo','puyang', 'xuchang', 'luohe', 'sanmenxia', 'nanyang', 'shangqiu', 'xinyang', 'zhoukou', 'zhumadian','henanzhixiaxian', 'wuhan', 'huangshi', 'shiyan', 'yichang', 'xiangfan', 'ezhou', 'jingmen', 'xiaogan','jingzhou', 'huanggang', 'xianning', 'qianjiang', 'suizhou', 'xiantao', 'tianmen', 'enshi','hubeizhixiaxian', 'beijing', 'shenyang', 'dalian', 'anshan', 'fushun', 'benxi', 'dandong', 'jinzhou','yingkou', 'fuxin', 'liaoyang', 'panjin', 'tieling', 'chaoyang', 'huludao', 'anhui', 'fujian', 'gansu','guangdong', 'guangxi', 'guizhou', 'hainan', 'hebei', 'henan', 'heilongjiang', 'hubei', 'hunan', 'jl','jiangsu', 'jiangxi', 'liaoning', 'neimenggu', 'ningxia', 'qinghai', 'shandong', 'shanxi', 'shaanxi','sichuan', 'xizang', 'xinjiang', 'yunnan', 'zhejiang', 'jjj', 'jzh', 'zsj', 'csj', 'ygc']

车的品牌

car_list = ['southeastautomobile', 'sma', 'audi', 'hummer', 'tianqimeiya', 'seat', 'lamborghini','weltmeister','changanqingxingche-281', 'chevrolet', 'fiat', 'foday', 'eurise', 'dongfengfengdu', 'lotus-146','jac','enranger', 'bjqc', 'luxgen', 'jinbei', 'sgautomotive', 'jonwayautomobile', 'beijingjeep','linktour','landrover', 'denza', 'jeep', 'rely', 'gacne', 'porsche', 'wey', 'shenbao', 'bisuqiche-263','beiqihuansu', 'sinogold', 'roewe', 'maybach', 'greatwall', 'chenggongqiche', 'zotyeauto','kaersen','gonow', 'dodge', 'siwei', 'ora', 'lifanmotors', 'cajc', 'hafeiautomobile', 'sol','beiqixinnengyuan','dorcen', 'lexus', 'mercedesbenz', 'ford', 'huataiautomobile', 'jmc', 'peugeot', 'kinglongmotor','oushang', 'dongfengxiaokang-205', 'chautotechnology', 'faw-hongqi', 'mclaren', 'dearcc','fengxingauto', 'singulato', 'nissan', 'saleen', 'ruichixinnengyuan', 'yulu', 'isuzu', 'zhinuo','alpina', 'renult', 'kawei', 'cadillac', 'hanteng', 'defu', 'subaru', 'huasong', 'casyc', 'geely','xpeng', 'jlkc', 'sj', 'nanqixinyatu1', 'horki', 'venucia', 'xinkaiauto', 'traum','shanghaihuizhong-45', 'zhidou', 'ww', 'riich', 'brillianceauto', 'galue', 'bugatti','guagnzhouyunbao', 'borgward', 'qzbd1', 'bj', 'changheauto', 'faw', 'saab', 'fuqiautomobile','skoda','citroen', 'mitsubishi', 'opel', 'qorosauto', 'zxauto', 'infiniti', 'mazda', 'arcfox-289','jinchengautomobile', 'kia', 'mini', 'tesla', 'gmc-109', 'chery', 'daoda-282', 'joylongautomobile','hafu-196', 'sgmw', 'wiesmann', 'acura', 'yunqueqiche', 'volvo', 'lynkco', 'karry', 'chtc', 'gq','redstar', 'everus', 'kangdi', 'chrysler', 'cf', 'maxus', 'smart', 'maserati', 'dayu', 'besturn','dadiqiche', 'ym', 'huakai', 'buick', 'faradayfuture', 'leapmotor', 'koenigsegg', 'bentley','rolls-royce', 'iveco', 'dongfeng-27', 'haige1', 'ds', 'landwind', 'volkswagen', 'sitech','toyota','polarsunautomobile', 'zhejiangkaersen', 'ladaa', 'lincoln', 'weilaiqiche', 'li', 'ferrari','jetour','honda', 'barbus', 'morgancars', 'ol', 'sceo', 'hama', 'dongfengfengguang', 'mg-79', 'ktm','changankuayue-283', 'suzuki', 'yudo', 'yusheng-258', 'fs', 'bydauto', 'jauger', 'foton', 'pagani','shangqisaibao', 'guangqihinomotors', 'polestar', 'fujianxinlongmaqichegufenyouxiangongsi','alfaromeo', 'shanqitongjia1', 'xingchi', 'lotus', 'hyundai', 'kaiyi', 'isuzu-132', 'bmw','ssangyong','astonmartin']

这些首要任务完成后就是拼接了

start_urls = []# 拼接初始urlfor i in city:for j in car_list:car_url = f"https://{i}.taoche.com/{j}/"start_urls.append(car_url)

打印一下结果

接下来就是匹配各链接每一页

def parse(self, response, **kwargs):"""获取每页的url"""page_num_max = response.xpath("""//*[@id="containerId"]/div[1]/div[7]/div/div//a[last()-1]/text()""").extract()# print(page_num_max)page_num_max = page_num_max[0] if page_num_max else '1'for v in range(1, int(page_num_max) + 1):real_url = response.url + '?page=' + str(v)print(real_url)yield scrapy.Request(url=real_url, callback=self.detail_page)pass

打印结果

最后就是匹配每一页的内容

def detail_page(self, response):"""获取车子信息"""car_dict = {}# print(response.url)all_car_title = response.xpath("""//*[@id="container_base"]/ul//li/div/div/a/@title""").extract()all_car_img = response.xpath("""//*[@id="container_base"]/ul//li/div/div/a/img/@src""").extract()all_car_years = response.xpath("""//*[@id="container_base"]/ul//li/div[2]/p/i[1]/text()""").extract()all_car_distance = response.xpath("""//*[@id="container_base"]/ul//li/div[2]/p/i[2]/text()""").extract()all_car_area = response.xpath("""//*[@id="container_base"]/ul//li/div[2]/p/i[3]/text()""").extract()all_car_area = all_car_area[0].strip()all_car_price = response.xpath("""//*[@id="container_base"]/ul//li/div[2]/div[1]/i/text()""").extract()if all_car_title:for i in range(len(all_car_title) - 1):car_dict['title'] = all_car_title[i]car_dict['img'] = "https:" + all_car_img[i]car_dict['years'] = all_car_years[i]car_dict['distance'] = all_car_distance[i]car_dict['area'] = all_car_area[i]car_dict['price'] = all_car_price[i]# print(car_dict)打印结果

今天分享就到这里了