The items file defines the fields to scrape:

import scrapy


class Pachong2Item(scrapy.Item):
    apartment = scrapy.Field()
    total_price = scrapy.Field()
    agent = scrapy.Field()
    image_urls = scrapy.Field()
    images = scrapy.Field()

The spider file:

# -*- coding: utf-8 -*-
import scrapy

from pachong2.items import Pachong2Item


class WoaiwojiaSpider(scrapy.Spider):
    name = 'woaiwojia'
    allowed_domains = ['bj.5i5j.com']
    start_urls = ['http://bj.5i5j.com/']

    # Override start_requests to request listing pages 1 through 4
    def start_requests(self):
        urls = ['https://bj.5i5j.com/ershoufang/n' + str(x) + '/' for x in range(1, 5)]
        for url in urls:
            yield scrapy.Request(url=url, callback=self.parsex)

    # Parse a listing page
    def parsex(self, response):
        print("response status code:", response.status)
        print("page source:", response.body)
        house_list = response.xpath('/html/body/div[6]/div[1]/div[2]/ul/li')
        print("listing nodes:", house_list)
        for house in house_list:
            item = Pachong2Item()
            item['apartment'] = house.xpath('div[2]/h3/a/text()').extract_first()
            print("title:", item['apartment'])
            item['total_price'] = house.xpath('div[2]/div[1]/div/p[1]/strong/text()').extract_first()
            print("total price:", item['total_price'])
            # Extract the detail-page link and build an absolute URL
            detail_url = response.urljoin(house.xpath('div[2]/h3/a/@href').extract_first())
            # Request the detail page, passing the partially filled item via meta;
            # callback names the method that handles the response
            yield scrapy.Request(detail_url, meta={'item': item}, callback=self.parse_detail)
        # next_url = response.xpath('//div[@class="pageSty rf"]/a[1]/@href').extract_first()
        # if next_url and page_num < 3:
        #     next_url = response.urljoin(next_url)
        #     yield scrapy.Request(next_url, callback=self.parse)

    # Parse a detail page
    def parse_detail(self, response):
        print("detail_response:", response.url)
        # Retrieve the item passed along from the listing page
        item = response.meta['item']
        # Add the agent information to the item
        item['agent'] = response.xpath('/html/body/div[5]/div[2]/div[2]/div[3]/ul/li[2]/h3/a/text()').extract_first()
        item['image_urls'] = response.xpath('/html/body/div[5]/div[2]/div[1]/div[1]/div/a[1]/img/@src').extract()
        print('agent:', item['agent'])
        yield item
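The URL handling in the spider can be tried out without running a crawl. The sketch below uses only the standard library: `list_page_urls` mirrors the list comprehension in `start_requests`, and `detail_url` uses `urllib.parse.urljoin`, which should behave like `response.urljoin` for pages that have no `<base>` tag. Both helper names and the sample `href` are assumptions made for illustration, not values taken from the site.

```python
from urllib.parse import urljoin

BASE = 'https://bj.5i5j.com/ershoufang/'


def list_page_urls(first=1, last=4):
    """Build the listing-page URLs n<first>/ .. n<last>/ (inclusive)."""
    return [f'{BASE}n{page}/' for page in range(first, last + 1)]


def detail_url(page_url, href):
    """Resolve a (possibly relative) href against the page it was found on."""
    return urljoin(page_url, href)


pages = list_page_urls()
# Hypothetical href, only to show how a root-relative link is resolved
example = detail_url(pages[0], '/ershoufang/12345.html')
print(pages)
print(example)
```

Building absolute URLs this way keeps the detail-page requests valid whether the site emits relative or absolute links.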

The settings file:

BOT_NAME = 'pachong2'

SPIDER_MODULES = ['pachong2.spiders']

NEWSPIDER_MODULE = 'pachong2.spiders'

ROBOTSTXT_OBEY = False

DOWNLOAD_DELAY = 3

COOKIES_ENABLED = False

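# Image download and storage

ITEM_PIPELINES = {'scrapy.pipelines.images.ImagesPipeline': 1}

# Raw string, so the backslashes in the Windows path are not treated as escape sequences
IMAGES_STORE = r'E:\Projects\PycharmProjects\pachong2\images'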
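With these two settings, the built-in `ImagesPipeline` downloads every URL in the item's `image_urls` field and records the results in `images`. As far as I know, its default naming scheme stores each file under `IMAGES_STORE` as `full/<SHA-1 of the URL>.jpg`, and the pipeline needs Pillow installed. The sketch below reproduces that naming with the standard library only; `default_image_path` is a hypothetical helper and the URL is a placeholder.

```python
import hashlib


def default_image_path(url):
    """Approximate the relative path ImagesPipeline is assumed to use by default."""
    digest = hashlib.sha1(url.encode('utf-8')).hexdigest()
    return f'full/{digest}.jpg'


# Placeholder URL, not a real image on the site
path = default_image_path('https://example.com/house.jpg')
print(path)
```

Because the file name is derived from the URL, the same image URL always maps to the same file on disk.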

# Set the cookie; copy its value from the browser's developer tools

DEFAULT_REQUEST_HEADERS = {'Cookie':'......'}

# Automatic throttling

AUTOTHROTTLE_ENABLED = True

HTTPERROR_ALLOWED_CODES = [403]
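Listing 403 in `HTTPERROR_ALLOWED_CODES` means Scrapy hands 403 responses to the spider callback instead of dropping them, so the callback may want to check the status before running any XPath. A minimal sketch of such a guard follows; `should_extract` is a hypothetical helper, not part of Scrapy.

```python
def should_extract(status):
    """Return True only for successful (2xx) responses."""
    return 200 <= status < 300


for code in (200, 403):
    print(code, should_extract(code))
```

Inside `parsex`, this could be used as `if not should_extract(response.status): return`, ideally after logging the blocked URL.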

# Middlewares

DOWNLOADER_MIDDLEWARES = {
    # 'scrapy.contrib.downloadermiddleware.httpproxy.HttpProxyMiddleware': None,
    # 'pachong2.middlewares.ProxyMiddleWare': 125,
    # 'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware': None
    'pachong2.middlewares.UserAgentMiddleware': 543,
    'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware': None,  # Disable the default User-Agent middleware
}

The pipelines file:

class Pachong2Pipeline(object):
    def process_item(self, item, spider):
        return item
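The pipeline above passes items through unchanged, and the settings shown register only `ImagesPipeline`, so it does not run unless it is added to `ITEM_PIPELINES`. As a sketch of what a pipeline could do here, the hypothetical `CleanFieldsPipeline` below trims whitespace from the text fields and converts `total_price` to a number when the text is numeric. It assumes the item behaves like a dict, which holds for `scrapy.Item`.

```python
class CleanFieldsPipeline:
    """Hypothetical pipeline: trims text fields and parses the price."""

    def process_item(self, item, spider):
        for key in ('apartment', 'agent'):
            value = item.get(key)
            if isinstance(value, str):
                item[key] = value.strip()
        price = item.get('total_price')
        if isinstance(price, str):
            try:
                item['total_price'] = float(price.strip())
            except ValueError:
                pass  # leave non-numeric text untouched
        return item


# A plain dict stands in for a Pachong2Item in this demonstration
sample = {'apartment': '  Two-bedroom flat ', 'total_price': ' 350 ', 'agent': None}
print(CleanFieldsPipeline().process_item(sample, spider=None))
```

To enable it, an entry such as `'pachong2.pipelines.CleanFieldsPipeline': 300` would go into `ITEM_PIPELINES`; the priority value is an arbitrary choice.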

The middleware sets a custom User-Agent:

class UserAgentMiddleware(object):
    def process_request(self, request, spider):
        USER_AGENT = ''
        # setdefault only fills the header when the request has not set one already
        request.headers.setdefault('User-Agent', USER_AGENT)
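A common variation on this middleware is to rotate between several User-Agent strings. The sketch below is one way that could look; the class name `RandomUserAgentMiddleware` and the strings in `USER_AGENTS` are illustrative assumptions and would need to be replaced with values you actually want to send. A small stand-in request object is used so the example runs without Scrapy.

```python
import random

# Illustrative values only; substitute real browser strings as needed
USER_AGENTS = [
    'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36',
    'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/17.0 Safari/605.1.15',
]


class RandomUserAgentMiddleware:
    """Hypothetical downloader middleware that picks a User-Agent per request."""

    def process_request(self, request, spider):
        # setdefault leaves a User-Agent that was set explicitly on the request untouched
        request.headers.setdefault('User-Agent', random.choice(USER_AGENTS))
        return None  # returning None lets Scrapy continue processing the request


class FakeRequest:
    """Stand-in for scrapy.Request, exposing only a dict-like headers attribute."""

    def __init__(self):
        self.headers = {}


req = FakeRequest()
RandomUserAgentMiddleware().process_request(req, spider=None)
print(req.headers['User-Agent'])
```

To use such a class in the project, it would replace `'pachong2.middlewares.UserAgentMiddleware'` in `DOWNLOADER_MIDDLEWARES`.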

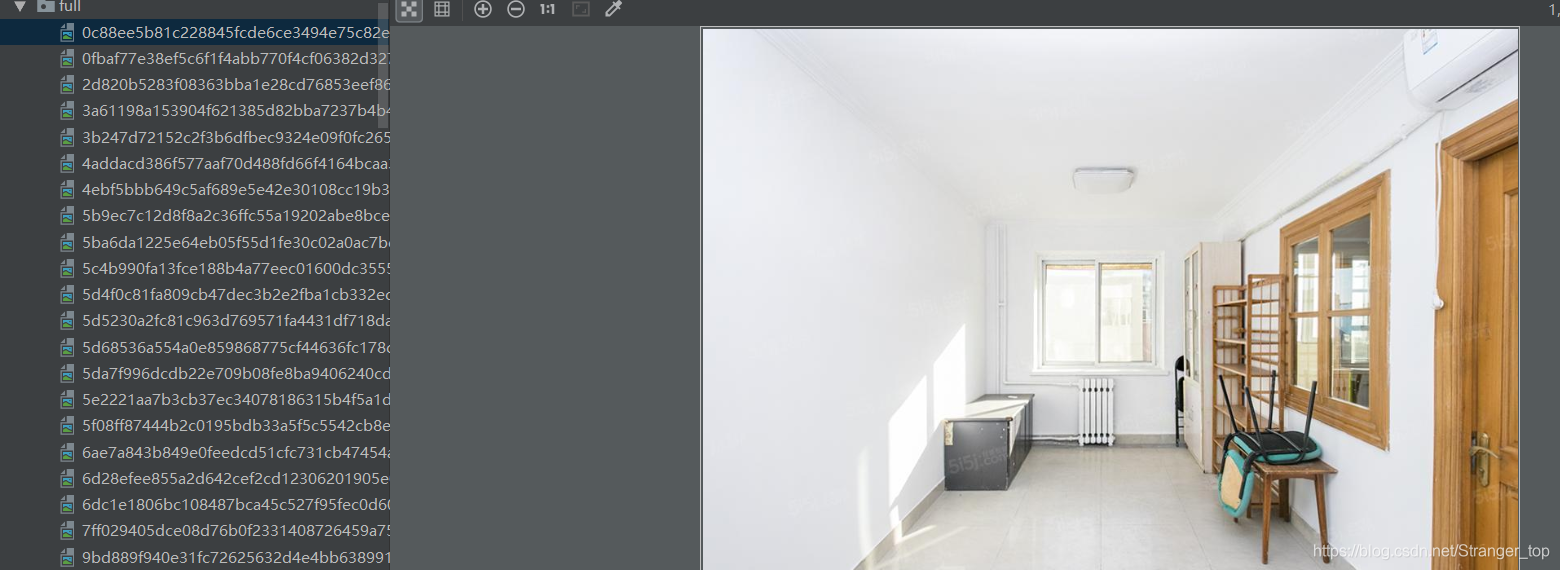

Result:

For personal study only. If this infringes any rights, please contact me and it will be removed.