使用beautifulSoup框架爬取114黄页数据。

代码开源在gitee地址: https://gitee.com/aismarter/ScrapySpider_bs4_openpyxl_tinker

github地址: https://github.com/Aismarter/ScrapySpider_BS4_openpyxl_tinker

分析网站

首先打开网页,分析爬取网页的元素。

点击选中需要爬取的地方-鼠标右键-检查元素。

检测可见,要爬取的内容定位于:

<td id="tdDetails" class="text" height="500" valign="top">释放数据潜能,激...。</td>在td块下。

之后,对分析结果进行爬取。

循环爬取实现

设置一个循环,手动的输入不同网站爬取。

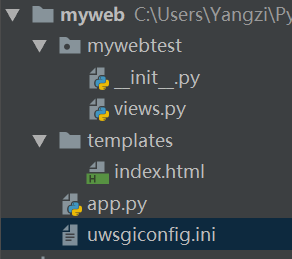

但手动输入失误过多,使用tkinter,图像化输入。

启一个图像化界面,图像化输入。

图像化测试代码如下:

# tktest.pyimport tkinter as tkclass Window:def __init__(self, handle):self.win = handleself.createwindow()self.run()def createwindow(self):self.win.geometry('400x400')# label 1self.label_text = tk.StringVar()self.label_text.set("----")self.lable = tk.Label(self.win,textvariable=self.label_text,font=('Arial', 11), width=15, height=2)self.lable.pack()# text_contrlself.entry_text = tk.StringVar()self.entry = tk.Entry(self.win, textvariable=self.entry_text, width=30)self.entry.pack()# buttonself.button = tk.Button(self.win, text="set label to text", width=15, height=2, command=self.setlabel)self.button.pack()def setlabel(self):print(self.entry_text.get())self.label_text.set(self.entry_text.get())def get_input(self):newinfo = self.entry_text.get()print("this is new information: " + newinfo)return newinfodef run(self):try:self.win.mainloop()except Exception as e:print("*** exception:\n".format(e))def input_info():window = tk.Tk()window.title('hello tkinter')Window(window).run()if __name__ == "__main__":input_info()

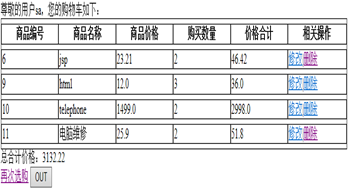

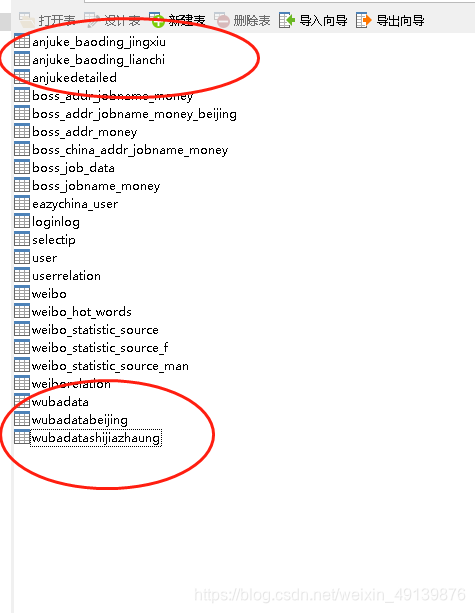

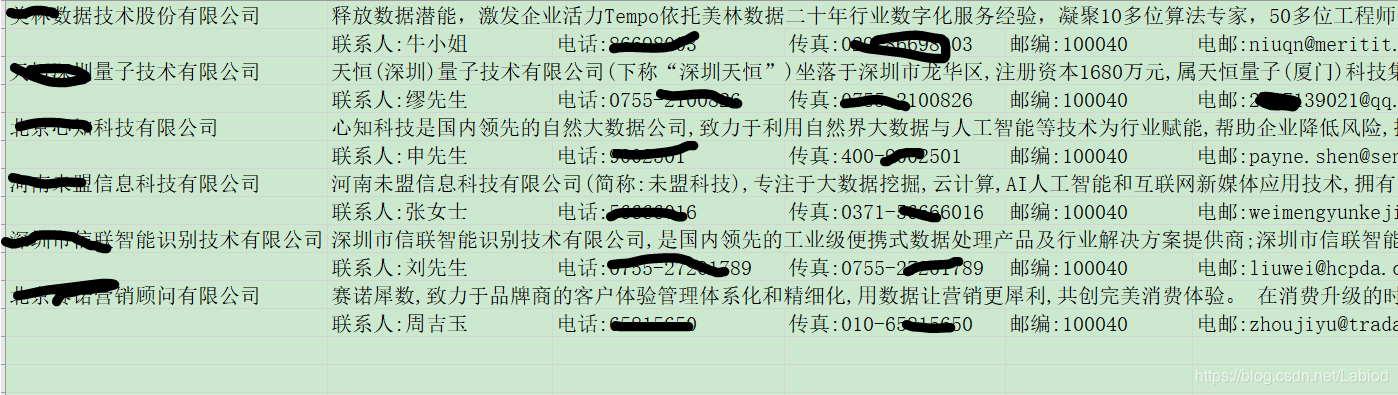

最终爬取结果

最终代码

import requests

import os

from tktest import *

from bs4 import BeautifulSoup

from openpyxl import Workbook

import openpyxldef get_information(url):# url = "http://www.ygsoft.com/BigData/index.html?from=baidu"page = requests.get(url)# print(page.status_code)# print(page.content)soup = BeautifulSoup(page.content, 'html.parser')# print(soup.prettify())# details = soup.find_all('td', class_="text")# print(details.get_text())# return detailsname = soup.find_all('td', class_="lian-right")name_mess = []for name1 in name:mess = str(name1.get_text())print(mess)# print(name1.get_text())name_mess.append(mess)print(name_mess)return name_messdef get_information_name(url):# url = "http://www.ygsoft.com/BigData/index.html?from=baidu"page = requests.get(url)# print(page.status_code)# print(page.content)soup = BeautifulSoup(page.content, 'html.parser')# print(soup.prettify())# details = soup.find_all('td', class_="text")# print(details.get_text())# return detailsname = soup.find_all('td', class_="top2")name_mess = []for name1 in name:mess = str(name1.get_text())print(mess)# print(name1.get_text())name_mess.append(mess)print(name_mess)return name_messdef get_information_company(url):# url = "http://www.ygsoft.com/BigData/index.html?from=baidu"page = requests.get(url)# print(page.status_code)# print(page.content)soup = BeautifulSoup(page.content, 'html.parser')# print(soup.prettify())# details = soup.find_all('td', class_="text")# print(details.get_text())# return detailsname = soup.find_all('td', class_="text")name_mess = []for name1 in name:mess = str(name1.get_text())print(mess)# print(name1.get_text())name_mess.append(mess)print(name_mess)return name_messdef store_into_excel(filename,titleList, company ,name, wb, ws1, n):r = 1if n is not 1:r = n + n -1else:r = n# 设置表头# titleList = ['远光大数据平台']c = 1for n1 in company:ws1.cell(r, c + 2, n1)for row in range(len(titleList)):ws1.cell(row=r, column=c, value=titleList[row])c += 1c = 1# 填写表内容r += 1for name1 in name:ws1.cell(r, c+2, name1)c += 1c = 1wb.save(filename=filename)def main():# 将数据写入Excelwb = Workbook()# 设置Excel文件名filename = '黄页数据.xlsx'# 新建一个表ws1 = wb.activen = 1while True:print("\n\n\n**********进行第" + str(n) + "次爬取")print("网址:")url = Window(tk.Tk()).get_input()print(type(url))print("以获取到爬取连接: " + url)print("平台:")# titleList.append(Window(tk.Tk()).get_input())titleList = get_information_name(url)company = get_information_company(url)print("正在爬取数据")name = get_information(url)store_into_excel(filename, titleList, company, name, wb, ws1, n)# try:# name = get_information(url)# store_into_excel(filename, name, wb, ws1, n)# except:# print("输入有误。。。")print("输入任意值继续,输入q键退出。。")ans = Window(tk.Tk()).get_input()n += 1if ans is not 'q':continueelse:breakif __name__ == "__main__":main()