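Contents

- I. Asynchronous memory reclaim

- 1.1 Initializing asynchronous reclaim

- 1.2 Waking asynchronous reclaim

- 1.3 How asynchronous reclaim proceeds

- II. Synchronous memory reclaim

- III. shrink_node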

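When pages are requested, the page allocator first tries to allocate against the low watermark. If that fails, memory is slightly short: the allocator wakes the page reclaim thread (kswapd) of every memory node eligible for the allocation so that pages are reclaimed asynchronously, and then retries against the min watermark. If that fails too, memory is seriously short: the allocator compacts memory and retries against the min watermark. If the allocation still fails, memory is running out and pages that are not needed right now must be given back, so the allocator reclaims pages directly. Only after all of this does it fall back to measures such as the OOM killer. In other words, there are two kinds of reclaim: asynchronous reclaim and synchronous (direct) reclaim.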
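The escalation described above can be sketched as a small user-space model. This is only an illustration under simplifying assumptions: `toy_node`, `toy_alloc`, and the `TOY_*` steps are hypothetical names, a single `fragmented` flag stands in for the need to compact, and reclaim is modeled as instantly adding `reclaimable` pages to the free count.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical toy model; none of these names exist in the kernel. */
enum toy_step {
    TOY_LOW_WMARK,            /* fast path succeeded */
    TOY_MIN_WMARK,            /* succeeded after waking kswapd */
    TOY_AFTER_COMPACTION,     /* succeeded after compaction */
    TOY_AFTER_DIRECT_RECLAIM, /* succeeded after direct reclaim */
    TOY_OOM                   /* nothing helped */
};

struct toy_node {
    long free;          /* free pages */
    long wmark_min;     /* min watermark */
    long wmark_low;     /* low watermark */
    long reclaimable;   /* pages direct reclaim could free */
    bool fragmented;    /* assumption: blocks allocation until compacted */
    bool kswapd_woken;  /* set once asynchronous reclaim was requested */
};

static bool toy_can_alloc(const struct toy_node *n, long want, long wmark)
{
    return !n->fragmented && n->free - want >= wmark;
}

/* Walk the escalation ladder and report which step satisfied the request. */
static enum toy_step toy_alloc(struct toy_node *n, long want)
{
    assert(want > 0);

    if (toy_can_alloc(n, want, n->wmark_low)) {
        n->free -= want;
        return TOY_LOW_WMARK;
    }

    n->kswapd_woken = true;            /* asynchronous reclaim starts */
    if (toy_can_alloc(n, want, n->wmark_min)) {
        n->free -= want;
        return TOY_MIN_WMARK;
    }

    n->fragmented = false;             /* compaction */
    if (toy_can_alloc(n, want, n->wmark_min)) {
        n->free -= want;
        return TOY_AFTER_COMPACTION;
    }

    n->free += n->reclaimable;         /* direct (synchronous) reclaim */
    n->reclaimable = 0;
    if (toy_can_alloc(n, want, n->wmark_min)) {
        n->free -= want;
        return TOY_AFTER_DIRECT_RECLAIM;
    }

    return TOY_OOM;
}
```

Each later step is only reached when every cheaper step has failed, which is why direct reclaim is the expensive case for the caller.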

I. Asynchronous memory reclaim

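Each memory node has one page reclaim thread. When the free page count of every zone in the node is below the high watermark, the thread repeatedly tries to reclaim pages from that node.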
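As a rough illustration of that condition, the sketch below treats a node as balanced as soon as at least one zone has reached its high watermark, and keeps "reclaiming" until then. The names `toy_zone`, `toy_node_balanced`, and `toy_kswapd_rounds` are hypothetical, and adding a fixed `batch` of pages to each zone per round is an assumption made only to keep the model small.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical toy model of one zone. */
struct toy_zone {
    long free;        /* free pages in the zone */
    long wmark_high;  /* high watermark of the zone */
};

/* The node needs reclaim only while every zone is below its high watermark. */
static bool toy_node_balanced(const struct toy_zone *z, size_t n)
{
    for (size_t i = 0; i < n; i++)
        if (z[i].free >= z[i].wmark_high)
            return true;
    return false;
}

/* Reclaim `batch` pages into each zone per round; return the rounds needed. */
static int toy_kswapd_rounds(struct toy_zone *z, size_t n, long batch)
{
    int rounds = 0;

    assert(n > 0 && batch > 0);
    while (!toy_node_balanced(z, n)) {
        for (size_t i = 0; i < n; i++)
            z[i].free += batch;
        rounds++;
    }
    return rounds;
}
```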

1.1 Initializing asynchronous reclaim

kswapd_init performs the initialization:

    static int __init kswapd_init(void)
    {
        int nid;

        swap_setup();   /* set the global page_cluster from the amount of system memory */
        for_each_node_state(nid, N_MEMORY)  /* walk every node that has memory */
            kswapd_run(nid);                /* create the kswapd kernel thread */
        return 0;
    }

    int kswapd_run(int nid)
    {
        pg_data_t *pgdat = NODE_DATA(nid);
        int ret = 0;

        if (pgdat->kswapd)
            return 0;

        /* create the kernel thread kswapd%d */
        pgdat->kswapd = kthread_run(kswapd, pgdat, "kswapd%d", nid);
        if (IS_ERR(pgdat->kswapd)) {
            /* failure at boot is fatal */
            BUG_ON(system_state < SYSTEM_RUNNING);
            pr_err("Failed to start kswapd on node %d\n", nid);
            ret = PTR_ERR(pgdat->kswapd);
            pgdat->kswapd = NULL;
        }
        return ret;
    }

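During system initialization, kswapd_init is called and creates a kswapd kernel thread for each memory node. The thread runs the function kswapd, which is an infinite loop. Let's look at how kswapd runs: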

    static int kswapd(void *p)
    {
        unsigned int alloc_order, reclaim_order;
        unsigned int highest_zoneidx = MAX_NR_ZONES - 1;
        pg_data_t *pgdat = (pg_data_t*)p;
        struct task_struct *tsk = current;
        const struct cpumask *cpumask = cpumask_of_node(pgdat->node_id);

        if (!cpumask_empty(cpumask))
            set_cpus_allowed_ptr(tsk, cpumask);

        /*
         * Tell the memory management that we're a "memory allocator",
         * and that if we need more memory we should get access to it
         * regardless (see "__alloc_pages()"). "kswapd" should
         * never get caught in the normal page freeing logic.
         *
         * (Kswapd normally doesn't need memory anyway, but sometimes
         * you need a small amount of memory in order to be able to
         * page out something else, and this flag essentially protects
         * us from recursively trying to free more memory as we're
         * trying to free the first piece of memory in the first place).
         */
        tsk->flags |= PF_MEMALLOC | PF_SWAPWRITE | PF_KSWAPD;
        set_freezable();

        WRITE_ONCE(pgdat->kswapd_order, 0);
        WRITE_ONCE(pgdat->kswapd_highest_zoneidx, MAX_NR_ZONES);
        /* infinite loop */
        for ( ; ; ) {
            bool ret;

            alloc_order = reclaim_order = READ_ONCE(pgdat->kswapd_order);
            highest_zoneidx = kswapd_highest_zoneidx(pgdat, highest_zoneidx);

    kswapd_try_sleep:
            /* try to sleep and give up the CPU until woken */
            kswapd_try_to_sleep(pgdat, alloc_order, reclaim_order,
                                highest_zoneidx);

            /* Read the new order and highest_zoneidx */
            alloc_order = reclaim_order = READ_ONCE(pgdat->kswapd_order); /* order requested by the waker */
            highest_zoneidx = kswapd_highest_zoneidx(pgdat, highest_zoneidx); /* highest zone index that may be scanned and reclaimed */
            WRITE_ONCE(pgdat->kswapd_order, 0);
            WRITE_ONCE(pgdat->kswapd_highest_zoneidx, MAX_NR_ZONES);

            ret = try_to_freeze();      /* freeze this thread if the system is suspending */
            if (kthread_should_stop())  /* the thread has been asked to stop */
                break;                  /* leave the loop */

            if (ret)        /* just thawed after being frozen */
                continue;   /* start over, no balance_pgdat needed */

            trace_mm_vmscan_kswapd_wake(pgdat->node_id, highest_zoneidx,
                                        alloc_order);
            /* reclaim on the node to rebalance its watermarks; returns the order reclaim finished at */
            reclaim_order = balance_pgdat(pgdat, alloc_order, highest_zoneidx);
            if (reclaim_order < alloc_order)    /* reclaim fell back to a lower order than requested */
                goto kswapd_try_sleep;
        }

        tsk->flags &= ~(PF_MEMALLOC | PF_SWAPWRITE | PF_KSWAPD);

        return 0;
    }

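The kswapd flow is as follows:

- Enter the infinite for loop. Each iteration does the following:
  - Initialize alloc_order and highest_zoneidx.
  - Call kswapd_try_to_sleep to sleep until woken.
  - Re-read alloc_order and highest_zoneidx, then reset the per-node request fields.
  - Call try_to_freeze, which freezes this thread if the system is suspending.
  - Call kthread_should_stop to check whether the thread has been asked to stop. If so, leave the loop. If the thread was frozen and has just been thawed, restart the loop without calling balance_pgdat.
  - Call balance_pgdat to reclaim on the node and rebalance its watermarks. It returns the order at which reclaim finished. If that order is lower than alloc_order, jump back to the sleep step.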

1.2 Waking asynchronous reclaim

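The thread goes to sleep inside kswapd_try_to_sleep, and it resumes inside the same function when it is woken. To see how the wakeup works, we need to read this function: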

    static void kswapd_try_to_sleep(pg_data_t *pgdat, int alloc_order, int reclaim_order,
                                    unsigned int highest_zoneidx)
    {
        long remaining = 0;
        DEFINE_WAIT(wait);

        if (freezing(current) || kthread_should_stop())
            return;

        /* add wait to the kswapd_wait queue and mark the task TASK_INTERRUPTIBLE */
        prepare_to_wait(&pgdat->kswapd_wait, &wait, TASK_INTERRUPTIBLE);

        /* check whether kswapd may sleep */
        if (prepare_kswapd_sleep(pgdat, reclaim_order, highest_zoneidx)) {
            reset_isolation_suitable(pgdat);    /* reset markers used by compaction */

            /* wake the compaction thread */
            wakeup_kcompactd(pgdat, alloc_order, highest_zoneidx);

            remaining = schedule_timeout(HZ/10);    /* nap for up to 0.1 s */

            if (remaining) {    /* non-zero: woken before the timeout expired */
                /* kswapd_highest_zoneidx and kswapd_order must be updated */
                WRITE_ONCE(pgdat->kswapd_highest_zoneidx,
                           kswapd_highest_zoneidx(pgdat, highest_zoneidx));

                if (READ_ONCE(pgdat->kswapd_order) < reclaim_order)
                    WRITE_ONCE(pgdat->kswapd_order, reclaim_order);
            }

            finish_wait(&pgdat->kswapd_wait, &wait);    /* remove wait from kswapd_wait */
            /* queue wait on kswapd_wait again, TASK_INTERRUPTIBLE */
            prepare_to_wait(&pgdat->kswapd_wait, &wait, TASK_INTERRUPTIBLE);
        }

        /* the nap was not interrupted and kswapd may still sleep */
        if (!remaining &&
            prepare_kswapd_sleep(pgdat, reclaim_order, highest_zoneidx)) {
            trace_mm_vmscan_kswapd_sleep(pgdat->node_id);

            /* restore the normal per-CPU vmstat thresholds before sleeping */
            set_pgdat_percpu_threshold(pgdat, calculate_normal_threshold);

            if (!kthread_should_stop())     /* the thread has not been asked to stop */
                schedule();                 /* sleep until woken */

            /* awake again: switch to the tighter pressure thresholds */
            set_pgdat_percpu_threshold(pgdat, calculate_pressure_threshold);
        } else {
            if (remaining)  /* the nap was cut short by a wakeup */
                count_vm_event(KSWAPD_LOW_WMARK_HIT_QUICKLY);
            else            /* the nap completed but sleeping is not allowed */
                count_vm_event(KSWAPD_HIGH_WMARK_HIT_QUICKLY);
        }
        finish_wait(&pgdat->kswapd_wait, &wait);    /* remove wait from kswapd_wait */
    }

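The kswapd_try_to_sleep flow is as follows:

1. Call prepare_to_wait to add wait to the kswapd_wait wait queue and set the task state to TASK_INTERRUPTIBLE.
2. Call prepare_kswapd_sleep to decide whether kswapd may sleep. If it may, go to step 3; otherwise go to step 5.
3. Call reset_isolation_suitable to reset some markers used by compaction, and call wakeup_kcompactd to wake the compaction thread.
4. Call schedule_timeout to nap for up to 0.1 s. If the nap is cut short by a wakeup, update kswapd_highest_zoneidx and kswapd_order. Then remove wait from the kswapd_wait queue and add it again.
5. If the nap was not interrupted and prepare_kswapd_sleep still allows sleeping, call set_pgdat_percpu_threshold to restore the normal per-CPU vmstat thresholds and call schedule to sleep. Otherwise, increment KSWAPD_LOW_WMARK_HIT_QUICKLY (the nap was interrupted) or KSWAPD_HIGH_WMARK_HIT_QUICKLY (sleeping is not allowed) and skip the sleep.
6. After a wakeup, execution resumes at this point. Call finish_wait to remove wait from the kswapd_wait wait queue.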

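From this we can see that waking the kswapd_wait wait queue is all it takes for the thread to wake up and continue running. In the kernel, the page allocator does this through wakeup_kswapd.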
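The two-stage sleep decision in kswapd_try_to_sleep can be reduced to a pure function, which makes the three outcomes easier to see. This is a sketch only: `toy_try_to_sleep` and the `TOY_*` values are hypothetical, and the three boolean inputs stand in for the first prepare_kswapd_sleep check, whether the 0.1 s nap was interrupted, and the second prepare_kswapd_sleep check.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical outcome labels; the last two mirror the vm event names. */
enum toy_sleep_result {
    TOY_FULL_SLEEP,              /* schedule() is called */
    TOY_LOW_WMARK_HIT_QUICKLY,   /* the nap was interrupted */
    TOY_HIGH_WMARK_HIT_QUICKLY   /* sleeping is not allowed */
};

/*
 * may_sleep_before: result of the first sleep check
 * woken_early:      the short nap returned before its timeout
 * may_sleep_after:  result of the second sleep check
 */
static enum toy_sleep_result toy_try_to_sleep(bool may_sleep_before,
                                              bool woken_early,
                                              bool may_sleep_after)
{
    bool remaining = false;

    /* The short nap only happens if the first check allows it. */
    if (may_sleep_before)
        remaining = woken_early;

    /* A full sleep needs an uninterrupted nap and a passing second check. */
    if (!remaining && may_sleep_after)
        return TOY_FULL_SLEEP;

    return remaining ? TOY_LOW_WMARK_HIT_QUICKLY
                     : TOY_HIGH_WMARK_HIT_QUICKLY;
}
```

The short nap acts as a probation period: only a node that stays quiet for the whole nap lets the thread commit to an open-ended sleep.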

1.3 How asynchronous reclaim proceeds

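As we have seen, kswapd reclaims a node by calling balance_pgdat. balance_pgdat calls kswapd_shrink_node, and kswapd_shrink_node calls shrink_node to do the actual reclaim. There is a lot of code on this path and much of it I do not understand yet, so the most important part, shrink_node, is left for later.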

II. Synchronous memory reclaim

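Direct reclaim targets every zone in the fallback zone list (zonelist) that is eligible for the allocation. In the slow path, __alloc_pages_direct_reclaim is called to reclaim pages from the memory nodes those zones belong to. __alloc_pages_direct_reclaim: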

    static inline struct page *
    __alloc_pages_direct_reclaim(gfp_t gfp_mask, unsigned int order,
                                 unsigned int alloc_flags, const struct alloc_context *ac,
                                 unsigned long *did_some_progress)
    {
        struct page *page = NULL;
        bool drained = false;

        /* synchronous direct page reclaim */
        *did_some_progress = __perform_reclaim(gfp_mask, order, ac);
        if (unlikely(!(*did_some_progress)))
            return NULL;

    retry:
        page = get_page_from_freelist(gfp_mask, order, alloc_flags, ac);    /* try the allocation */

        /*
         * If an allocation failed after direct reclaim, it could be because
         * pages are pinned on the per-cpu lists or in high alloc reserves.
         * Shrink them and try again
         */
        if (!page && !drained) {    /* allocation failed and no retry yet */
            /* convert highatomic reserved pageblocks back to the requested migrate type */
            unreserve_highatomic_pageblock(ac, false);
            drain_all_pages(NULL);  /* return per-cpu (pcp) pages to the buddy system */
            drained = true;
            goto retry;             /* try once more */
        }

        return page;
    }

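The __alloc_pages_direct_reclaim flow is as follows:

1. Call __perform_reclaim to do synchronous direct reclaim. If it makes no progress, return NULL.
2. Call get_page_from_freelist to attempt the allocation.
3. If the allocation fails and no retry has happened yet, call unreserve_highatomic_pageblock to convert highatomic reserved pageblocks back to the requested migrate type, call drain_all_pages to return the per-cpu (pcp) pages to the buddy system, and go back to step 2 for one more attempt.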
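The reclaim, allocate, drain, retry-once pattern can be sketched in user space as follows. The model is hypothetical: `toy_state` and its functions are invented for illustration, and it assumes that freshly reclaimed pages first land on a per-CPU list, which is what makes the single drain and retry worthwhile in this toy.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical toy state; not a kernel structure. */
struct toy_state {
    long buddy_free;   /* pages the allocator can hand out directly */
    long pcp_pages;    /* pages parked on per-CPU lists */
    long reclaimable;  /* pages reclaim is able to free */
    int  drains;       /* how many times the per-CPU lists were drained */
};

/* Assumption: reclaimed pages end up on the per-CPU lists first. */
static long toy_perform_reclaim(struct toy_state *s)
{
    long got = s->reclaimable;

    s->reclaimable = 0;
    s->pcp_pages += got;
    return got;
}

static bool toy_direct_reclaim_alloc(struct toy_state *s, long want)
{
    bool drained = false;

    assert(want > 0);
    if (toy_perform_reclaim(s) == 0)
        return false;               /* no progress: give up immediately */

retry:
    if (s->buddy_free >= want) {
        s->buddy_free -= want;
        return true;
    }
    if (!drained) {                 /* drain once, then try exactly once more */
        s->buddy_free += s->pcp_pages;
        s->pcp_pages = 0;
        s->drains++;
        drained = true;
        goto retry;
    }
    return false;
}
```

The `drained` flag bounds the work: draining is expensive, so it happens at most once per call.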

__perform_reclaim:

    static unsigned long
    __perform_reclaim(gfp_t gfp_mask, unsigned int order,
                      const struct alloc_context *ac)
    {
        unsigned int noreclaim_flag;
        unsigned long pflags, progress;

        cond_resched();     /* give up the CPU if a reschedule is pending */

        /* We now go into synchronous reclaim */
        cpuset_memory_pressure_bump();
        psi_memstall_enter(&pflags);    /* no-op when CONFIG_PSI is disabled */
        fs_reclaim_acquire(gfp_mask);   /* no-op when CONFIG_LOCKDEP is disabled */
        /* set PF_MEMALLOC in current->flags: reclaim is in progress */
        noreclaim_flag = memalloc_noreclaim_save();

        /* try to free pages */
        progress = try_to_free_pages(ac->zonelist, order, gfp_mask,
                                     ac->nodemask);

        memalloc_noreclaim_restore(noreclaim_flag);     /* restore current->flags: reclaim is done */
        fs_reclaim_release(gfp_mask);   /* no-op when CONFIG_LOCKDEP is disabled */
        psi_memstall_leave(&pflags);    /* no-op when CONFIG_PSI is disabled */

        cond_resched();     /* give up the CPU if a reschedule is pending */

        return progress;
    }

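The __perform_reclaim flow is as follows:

- Call memalloc_noreclaim_save to set PF_MEMALLOC in current->flags, marking that reclaim is in progress.

- Call try_to_free_pages to try to free pages.

- Call memalloc_noreclaim_restore to restore current->flags, marking that reclaim has finished.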
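The save/restore pair is worth a closer look, because it is not a plain set and clear. A minimal user-space sketch of the pattern is shown below; `toy_task`, `TOY_PF_MEMALLOC`, and the two functions are hypothetical stand-ins, and the flag value is illustrative only.

```c
#include <assert.h>

/* Illustrative bit value for this sketch. */
#define TOY_PF_MEMALLOC 0x0800u

/* Hypothetical stand-in for the per-task flags word. */
struct toy_task {
    unsigned int flags;
};

/* Set the bit and return its previous state (0 or TOY_PF_MEMALLOC). */
static unsigned int toy_noreclaim_save(struct toy_task *t)
{
    unsigned int old = t->flags & TOY_PF_MEMALLOC;

    t->flags |= TOY_PF_MEMALLOC;
    return old;
}

/* Put the bit back to the saved state and leave all other bits alone. */
static void toy_noreclaim_restore(struct toy_task *t, unsigned int old)
{
    assert((old & ~TOY_PF_MEMALLOC) == 0);
    t->flags = (t->flags & ~TOY_PF_MEMALLOC) | old;
}
```

Returning the previous bit makes the pair safe to nest: an inner restore does not clear a flag that an outer caller (or a thread like kswapd that keeps it set permanently) still relies on.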

Next, let's see how try_to_free_pages frees memory:

    unsigned long try_to_free_pages(struct zonelist *zonelist, int order,
                                    gfp_t gfp_mask, nodemask_t *nodemask)
    {
        unsigned long nr_reclaimed;
        struct scan_control sc = {      /* initialize the scan control */
            .nr_to_reclaim = SWAP_CLUSTER_MAX,
            .gfp_mask = current_gfp_context(gfp_mask),
            .reclaim_idx = gfp_zone(gfp_mask),
            .order = order,
            .nodemask = nodemask,
            .priority = DEF_PRIORITY,
            .may_writepage = !laptop_mode,
            .may_unmap = 1,
            .may_swap = 1,
        };

        /*
         * scan_control uses s8 fields for order, priority, and reclaim_idx.
         * Confirm they are large enough for max values.
         */
        BUILD_BUG_ON(MAX_ORDER > S8_MAX);
        BUILD_BUG_ON(DEF_PRIORITY > S8_MAX);
        BUILD_BUG_ON(MAX_NR_ZONES > S8_MAX);

        /*
         * If a fatal signal was delivered while throttled, do not enter
         * direct reclaim. Return 1 so the allocator does not OOM here.
         */
        if (throttle_direct_reclaim(sc.gfp_mask, zonelist, nodemask))
            return 1;

        set_task_reclaim_state(current, &sc.reclaim_state);     /* attach the reclaim state to current */
        trace_mm_vmscan_direct_reclaim_begin(order, sc.gfp_mask);

        nr_reclaimed = do_try_to_free_pages(zonelist, &sc);     /* the actual attempt to free pages */

        trace_mm_vmscan_direct_reclaim_end(nr_reclaimed);
        set_task_reclaim_state(current, NULL);                  /* detach the reclaim state */

        return nr_reclaimed;
    }

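The try_to_free_pages flow is as follows:

- Initialize the scan control structure sc.

- If a fatal signal was delivered while the task was throttled, skip direct reclaim and return 1.

- Call set_task_reclaim_state to point current->reclaim_state at sc.reclaim_state, marking that the task is reclaiming memory.

- Call do_try_to_free_pages to actually try to free pages.

- Call set_task_reclaim_state with NULL to clear current->reclaim_state, marking that reclaim has finished.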

do_try_to_free_pages is the function that really frees pages. Let's take a look:

    static unsigned long do_try_to_free_pages(struct zonelist *zonelist,
                                              struct scan_control *sc)
    {
        int initial_priority = sc->priority;
        pg_data_t *last_pgdat;
        struct zoneref *z;
        struct zone *zone;
    retry:
        delayacct_freepages_start();

        /* global reclaim (not triggered by a cgroup limit): count a direct reclaim stall */
        if (!cgroup_reclaim(sc))
            __count_zid_vm_events(ALLOCSTALL, sc->reclaim_idx, 1);

        do {
            /* report memory pressure based on the reclaim priority */
            vmpressure_prio(sc->gfp_mask, sc->target_mem_cgroup,
                            sc->priority);
            sc->nr_scanned = 0;             /* reset the scan counter */
            shrink_zones(zonelist, sc);     /* direct reclaim from the zones */

            /* enough pages have been reclaimed */
            if (sc->nr_reclaimed >= sc->nr_to_reclaim)
                break;

            if (sc->compaction_ready)       /* compaction can take over */
                break;

            /* the priority has dropped a lot, reclaim is struggling: allow writeback */
            if (sc->priority < DEF_PRIORITY - 2)
                sc->may_writepage = 1;
        } while (--sc->priority >= 0);

        last_pgdat = NULL;
        /* walk every zone in the zonelist, handling each node once */
        for_each_zone_zonelist_nodemask(zone, z, zonelist, sc->reclaim_idx,
                                        sc->nodemask) {
            if (zone->zone_pgdat == last_pgdat)
                continue;
            last_pgdat = zone->zone_pgdat;

            snapshot_refaults(sc->target_mem_cgroup, zone->zone_pgdat);

            if (cgroup_reclaim(sc)) {
                struct lruvec *lruvec;

                /* get the LRU vector of the memcg on this node */
                lruvec = mem_cgroup_lruvec(sc->target_mem_cgroup,
                                           zone->zone_pgdat);
                clear_bit(LRUVEC_CONGESTED, &lruvec->flags);    /* clear the congested flag of the lruvec */
            }
        }

        delayacct_freepages_end();      /* finish the delay accounting timestamps and counters */

        if (sc->nr_reclaimed)           /* pages were reclaimed */
            return sc->nr_reclaimed;    /* return how many */

        /* nothing reclaimed, but compaction is ready: report progress so the caller tries it */
        if (sc->compaction_ready)
            return 1;

        /*
         * We make inactive:active ratio decisions based on the node's
         * composition of memory, but a restrictive reclaim_idx or a
         * memory.low cgroup setting can exempt large amounts of
         * memory from reclaim. Neither of which are very common, so
         * instead of doing costly eligibility calculations of the
         * entire cgroup subtree up front, we assume the estimates are
         * good, and retry with forcible deactivation if that fails.
         */
        if (sc->skipped_deactivate) {
            sc->priority = initial_priority;
            sc->force_deactivate = 1;
            sc->skipped_deactivate = 0;
            goto retry;
        }

        /* Untapped cgroup reserves? Don't OOM, retry. */
        if (sc->memcg_low_skipped) {
            sc->priority = initial_priority;
            sc->force_deactivate = 0;
            sc->memcg_low_reclaim = 1;
            sc->memcg_low_skipped = 0;
            goto retry;
        }

        return 0;
    }

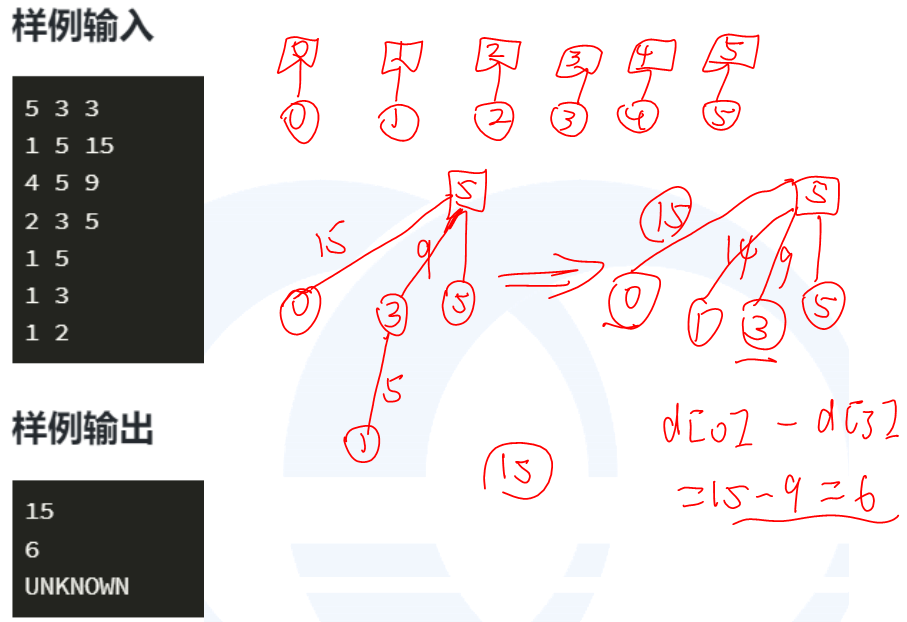

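The do_try_to_free_pages flow is as follows:

- For global reclaim (reclaim not triggered by a cgroup limit), increment the ALLOCSTALL counter, which counts direct reclaim stalls.
- Loop starting from sc->priority, decrementing the priority on every pass. In the loop body:
  - Call vmpressure_prio to report memory pressure based on the reclaim priority.
  - Call shrink_zones to reclaim directly from the zones.
  - If at least as many pages as requested have been reclaimed, or compaction is ready, leave the loop.
  - If the priority has dropped below DEF_PRIORITY - 2, memory is getting hard to reclaim, so set the flag that allows writeback.
- Use the for_each_zone_zonelist_nodemask macro to walk the zones. For each node, take a refault snapshot and, for cgroup reclaim, clear the congested flag of the lruvec.
- Call delayacct_freepages_end to finish the delay accounting timestamps and counters.
- If pages were reclaimed, return how many.
- If nothing was reclaimed but compaction is ready, return 1 so that the caller tries compaction.
- Otherwise, if deactivation or memory.low protected cgroups were skipped, reset the priority and retry with those restrictions lifted. If not, return 0.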
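The priority loop is easier to follow with numbers. The sketch below assumes that each pass scans `lru_size >> priority` pages, that a fixed percentage of scanned pages is reclaimed, and that DEF_PRIORITY is 12. All `toy_` names are hypothetical and the percentage parameter exists only in this model.

```c
#include <assert.h>
#include <stdbool.h>

#define TOY_DEF_PRIORITY 12  /* assumed starting priority */

/* Hypothetical result of one simulated reclaim run. */
struct toy_result {
    long reclaimed;       /* pages reclaimed in total */
    int  final_priority;  /* priority of the last pass that ran */
    bool may_writepage;   /* set once reclaim is struggling */
};

/* Scan a growing share of the LRU until enough pages are reclaimed. */
static struct toy_result toy_do_try_to_free(long lru_size, int reclaim_pct,
                                            long nr_to_reclaim)
{
    struct toy_result res = { 0, TOY_DEF_PRIORITY, false };
    int prio = TOY_DEF_PRIORITY;

    assert(lru_size >= 0 && reclaim_pct >= 0 && reclaim_pct <= 100);
    do {
        long scan = lru_size >> prio;          /* lower priority, bigger scan */
        long got = scan * reclaim_pct / 100;   /* toy assumption */

        res.reclaimed += got;
        lru_size -= got;
        res.final_priority = prio;

        if (res.reclaimed >= nr_to_reclaim)
            break;
        if (prio < TOY_DEF_PRIORITY - 2)       /* struggling: allow writeback */
            res.may_writepage = true;
    } while (--prio >= 0);

    return res;
}
```

With a large LRU the first pass at priority 12 already yields the 32 pages a direct reclaimer asks for, while a small LRU forces the loop several priorities lower and switches on writeback along the way.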

shrink_zones is the core function of direct reclaim:

    static void shrink_zones(struct zonelist *zonelist, struct scan_control *sc)
    {
        struct zoneref *z;
        struct zone *zone;
        unsigned long nr_soft_reclaimed;
        unsigned long nr_soft_scanned;
        gfp_t orig_mask;
        pg_data_t *last_pgdat = NULL;

        /*
         * If the number of buffer_heads in the machine exceeds the maximum
         * allowed level, force direct reclaim to scan the highmem zone as
         * highmem pages could be pinning lowmem pages storing buffer_heads
         */
        orig_mask = sc->gfp_mask;
        if (buffer_heads_over_limit) {
            sc->gfp_mask |= __GFP_HIGHMEM;
            sc->reclaim_idx = gfp_zone(sc->gfp_mask);
        }

        /* walk every zone in the zonelist */
        for_each_zone_zonelist_nodemask(zone, z, zonelist,
                                        sc->reclaim_idx, sc->nodemask) {
            /*
             * Take care memory controller reclaiming has small influence
             * to global LRU.
             */
            if (!cgroup_reclaim(sc)) {      /* global reclaim, not triggered by a cgroup limit */
                if (!cpuset_zone_allowed(zone,
                                         GFP_KERNEL | __GFP_HARDWALL))
                    continue;

                /*
                 * If we already have plenty of memory free for
                 * compaction in this zone, don't free any more.
                 * Even though compaction is invoked for any
                 * non-zero order, only frequent costly order
                 * reclamation is disruptive enough to become a
                 * noticeable problem, like transparent huge
                 * page allocations.
                 */
                if (IS_ENABLED(CONFIG_COMPACTION) &&            /* compaction is built in */
                    sc->order > PAGE_ALLOC_COSTLY_ORDER &&      /* costly high-order request */
                    compaction_ready(zone, sc)) {               /* the zone is ready for compaction */
                    sc->compaction_ready = true;
                    continue;   /* enough free memory for compaction, do not free more */
                }

                /*
                 * Shrink each node in the zonelist once. If the
                 * zonelist is ordered by zone (not the default) then a
                 * node may be shrunk multiple times but in that case
                 * the user prefers lower zones being preserved.
                 */
                if (zone->zone_pgdat == last_pgdat)     /* this node was already handled */
                    continue;                           /* do not shrink it again */

                /*
                 * This steals pages from memory cgroups over softlimit
                 * and returns the number of reclaimed pages and
                 * scanned pages. This works for global memory pressure
                 * and balancing, not for a memcg's limit.
                 */
                nr_soft_scanned = 0;
                nr_soft_reclaimed = mem_cgroup_soft_limit_reclaim(zone->zone_pgdat,
                                        sc->order, sc->gfp_mask,
                                        &nr_soft_scanned);
                sc->nr_reclaimed += nr_soft_reclaimed;
                sc->nr_scanned += nr_soft_scanned;
                /* need some check for avoid more shrink_zone() */
            }

            /* See comment about same check for global reclaim above */
            if (zone->zone_pgdat == last_pgdat)
                continue;
            last_pgdat = zone->zone_pgdat;
            shrink_node(zone->zone_pgdat, sc);  /* reclaim pages from the memory node */
        }

        /*
         * Restore to original mask to avoid the impact on the caller if we
         * promoted it to __GFP_HIGHMEM.
         */
        sc->gfp_mask = orig_mask;
    }

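shrink_zones essentially does one thing: it walks the zones in the zonelist and calls shrink_node once for each memory node those zones belong to, reclaiming pages from the node. For global reclaim it also skips zones that are already ready for compaction and runs memcg soft limit reclaim first.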
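The "once per node" behaviour comes from the last_pgdat check. A minimal sketch of that deduplication, using plain node ids instead of zone structures, could look like this. `toy_shrink_zones` is a hypothetical name, and recording the node id in an output array stands in for the call to shrink_node.

```c
#include <assert.h>
#include <stddef.h>

/*
 * zone_node[i] is the node id that zone i of the zonelist belongs to.
 * Each run of consecutive zones from the same node is shrunk once.
 * Returns the number of (simulated) shrink_node calls.
 */
static size_t toy_shrink_zones(const int *zone_node, size_t nr_zones,
                               int *shrunk, size_t cap)
{
    int last_node = -1;     /* no node handled yet */
    size_t n = 0;

    for (size_t i = 0; i < nr_zones; i++) {
        if (zone_node[i] == last_node)
            continue;               /* same node as the previous zone */
        last_node = zone_node[i];
        assert(n < cap);
        shrunk[n++] = last_node;    /* stands in for shrink_node() */
    }
    return n;
}
```

Because only the previously handled node is remembered, a zonelist ordered by node shrinks every node exactly once, while a zonelist that interleaves nodes can shrink the same node more than once, as the kernel comment in the listing points out.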

III. shrink_node

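This part is quite scattered and I am not sure how to write it up yet. It touches a lot of ground, including reclaiming inactive pages from the LRU lists, reclaiming slab memory, swapping pages in and out, and moving inactive LRU pages to the active list, so I will leave it for later.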