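Source build article -> Building DolphinScheduler 1.3.9 from source, with the Hadoop and Hive component versions changed for compatibility

DS official documentation -> DolphinScheduler (DS) cluster deployment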

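0. Prerequisites

1. Make sure the cluster machines trust each other over passwordless SSH.

2. Make sure /etc/hosts is configured to map each IP address to its hostname.

3. Make sure the MySQL service, the Hadoop cluster, and the ZooKeeper cluster are all running.

4. Make sure the DolphinScheduler database has been created in MySQL: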

mysql> CREATE DATABASE dolphinscheduler DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

mysql> GRANT ALL PRIVILEGES ON dolphinscheduler.* TO 'dolphinscheduler'@'%' IDENTIFIED BY 'dolphinscheduler';

mysql> flush privileges;
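Prerequisites 1 and 2 can be checked from a script before going any further. The sketch below is one possible way to do that and is not part of DolphinScheduler: `missing_hosts` and `check_passwordless_ssh` are hypothetical helper names, and the hostnames in the usage note are the ones this article uses later on.

```shell
# Hypothetical helpers for checking prerequisites 1 and 2.
# They are not shipped with DolphinScheduler.

# missing_hosts <hosts-file> <hostname>...
# Print every hostname that has no entry in the given hosts file.
missing_hosts() {
  local hosts_file="$1" host
  shift
  for host in "$@"; do
    if ! awk -v h="$host" '
        $1 ~ /^#/ { next }                 # skip comment lines
        {
          for (i = 2; i <= NF; i++) {
            if ($i ~ /^#/) break           # stop at an inline comment
            if ($i == h) found = 1
          }
        }
        END { exit (found ? 0 : 1) }' "$hosts_file"; then
      echo "$host"
    fi
  done
}

# check_passwordless_ssh <hostname>...
# Print every host that cannot be reached without a password prompt.
check_passwordless_ssh() {
  local host
  for host in "$@"; do
    # BatchMode=yes makes ssh fail instead of asking for a password.
    ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true \
      </dev/null >/dev/null 2>&1 || echo "$host"
  done
}
```

Typical use would be `missing_hosts /etc/hosts hadoop102 hadoop103 hadoop104` followed by `check_passwordless_ssh hadoop102 hadoop103 hadoop104`. In both cases empty output means nothing was found to be missing.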

1. Extract the DolphinScheduler bin package that was built from source

tar -zxvf /opt/software/apache-dolphinscheduler-1.3.9-bin.tar.gz -C /opt/module/

2. Enter the extracted directory

cd /opt/module/apache-dolphinscheduler-1.3.9-bin

3. Start configuring

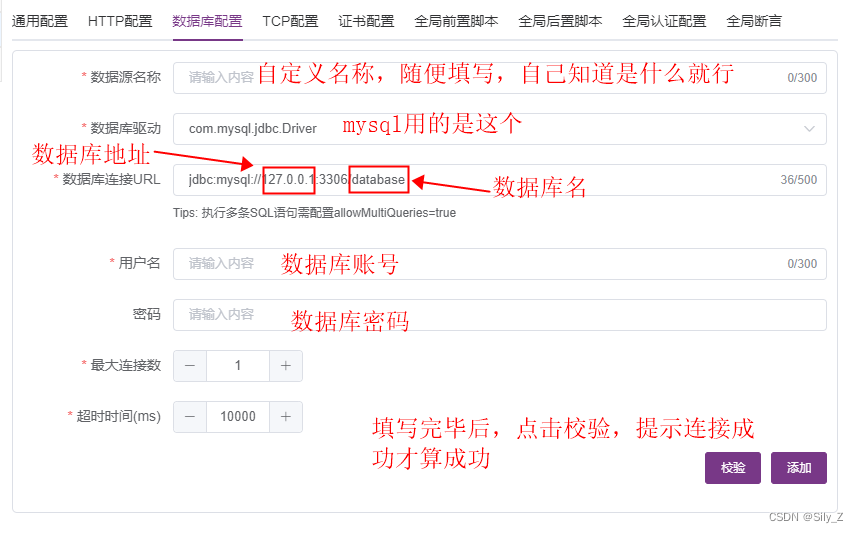

3.1 Data source

vim /opt/module/apache-dolphinscheduler-1.3.9-bin/conf/datasource.properties

# mysql example

spring.datasource.driver-class-name=com.mysql.jdbc.Driver

spring.datasource.url=jdbc:mysql://hadoop102:3306/dolphinscheduler?characterEncoding=UTF-8&allowMultiQueries=true

spring.datasource.username=dolphinscheduler

spring.datasource.password=dolphinscheduler
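When the same settings have to be applied on more than one occasion, the vim step can be replaced by a small script. The following is a minimal sketch, assuming a plain `key=value` properties file; `set_prop` is a hypothetical helper, not a DolphinScheduler tool. It uses awk rather than sed because the JDBC URL contains `&`, which sed treats specially in replacement text.

```shell
# set_prop <file> <key> <value>
# Replace the value of an existing key, or append the key if it is absent.
# Hypothetical helper; commented-out keys (lines starting with #) are left alone.
set_prop() {
  local file="$1" key="$2" value="$3" tmp
  tmp="$(mktemp)"
  # Pass key and value through the environment so awk does not
  # interpret backslashes or other special characters in them.
  KEY="$key" VALUE="$value" awk '
    BEGIN { k = ENVIRON["KEY"]; v = ENVIRON["VALUE"] }
    index($0, k "=") == 1 { print k "=" v; done = 1; next }
    { print }
    END { if (!done) print k "=" v }
  ' "$file" > "$tmp" && cat "$tmp" > "$file"
  rm -f "$tmp"
}
```

For example, `set_prop conf/datasource.properties spring.datasource.username dolphinscheduler` rewrites only that one line and leaves the rest of the file as it was.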

3.2 Environment variables

vim /opt/module/apache-dolphinscheduler-1.3.9-bin/conf/env/dolphinscheduler_env.sh

# JAVA_HOME

export JAVA_HOME=/opt/module/jdk1.8.0_212

# HADOOP_HOME

export HADOOP_HOME=/opt/module/hadoop-3.1.3

export HADOOP_CONF_DIR=/opt/module/hadoop-3.1.3/etc/hadoop

# DATAX_HOME

export DATAX_HOME=/opt/module/datax

# HIVE_HOME

export HIVE_HOME=/opt/module/apache-hive-3.1.2-bin

# SPARK_HOME

export SPARK_HOME=/opt/module/spark-3.0.0-bin-hadoop3.2
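A stray character in one of these paths is easy to miss and only shows up later as a failed task. As a rough sanity check, the env file can be sourced in a subshell and each directory tested for existence. `check_env_dirs` below is a hypothetical helper, and the variable list is simply the set exported in this article.

```shell
# check_env_dirs <env-file>
# Source the env file in a subshell and print NAME=value for every
# variable whose directory does not exist. Hypothetical helper.
check_env_dirs() {
  local env_file="$1"
  (
    . "$env_file"
    for name in JAVA_HOME HADOOP_HOME HADOOP_CONF_DIR DATAX_HOME HIVE_HOME SPARK_HOME; do
      # Indirect lookup: copy the value of the variable named in $name into $dir.
      eval "dir=\${$name:-}"
      [ -d "$dir" ] || echo "$name=$dir"
    done
  )
}
```

Running `check_env_dirs /opt/module/apache-dolphinscheduler-1.3.9-bin/conf/env/dolphinscheduler_env.sh` on each node should print nothing if every directory is in place. Components that are not installed on a node (DataX, for instance) will be listed, which may be acceptable depending on the tasks that node runs.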

3.3 Installation configuration file

vim /opt/module/apache-dolphinscheduler-1.3.9-bin/conf/config/install_config.conf

# postgresql or mysql

dbtype="mysql"

# db config

# db address and port

dbhost="hadoop102:3306"

# db username

username="dolphinscheduler"

# database name

dbname="dolphinscheduler"

# db password

# NOTICE: if there are special characters, please use the \ to escape, for example, `[` escape to `\[`

password="dolphinscheduler"

# zk cluster

zkQuorum="hadoop102:2181,hadoop103:2181,hadoop104:2181"

# Note: the target installation path for dolphinscheduler; do not set it to the current path (pwd)

installPath="/opt/module/dolphinscheduler"

# deployment user

# Note: the deployment user needs sudo privileges and permission to operate hdfs. If hdfs is enabled, the root directory must be created by that user

deployUser="zhangsan"

# alert config

# mail server host

mailServerHost="smtp.exmail.qq.com"

# mail server port

# note: Different protocols and encryption methods correspond to different ports, when SSL/TLS is enabled, make sure the port is correct.

mailServerPort="25"

# sender

mailSender="xxxxxxxxxx"

# user

mailUser="xxxxxxxxxx"

# sender password

# note: The mail.passwd is email service authorization code, not the email login password.

mailPassword="xxxxxxxxxx"

# TLS mail protocol support

starttlsEnable="true"

# SSL mail protocol support

# only one of TLS and SSL can be in the true state.

sslEnable="false"

# note: sslTrust is the same as mailServerHost

sslTrust="smtp.exmail.qq.com"

# user data local directory path, please make sure the directory exists and have read write permissions

dataBasedirPath="/tmp/dolphinscheduler"

# resource storage type: HDFS, S3, NONE

resourceStorageType="HDFS"

# resource store path on HDFS/S3; resource files are stored under this path. Make sure the directory exists on hdfs and has read/write permissions. "/dolphinscheduler" is recommended

resourceUploadPath="/dolphinscheduler"

# if resourceStorageType is HDFS, set defaultFS to the namenode address; for HA, put core-site.xml and hdfs-site.xml in the conf directory.

# if S3, set the S3 address, for example: s3a://dolphinscheduler

# Note: for S3, be sure to create the root directory /dolphinscheduler

defaultFS="hdfs://hadoop102:8020"

# if resourceStorageType is S3, the following three settings are required, otherwise please ignore

s3Endpoint="http://192.168.xx.xx:9010"

s3AccessKey="xxxxxxxxxx"

s3SecretKey="xxxxxxxxxx"

# resourcemanager port, the default value is 8088 if not specified

resourceManagerHttpAddressPort="8088"

# if resourcemanager HA is enabled, please set the HA IPs; if resourcemanager is single, keep this value empty

yarnHaIps="" #"192.168.xx.xx,192.168.xx.xx"

# if resourcemanager HA is enabled or not use resourcemanager, please keep the default value; If resourcemanager is single, you only need to replace ds1 to actual resourcemanager hostname

singleYarnIp="hadoop103"

# who have permissions to create directory under HDFS/S3 root path

# Note: if kerberos is enabled, please config hdfsRootUser=

hdfsRootUser="zhangsan"

# kerberos config

# whether kerberos starts, if kerberos starts, following four items need to config, otherwise please ignore

kerberosStartUp="false"

# kdc krb5 config file path

krb5ConfPath="$installPath/conf/krb5.conf"

# keytab username

keytabUserName="hdfs-mycluster@ESZ.COM"

# username keytab path

keytabPath="$installPath/conf/hdfs.headless.keytab"

# kerberos expire time, the unit is hour

kerberosExpireTime="2"

# api server port

apiServerPort="12345"

# install hosts

# Note: install the scheduled hostname list. If it is pseudo-distributed, just write a pseudo-distributed hostname

ips="hadoop102,hadoop103,hadoop104"

# ssh port, default 22

# Note: if ssh port is not default, modify here

sshPort="22"

# run master machine

# Note: list of hosts hostname for deploying master

masters="hadoop102"

#masters="hadoop103"

# run worker machine

# note: need to write the worker group name of each worker, the default value is "default"

#workers="hadoop102:default,hadoop103:default,hadoop104:default"

workers="hadoop103:default,hadoop104:default"

# run alert machine

# note: list of machine hostnames for deploying alert server

alertServer=""

# run api machine

# note: list of machine hostnames for deploying api server

apiServers="hadoop102"
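The role lists (masters, workers, alertServer, apiServers) and the `ips` list are maintained by hand, so they can drift apart. The sketch below cross-checks them. It rests on two assumptions: that install_config.conf remains valid shell syntax so it can be sourced, and that only the hosts in `ips` receive the installation, so a role host missing from `ips` is probably a mistake. `hosts_not_in_ips` is a hypothetical helper name.

```shell
# hosts_not_in_ips <install_config.conf>
# Print every host that is assigned a role but is not listed in ips.
# Hypothetical helper; sources the config in a subshell to read its variables.
hosts_not_in_ips() {
  (
    . "$1"
    # Role lists are comma-separated; worker entries carry a ":group" suffix.
    for entry in $(echo "$masters,$workers,$alertServer,$apiServers" | tr ',' ' '); do
      host="${entry%%:*}"
      case ",$ips," in
        *",$host,"*) ;;            # host is present in ips
        *) echo "$host" ;;
      esac
    done | sort -u
  )
}
```

With the configuration shown above, every role host is one of hadoop102, hadoop103, or hadoop104, all of which appear in `ips`, so the function should print nothing.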

Note on the YARN settings:

- If YARN runs in HA mode, set the HA addresses and leave the single-node setting empty.

- If YARN runs a single ResourceManager, leave the HA setting empty.

# if resourcemanager HA is enabled, please set the HA IPs; if resourcemanager is single, keep this value empty

yarnHaIps="" #"192.168.xx.xx,192.168.xx.xx"

# if resourcemanager HA is enabled or not use resourcemanager, please keep the default value; If resourcemanager is single, you only need to replace ds1 to actual resourcemanager hostname

singleYarnIp="hadoop103"

Example 1 (YARN HA):

yarnHaIps="hadoop102,hadoop103"

singleYarnIp=""

Example 2 (single YARN ResourceManager):

yarnHaIps=""

singleYarnIp="hadoop103"
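The rule in the two examples (exactly one of the two settings is filled in) can be checked mechanically before installing. A minimal sketch, assuming install_config.conf can be sourced as shell; `yarn_mode` is a hypothetical helper, and the labels it prints are chosen here for illustration only.

```shell
# yarn_mode <install_config.conf>
# Print which YARN layout the config describes:
#   ha        yarnHaIps set, singleYarnIp empty
#   single    singleYarnIp set, yarnHaIps empty
#   conflict  both set
#   none      both empty
# Hypothetical helper; reads the two variables by sourcing the file in a subshell.
yarn_mode() {
  (
    yarnHaIps=""
    singleYarnIp=""
    . "$1"
    if [ -n "$yarnHaIps" ] && [ -n "$singleYarnIp" ]; then
      echo conflict
    elif [ -n "$yarnHaIps" ]; then
      echo ha
    elif [ -n "$singleYarnIp" ]; then
      echo single
    else
      echo none
    fi
  )
}
```

A result of `conflict` means both values are filled in, which is the case the note above warns against.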

4. Run the installation

sh /opt/module/apache-dolphinscheduler-1.3.9-bin/install.sh
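Once install.sh finishes, one way to confirm that the services came up is to look at the Java processes on each node with `jps`. The sketch below compares `jps` output against a list of expected process names. `missing_procs` is a hypothetical helper, and the names MasterServer, WorkerServer, LoggerServer, and ApiApplicationServer are what DolphinScheduler 1.3.x is expected to show; treat them as assumptions and adjust them to what your nodes actually report.

```shell
# missing_procs <name>...
# Read `jps` output ("<pid> <name>" per line) on stdin and print every
# expected process name that does not appear. Hypothetical helper.
missing_procs() {
  local seen name
  # Keep only the second column, the process name.
  seen="$(awk '{ print $2 }')"
  for name in "$@"; do
    printf '%s\n' "$seen" | grep -qxF -- "$name" || echo "$name"
  done
}
```

On hadoop102, which hosts the master and the API server in this layout, the check could be `jps | missing_procs MasterServer ApiApplicationServer`; on the worker nodes, `jps | missing_procs WorkerServer LoggerServer`. Empty output means all listed processes were found.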

5. Open the DolphinScheduler Web UI in a browser

http://hadoop102:12345/dolphinscheduler

Initial username / password

admin

dolphinscheduler123

See you next time, bye!
